Featured

The story of AI progress is dominated by scale. Training AI systems with more compute, power and data has consistently led to better performance. Epoch tracks the scale-up of the resources used to train AI systems, and what this means for capabilities and the future of AI. Epoch's research covers training compute trends, data availability, scaling laws, hardware constraints, and the question of whether scaling can continue through the end of the decade.

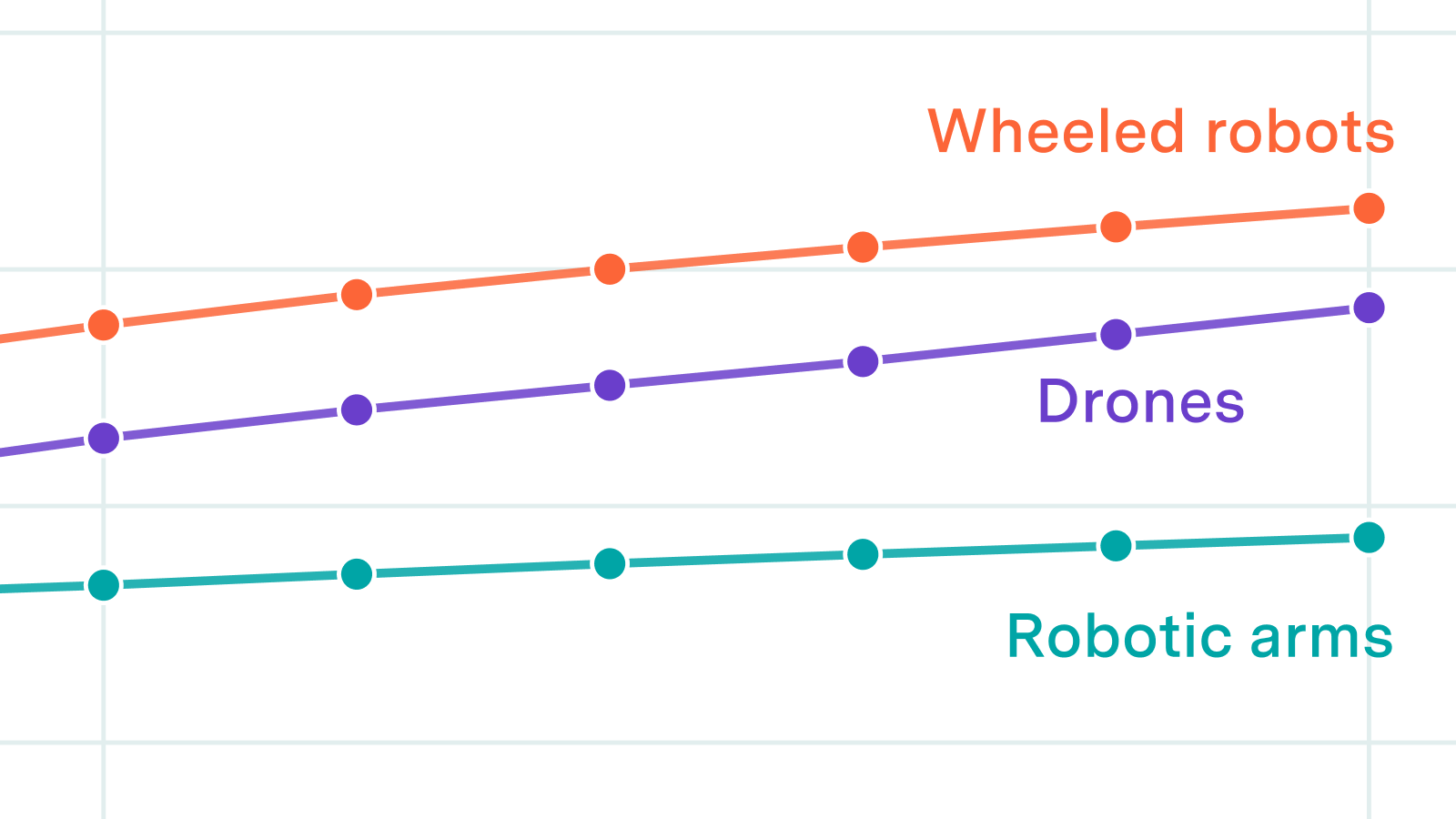

We look at reference classes, factory buildout timelines, and upstream component supply to estimate plausible production rates for humanoids, quadrupeds, robotic arms, wheeled robots, and drones.

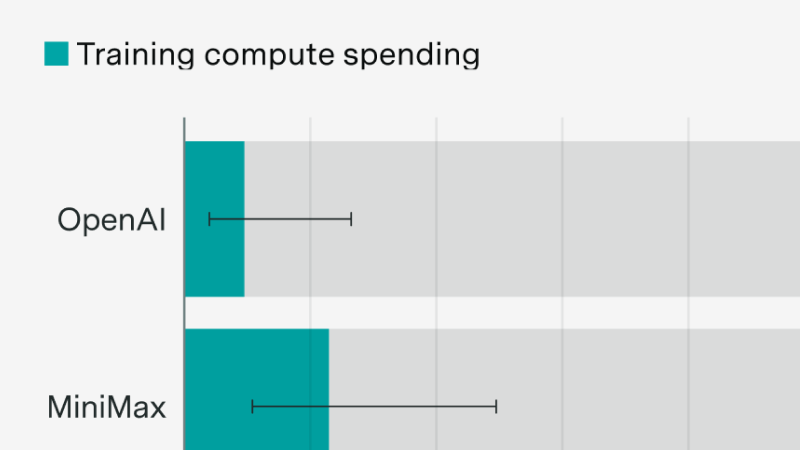

New evidence following the MiniMax and Z.ai IPOs

An opinionated guide to “algorithmic progress” and why it matters

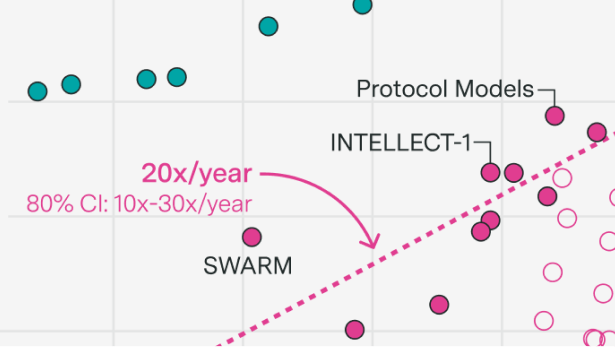

Decentralized training over the internet promises to scale training to the limits of the internet.

The existing debate rests on data and assumptions that are shakier than most people realize. To make progress, we need better evidence, and experiments are the best way to get it on the margin.

OpenAI focused on scaling post-training on a smaller model

Continual learning, scaling RL, and research feedback loops

If scaling persists to 2030, AI investments will reach hundreds of billions of dollars and require gigawatts of power. Benchmarks suggest AI could improve productivity in valuable areas such as scientific R&D.

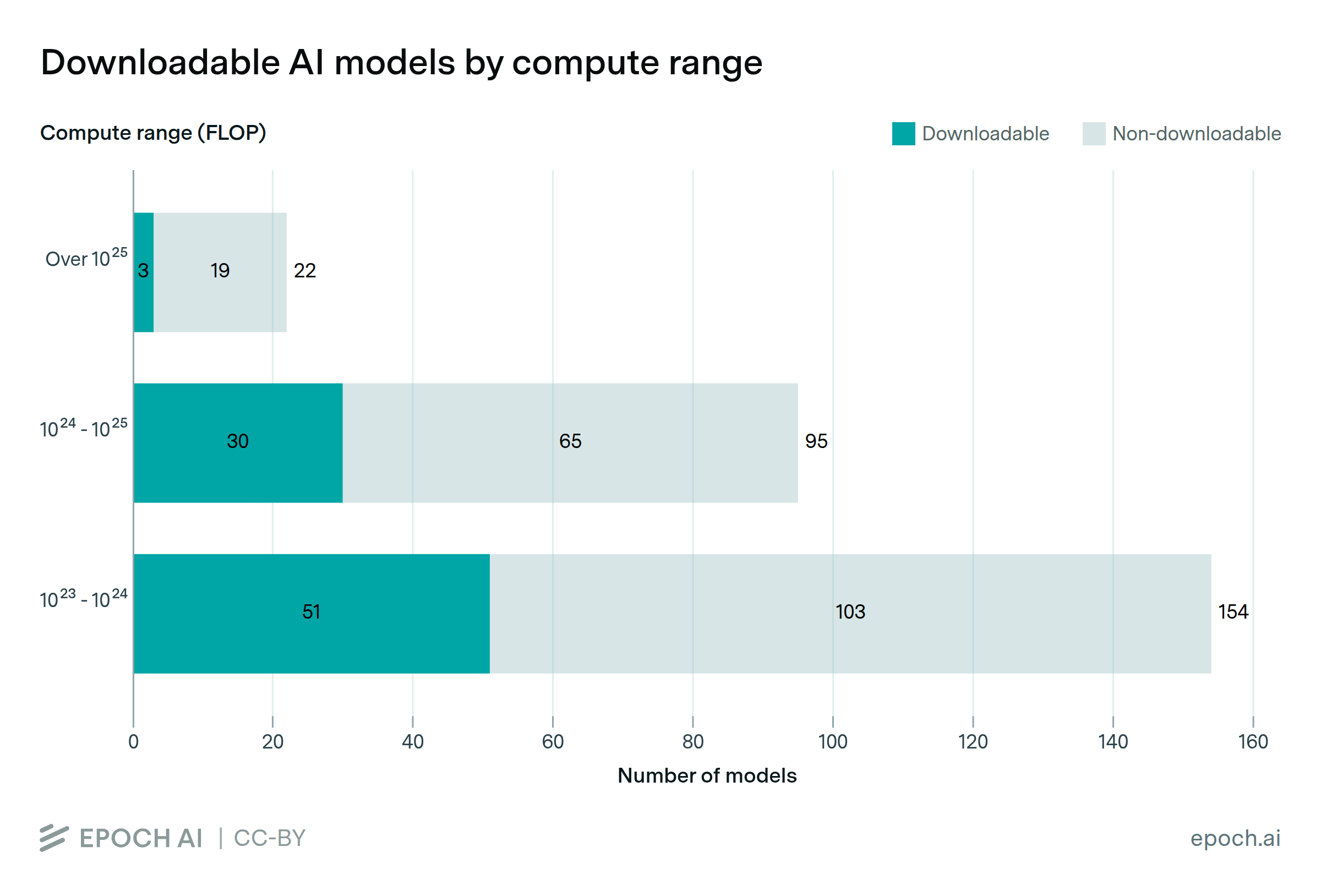

'Training compute' is constantly evolving, and compute-based AI policies must adapt to remain relevant

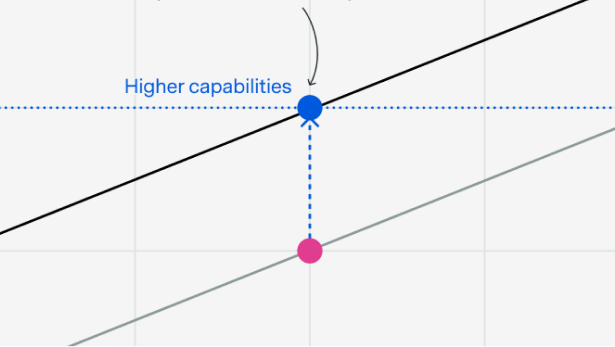

A heavily underappreciated dynamic when thinking about AI timelines.

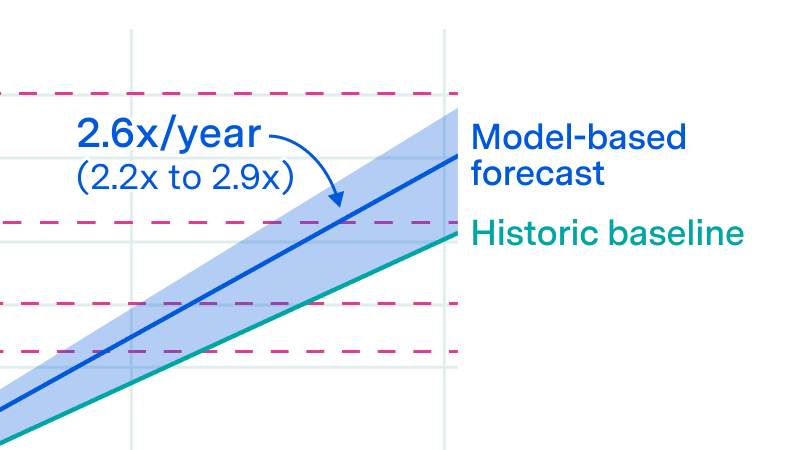

Epoch AI researchers Jaime Sevilla and Yafah Edelman forecast AI progress to 2040: coding automation, 10% GDP growth, and wild uncertainty after 2035.

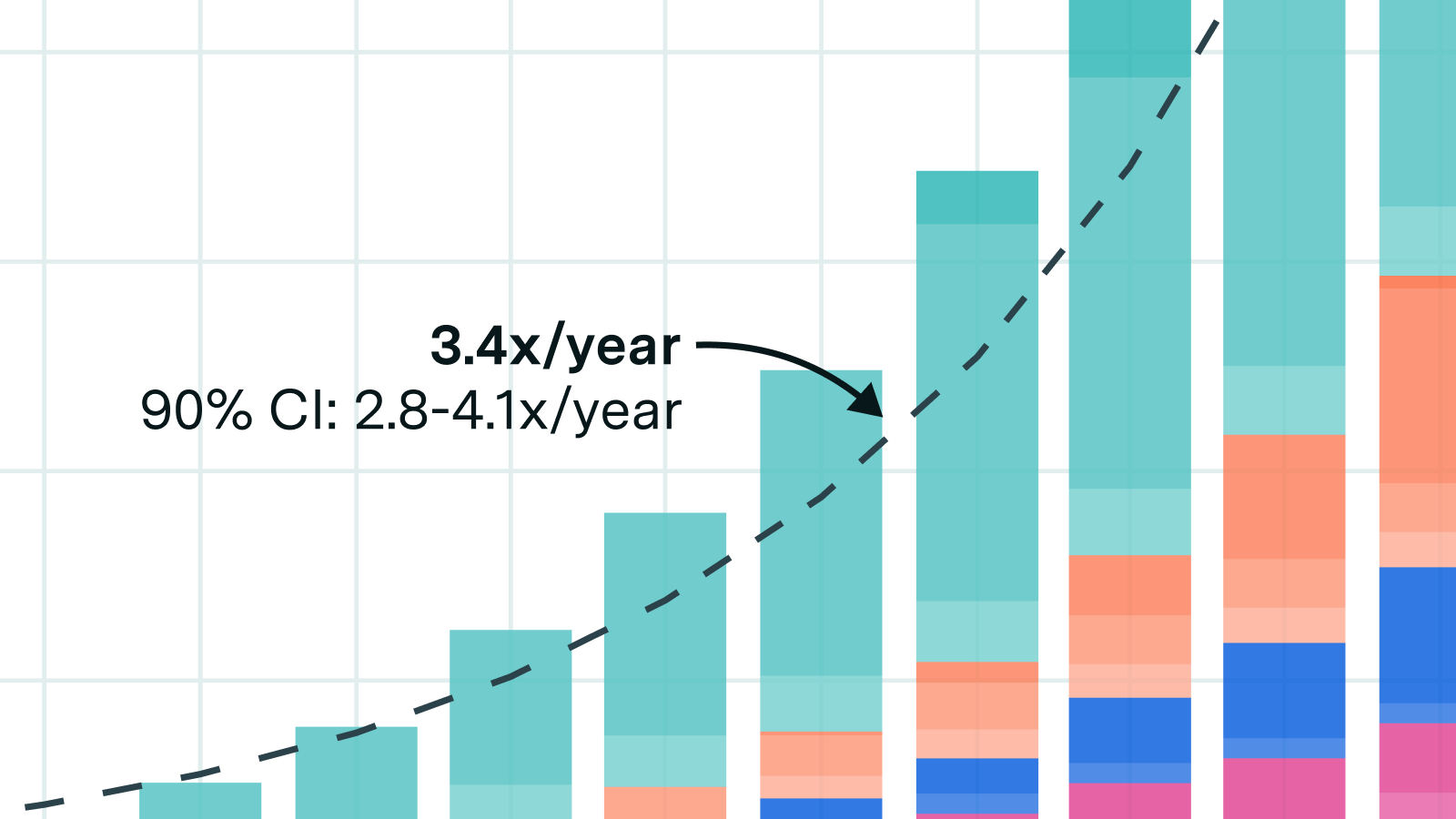

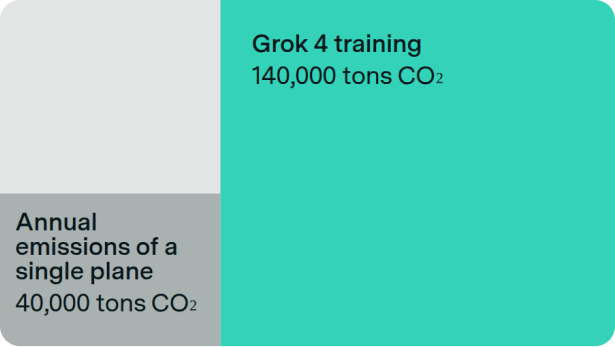

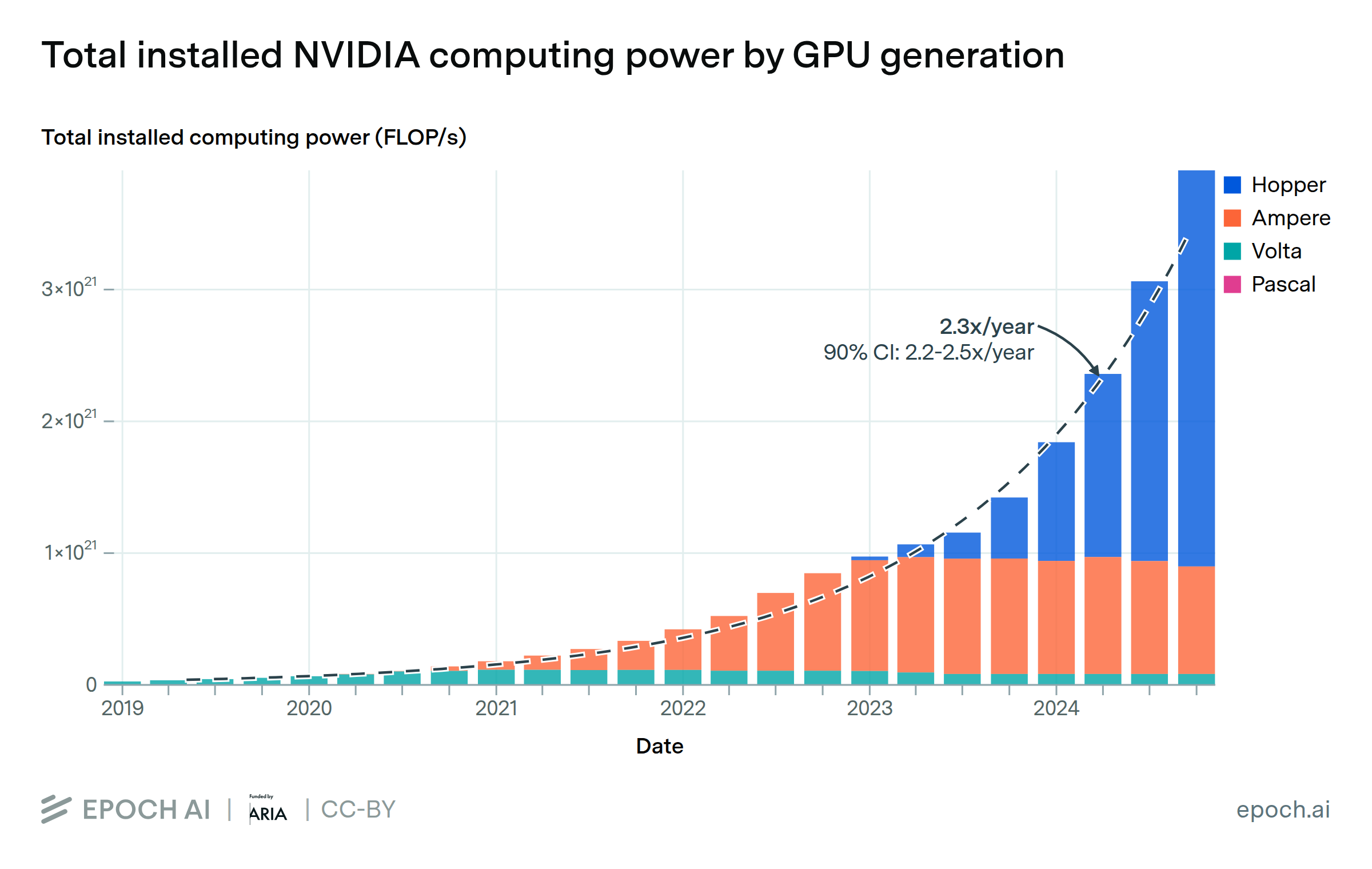

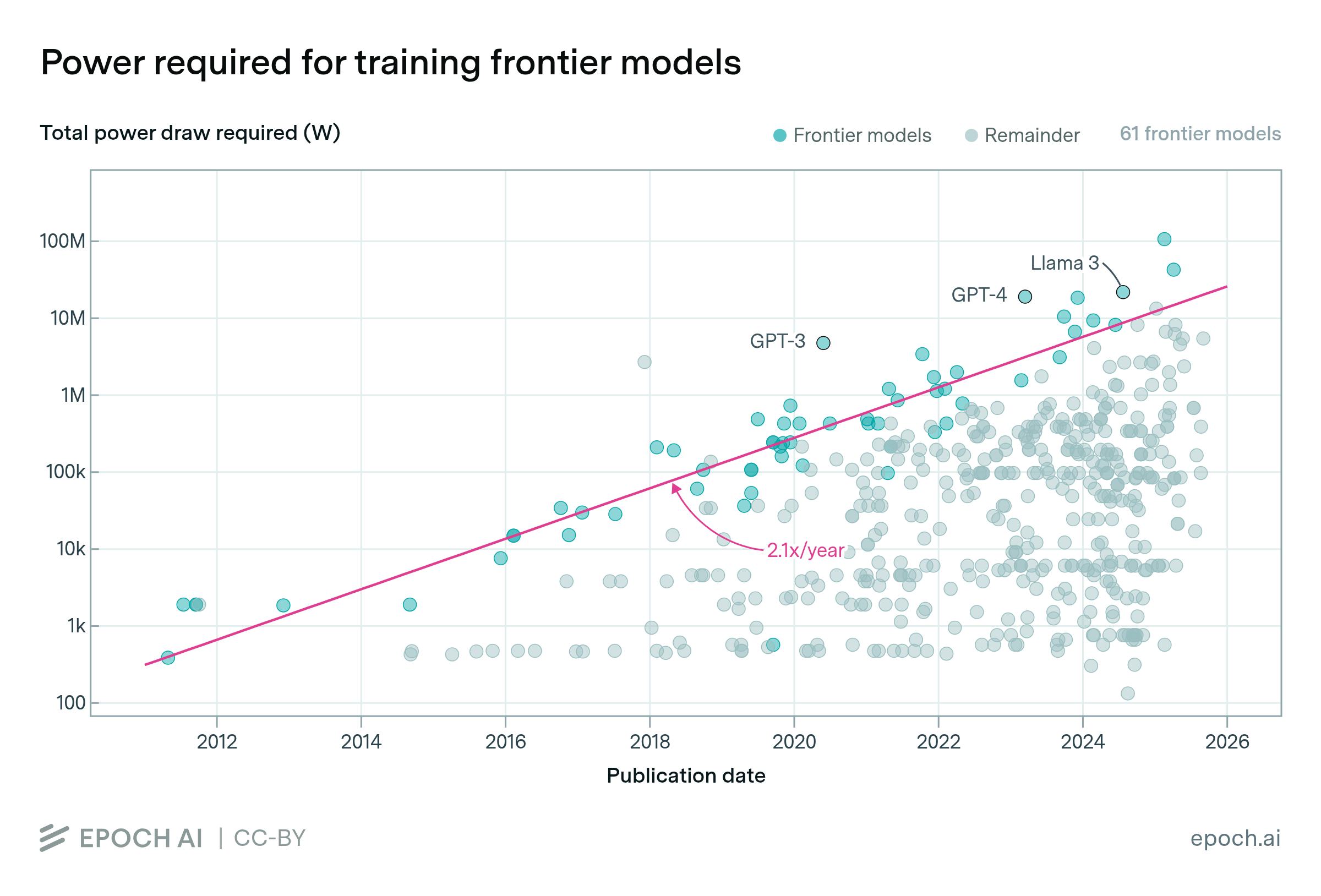

The power required to train the largest frontier models is growing by more than 2x per year, and is on trend to reach multiple gigawatts by 2030.

Chinese hardware is closing the gap, but major bottlenecks remain

An AI Manhattan Project could accelerate compute scaling by two years.

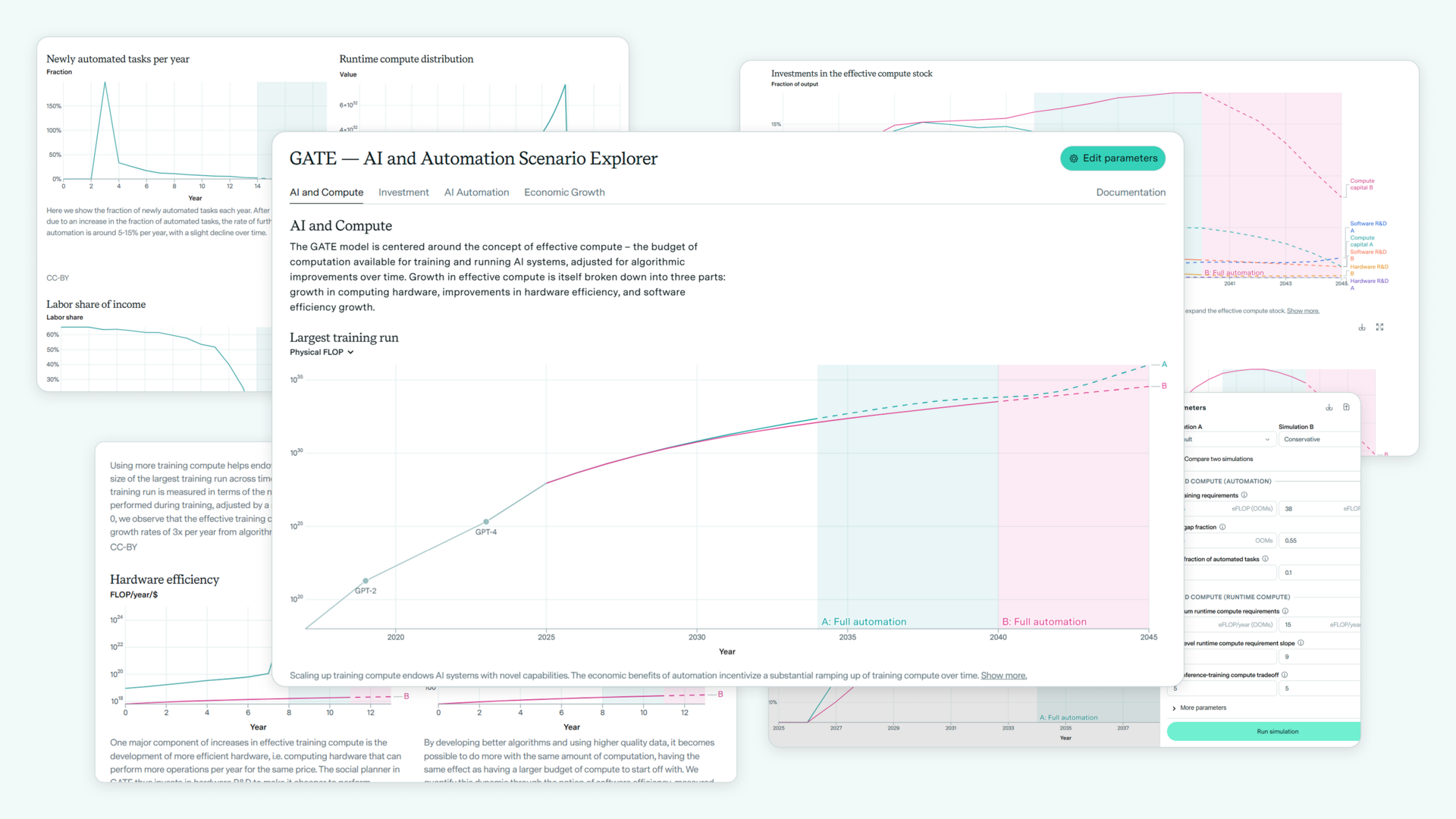

GATE model shows AI-driven growth surges more easily than expected and supports much larger investments—advocating moderate optimism.

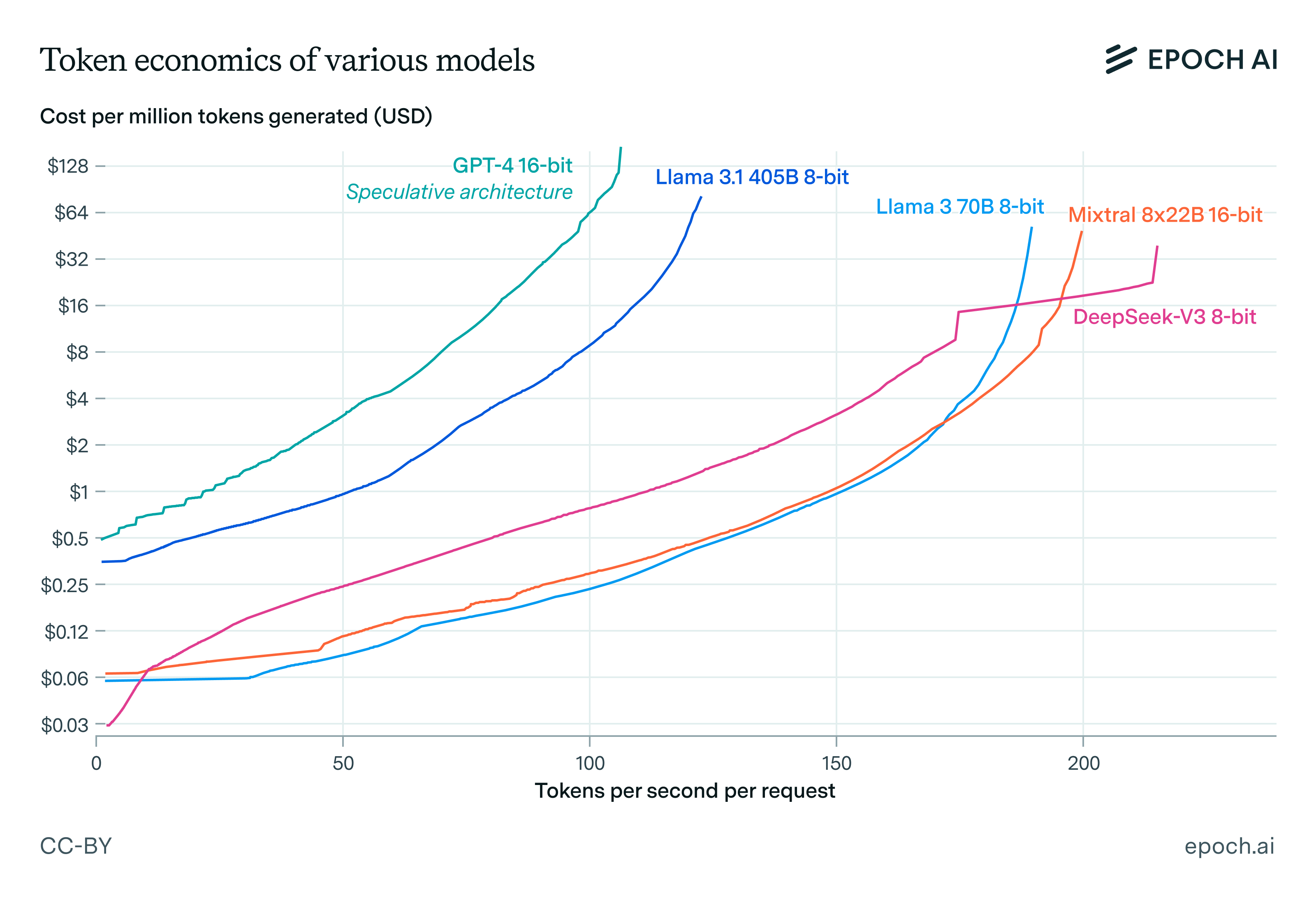

We investigate how speed trades off against cost in language model inference. Among other results, we find that inference latency scales with the square root of model size and the cube root of memory bandwidth.

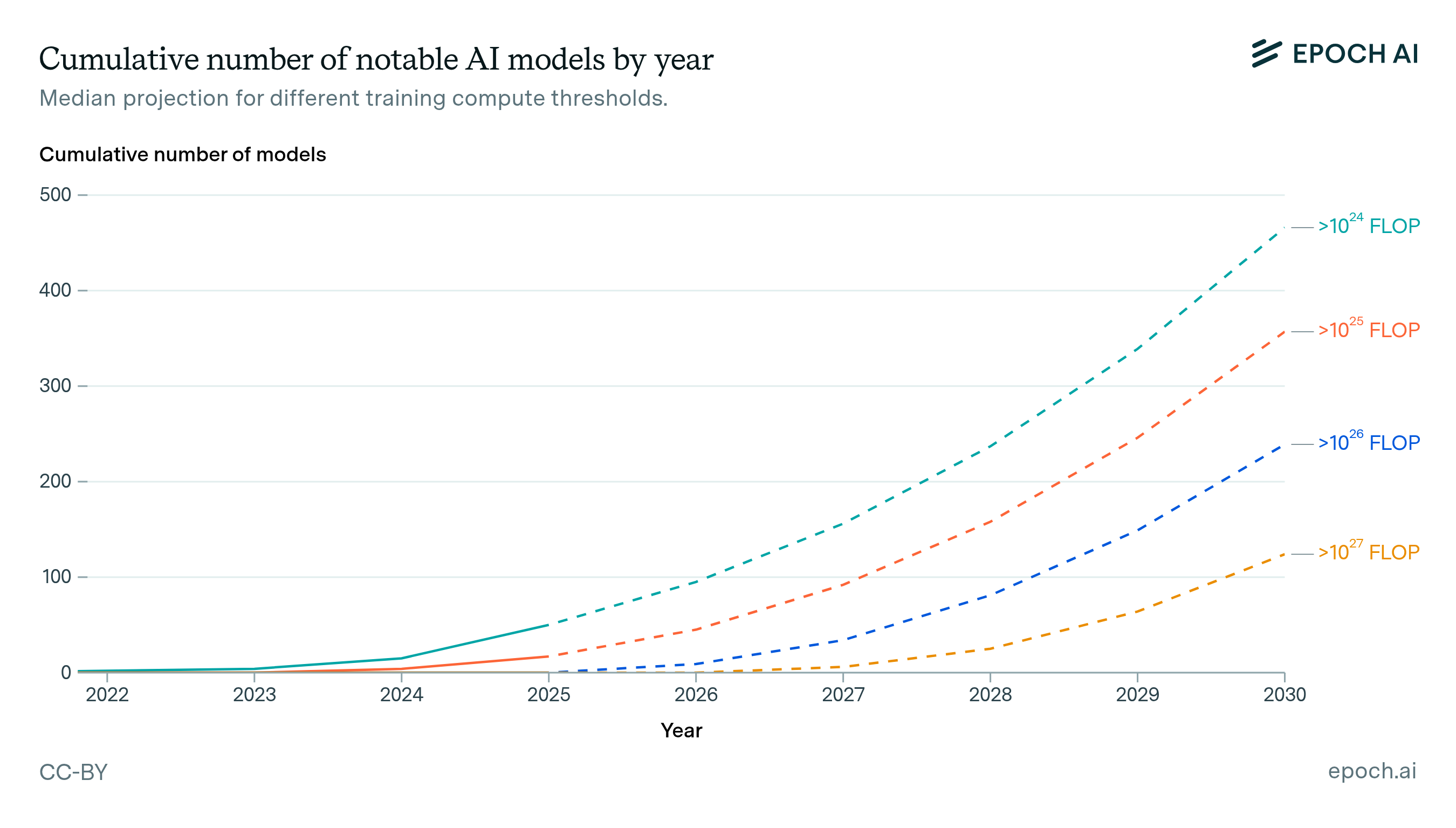

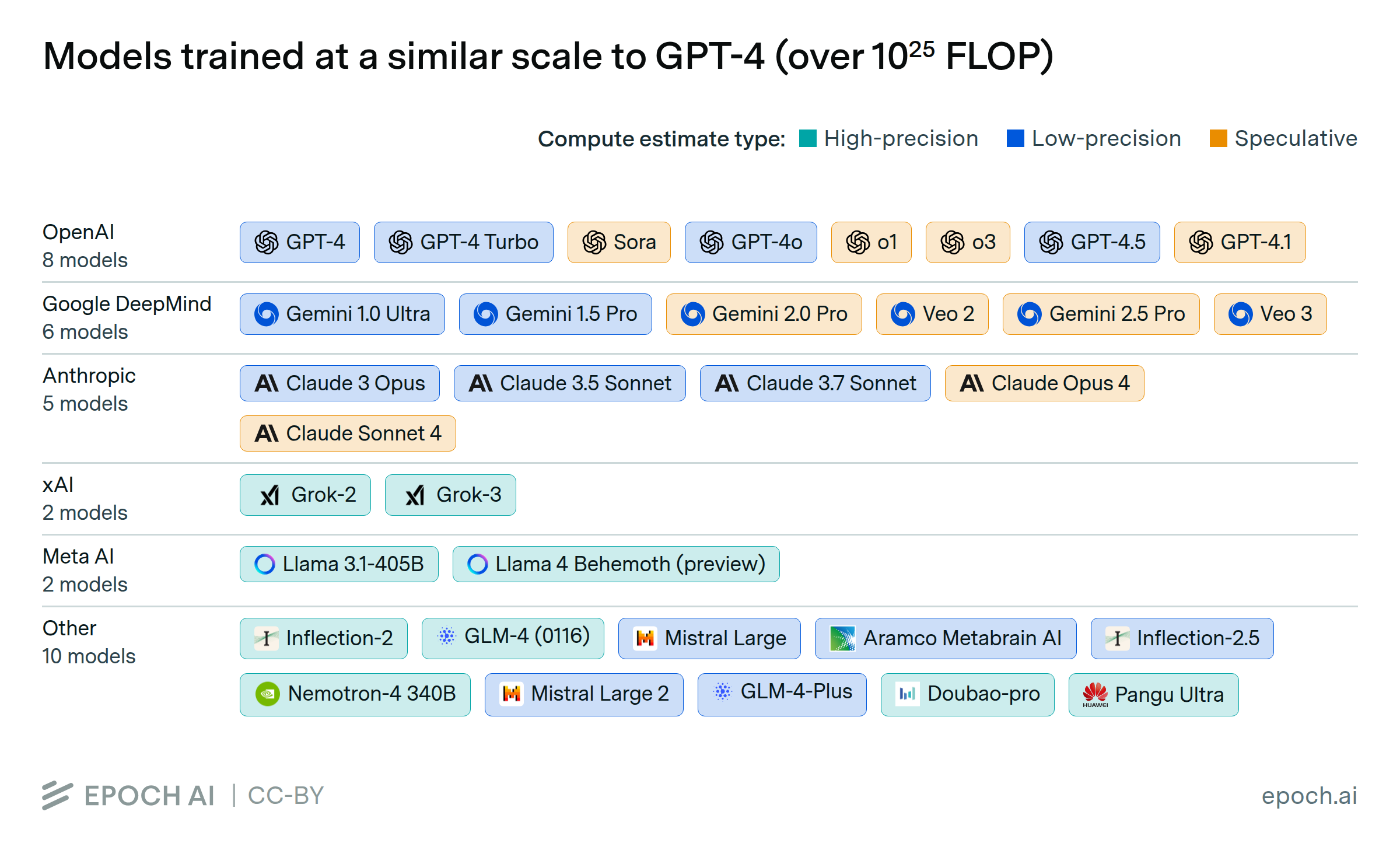

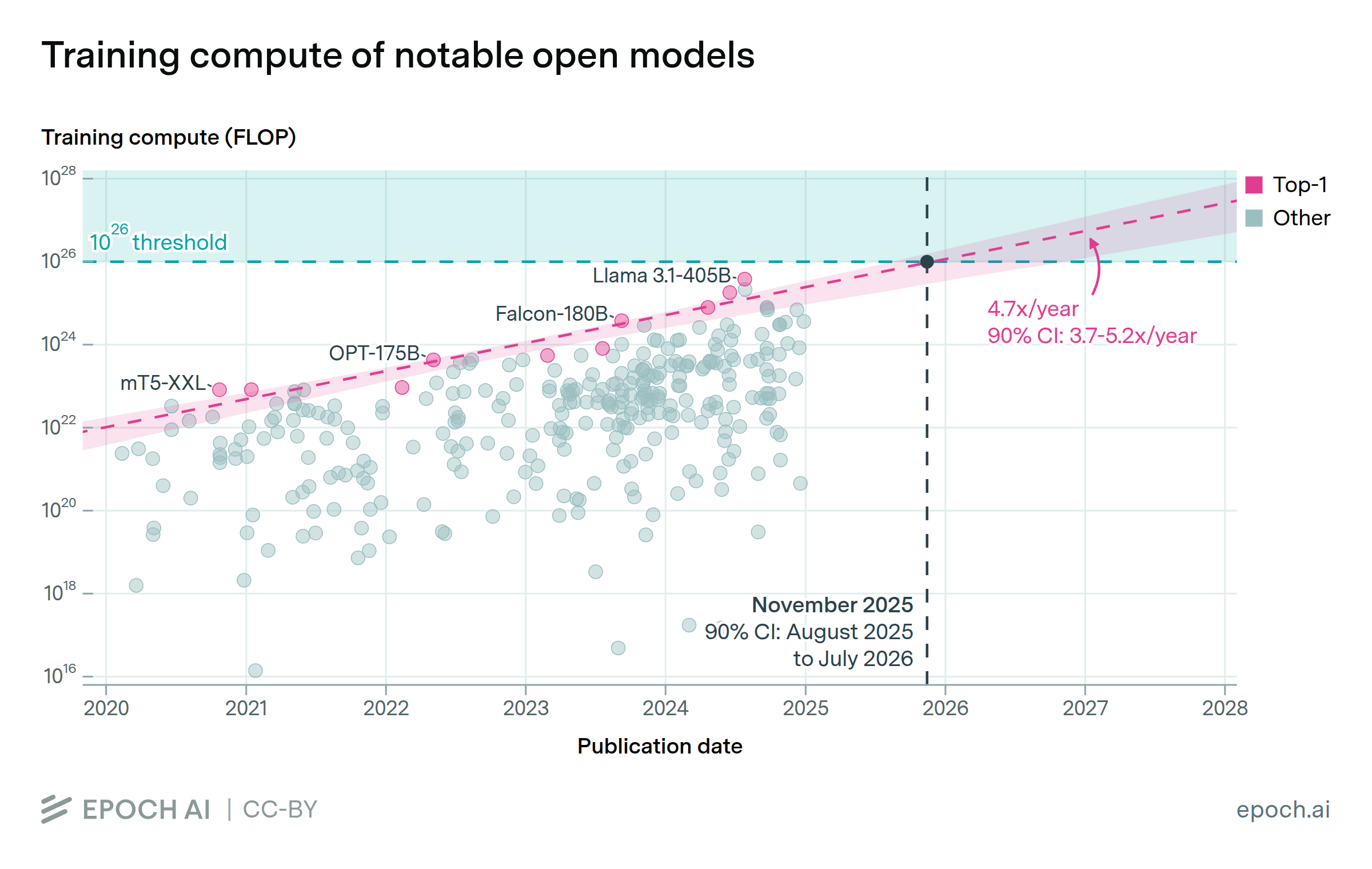

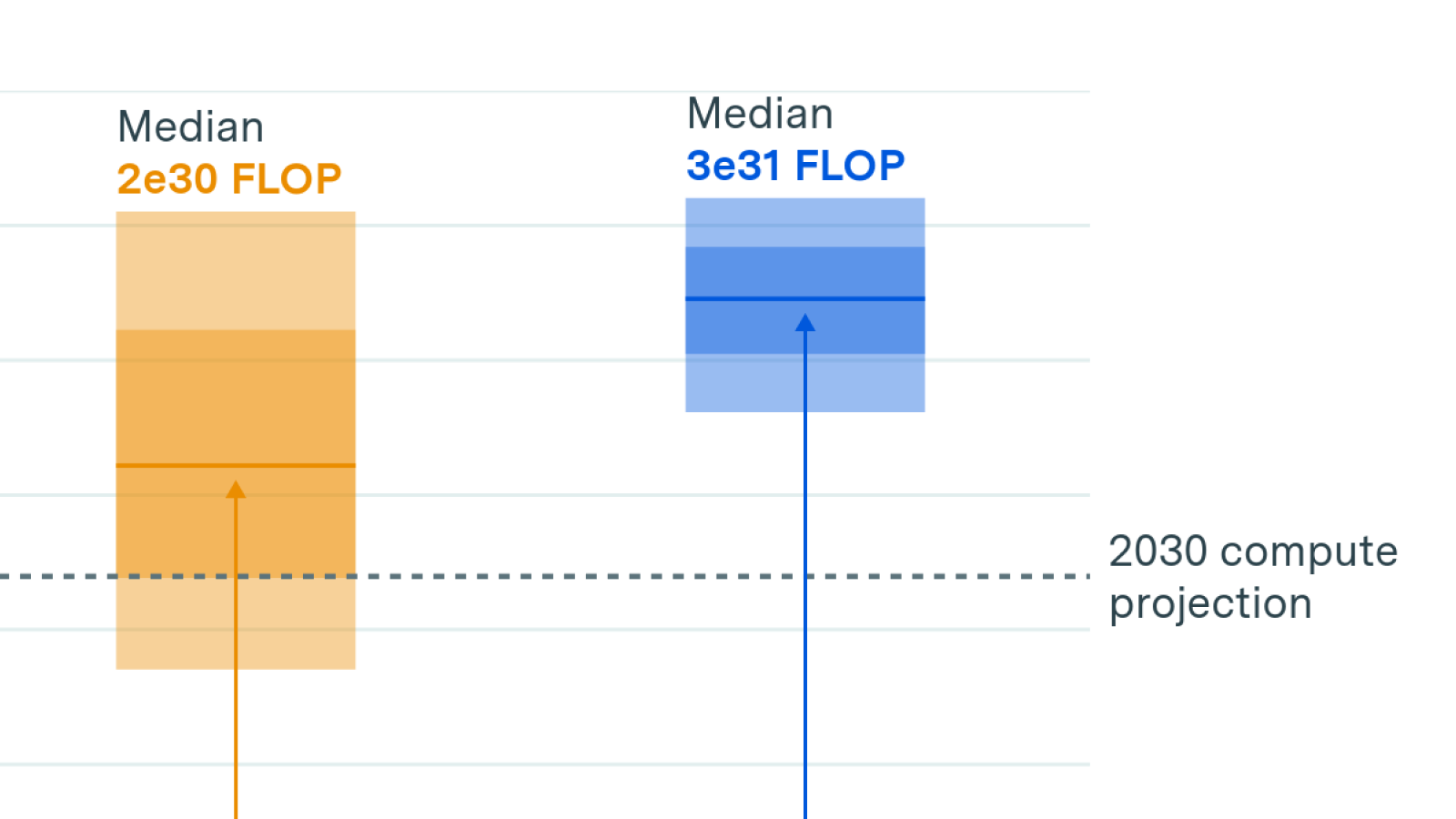

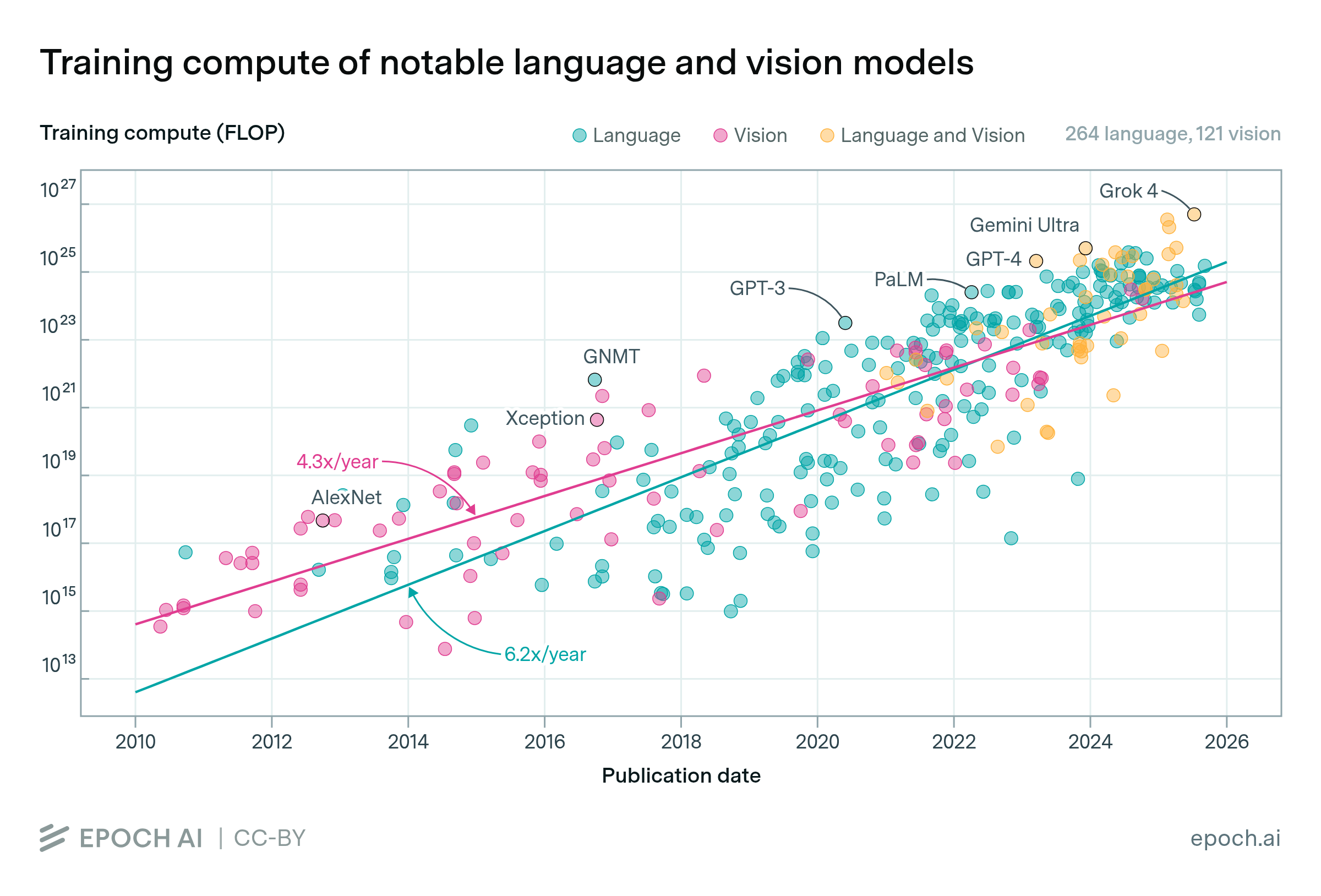

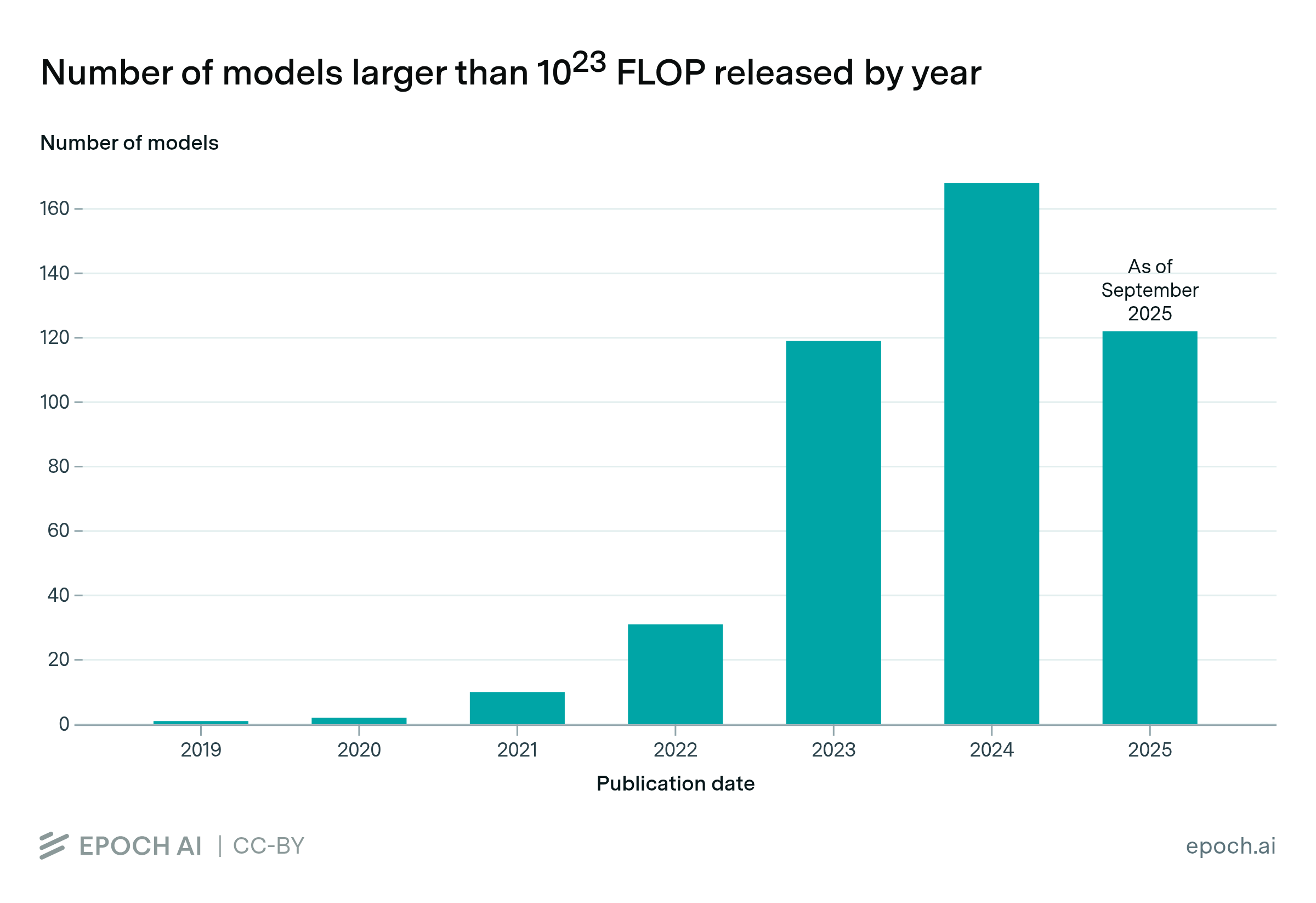

We project how many notable AI models will exceed training compute thresholds, with results accessible in an interactive tool. Model counts rapidly increase from 10 above 1e26 FLOP by 2026, to over 200 by 2030.

This week's issue is a guest post by Henry Josephson, who is a research manager at UChicago's XLab and an AI governance intern at Google DeepMind.

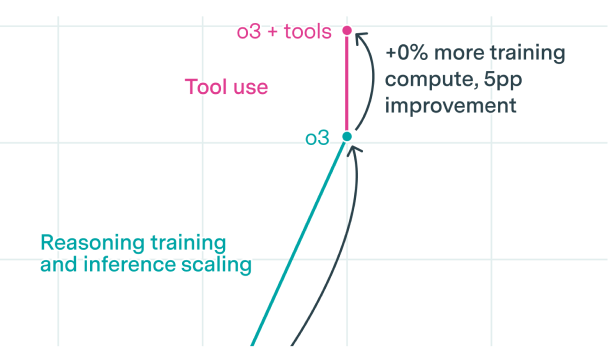

Available evidence suggests that rapid growth in reasoning training can continue for a year or so.

In this Gradient Updates weekly issue, Ege discusses the case for multi-decade AI timelines.

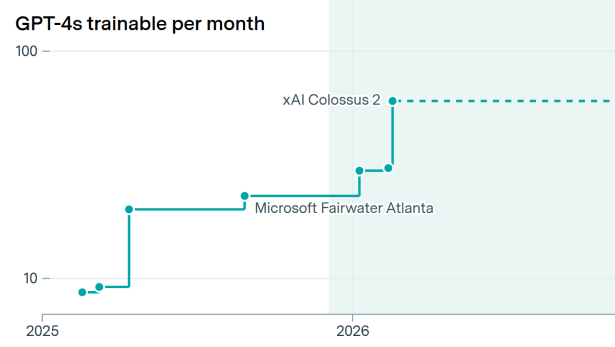

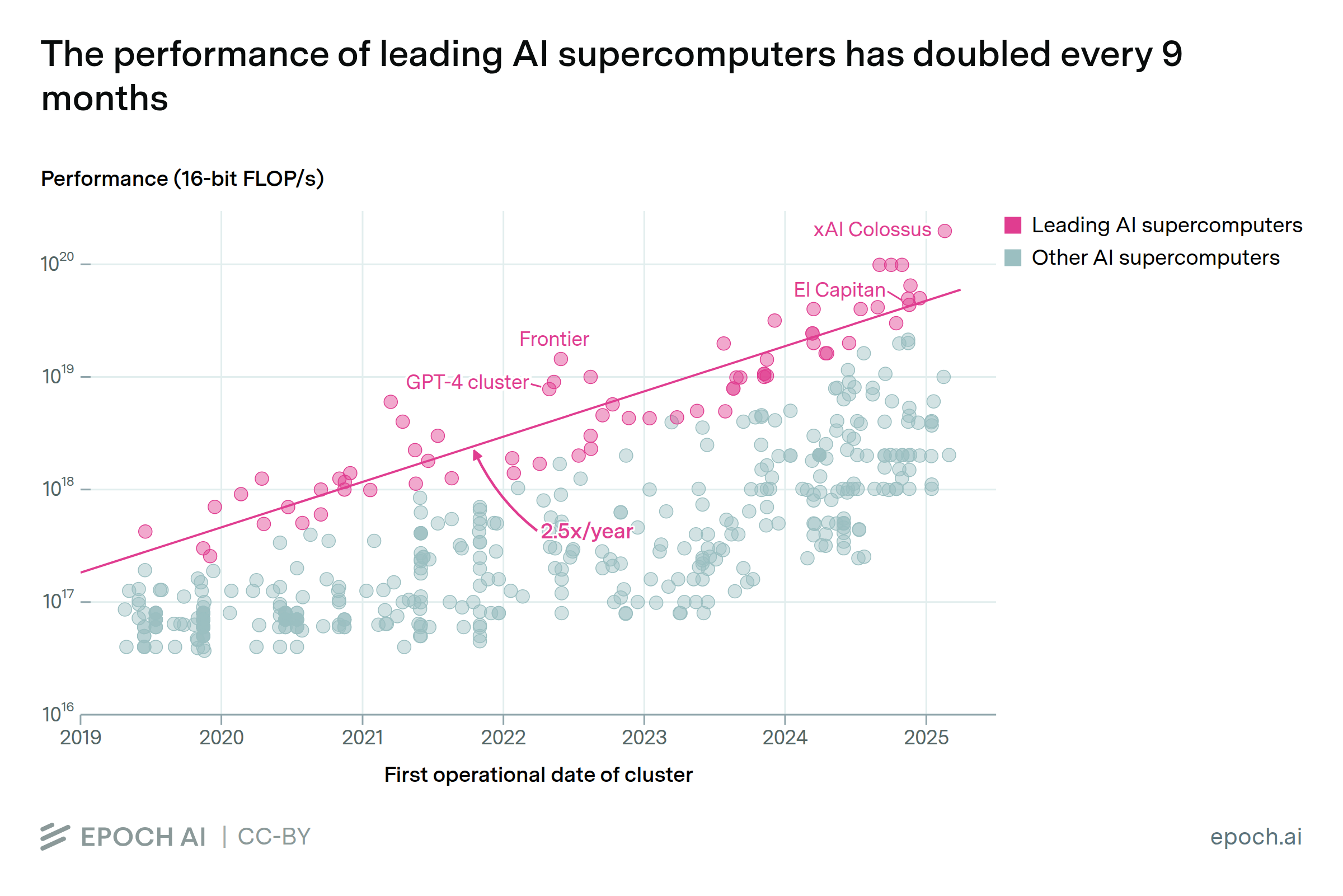

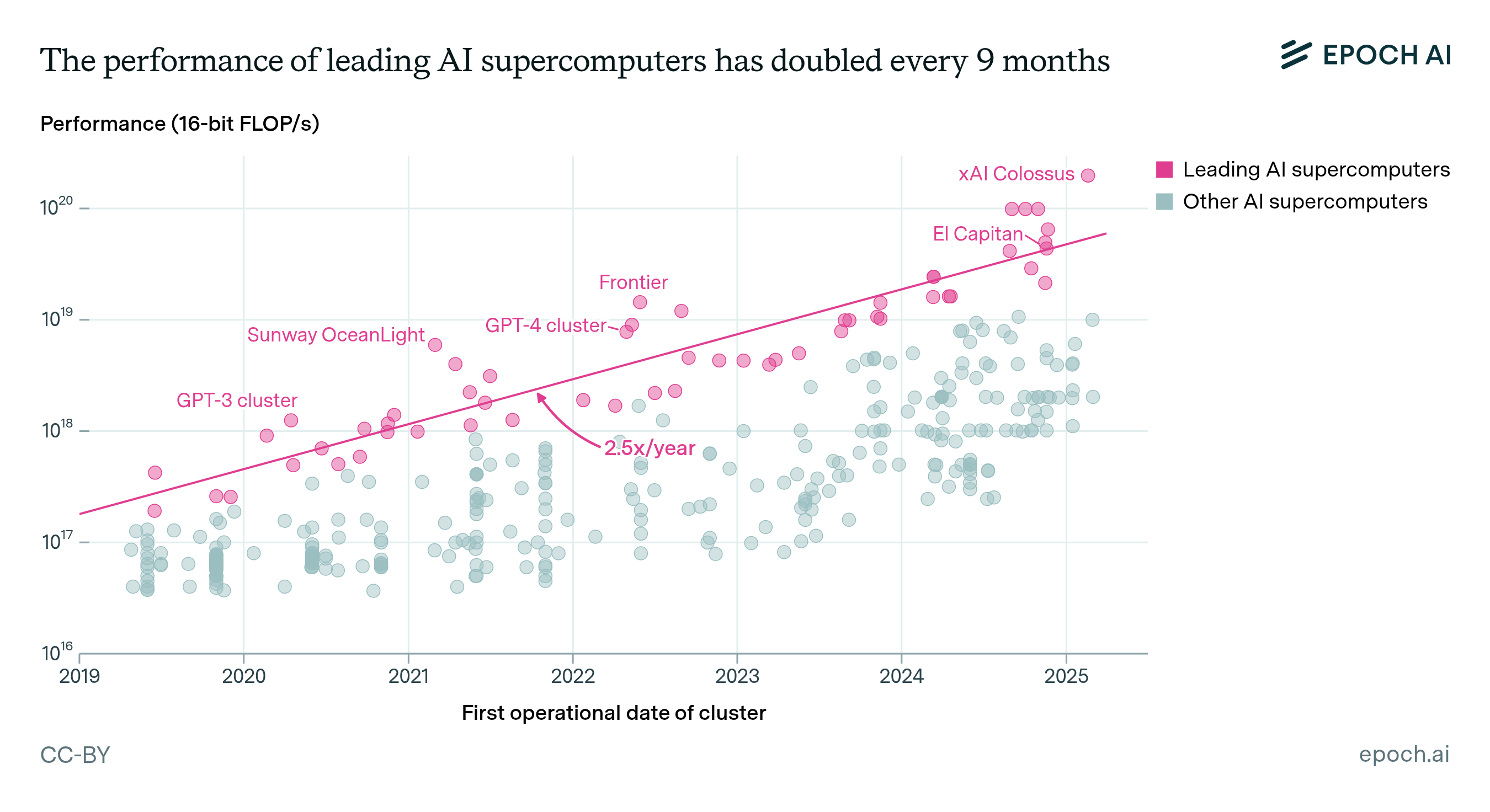

AI supercomputers double in performance every 9 months, cost billions of dollars, and require as much power as mid-sized cities. Companies now own 80% of all AI supercomputers, while governments’ share has declined.

In this podcast episode, two Epoch AI researchers with relatively long and short AGI timelines candidly examine the roots of their disagreements.

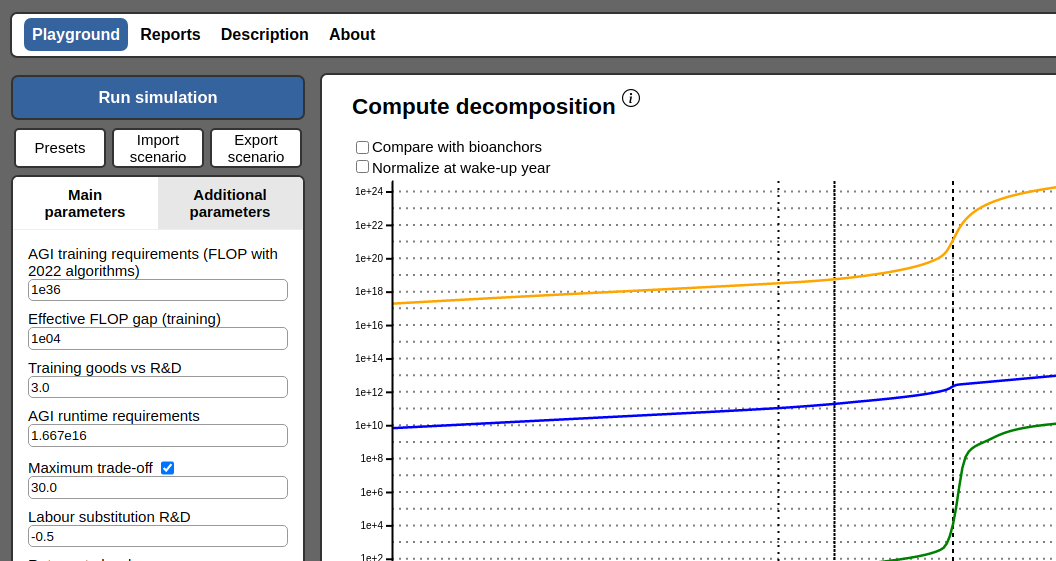

We introduce a compute-centric model of AI automation and its economic effects, illustrating key dynamics of AI development. The model suggests large AI investments and subsequent economic growth.

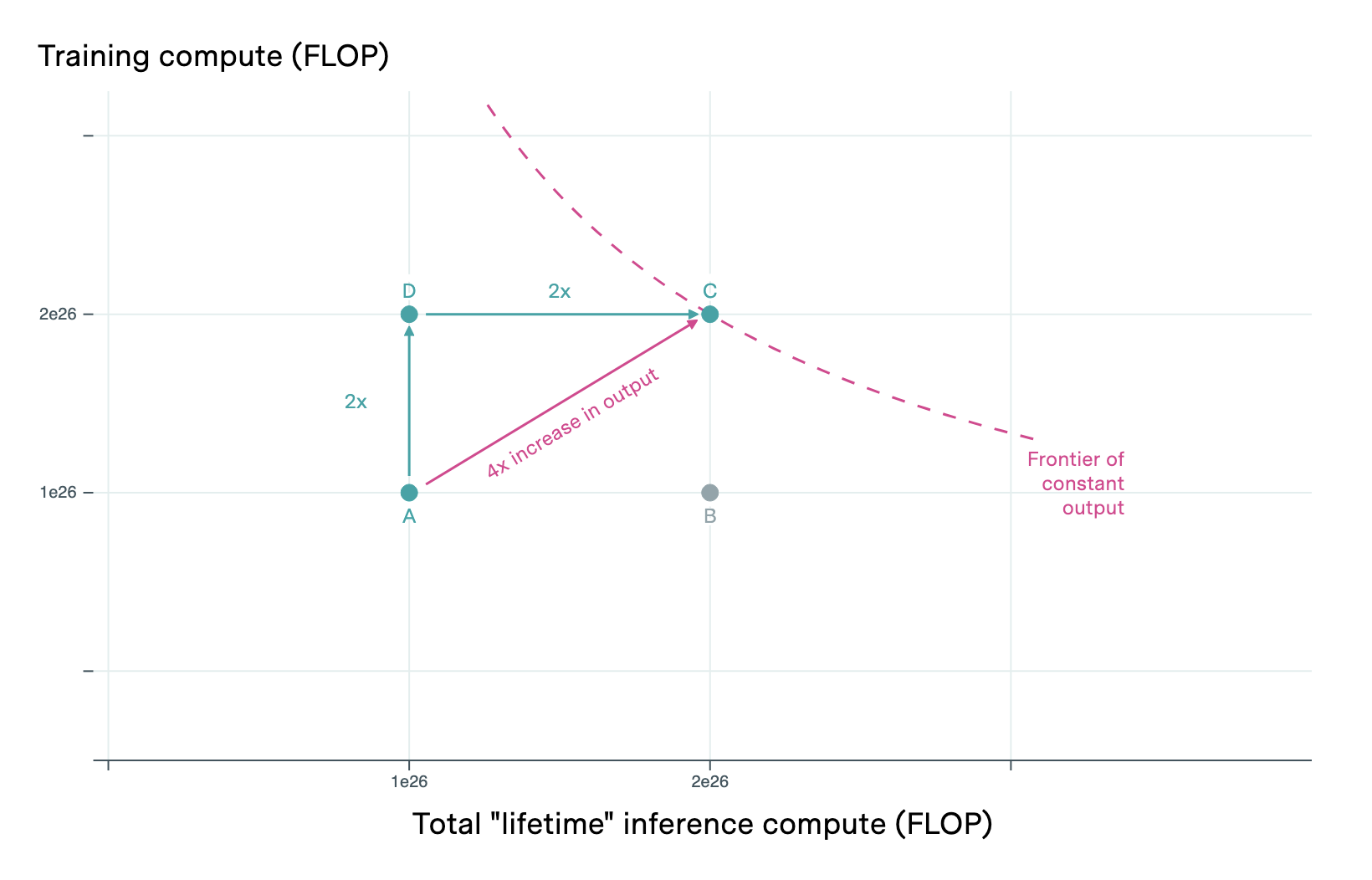

AI's “train-once-deploy-many” advantage yields increasing returns: doubling compute more than doubles output by increasing models' inference efficiency and enabling more deployed inference instances.

Forecasting AI progress requires more than extrapolating current capabilities; understanding fundamental task difficulty is key to predicting future breakthroughs.

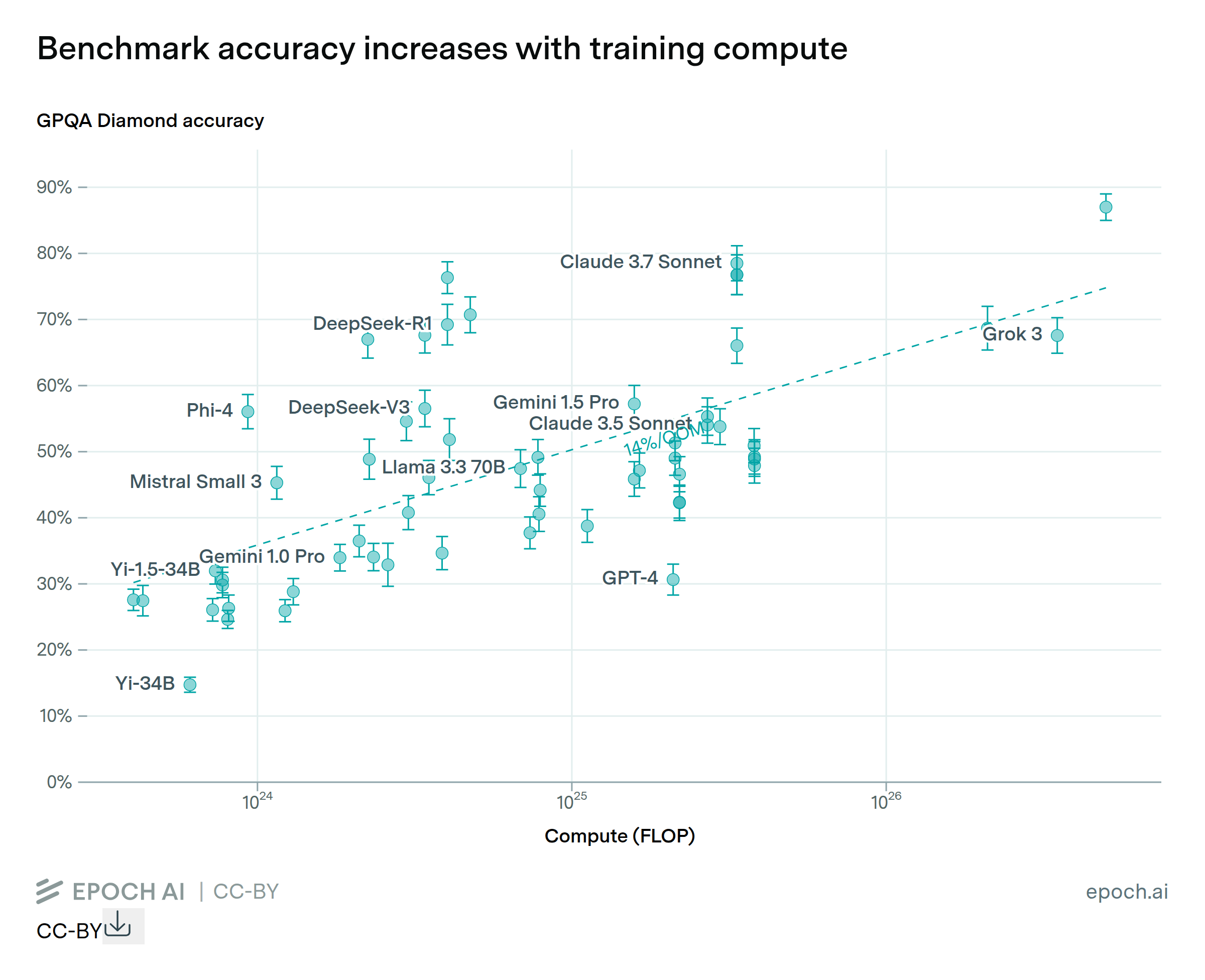

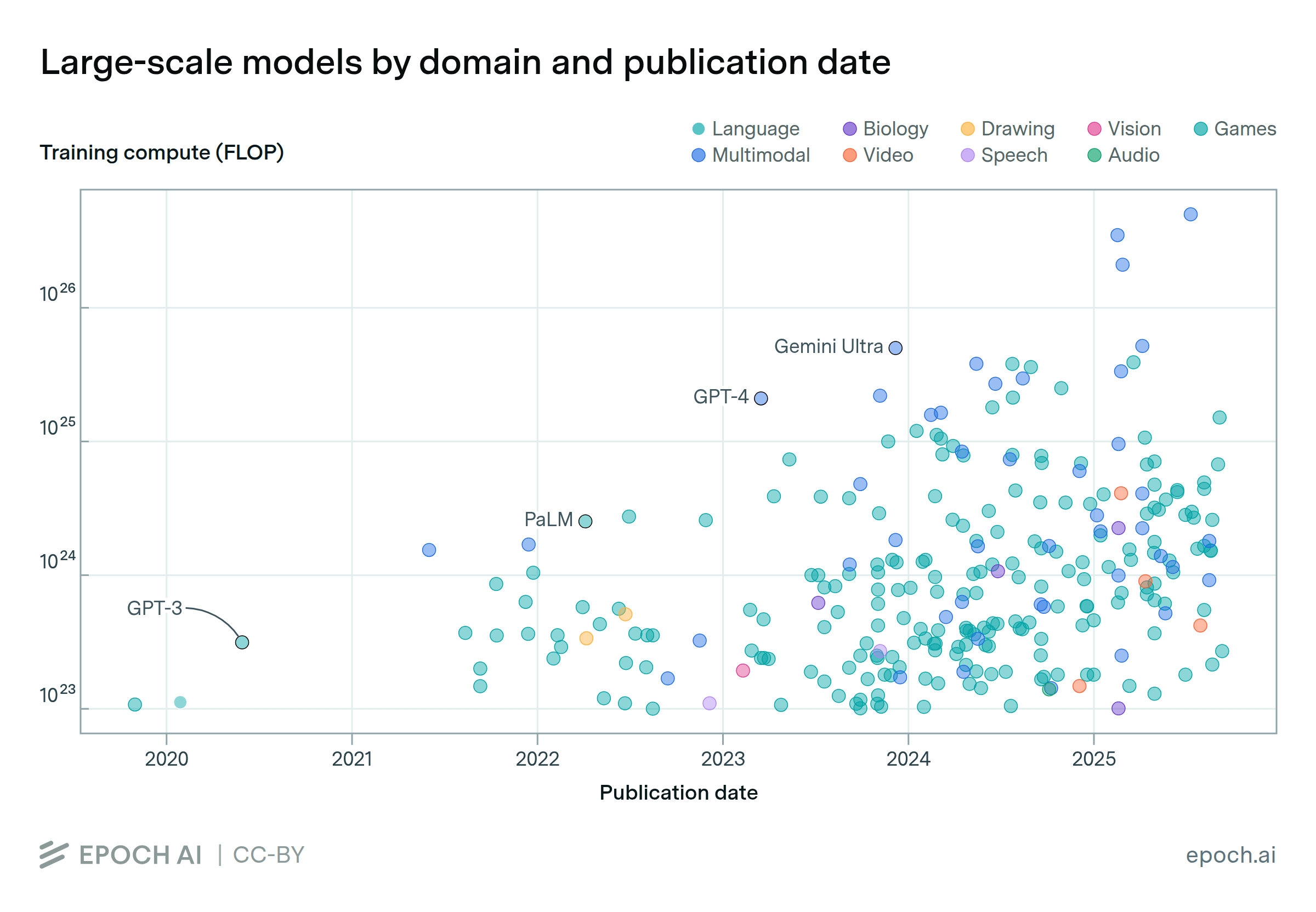

AI progress is accelerating, with next-gen models surpassing GPT-4 in compute power, driving major leaps in reasoning, coding, and math capabilities.

Algorithmic progress in AI may not reduce compute spending—instead, it could drive higher investment as efficiency unlocks new opportunities.

This Gradient Updates issue explores DeepSeek-R1's architecture, training cost, and pricing, showing how it rivals OpenAI's o1 at 30x lower cost.

Epoch AI presents its first podcast, which explores AI scaling trends, power demands, chip production, and data needs, and how continued progress could transform labor markets and potentially accelerate global economic growth to unprecedented levels.

This Gradient Updates issue explores how mixture-of-experts models compare to dense models in inference, focusing on costs, efficiency, and decoding dynamics.

In this Gradient Updates weekly issue, Ege discusses how frontier language models have unexpectedly reversed course on scaling, with current models an order of magnitude smaller than GPT-4.

We introduce an interactive simulation tool which can simulate distributed training runs of large language models under ideal conditions.

Our analysis shows hardware failures won't limit AI training scale. GPU memory-based checkpointing enables training beyond millions of GPUs.

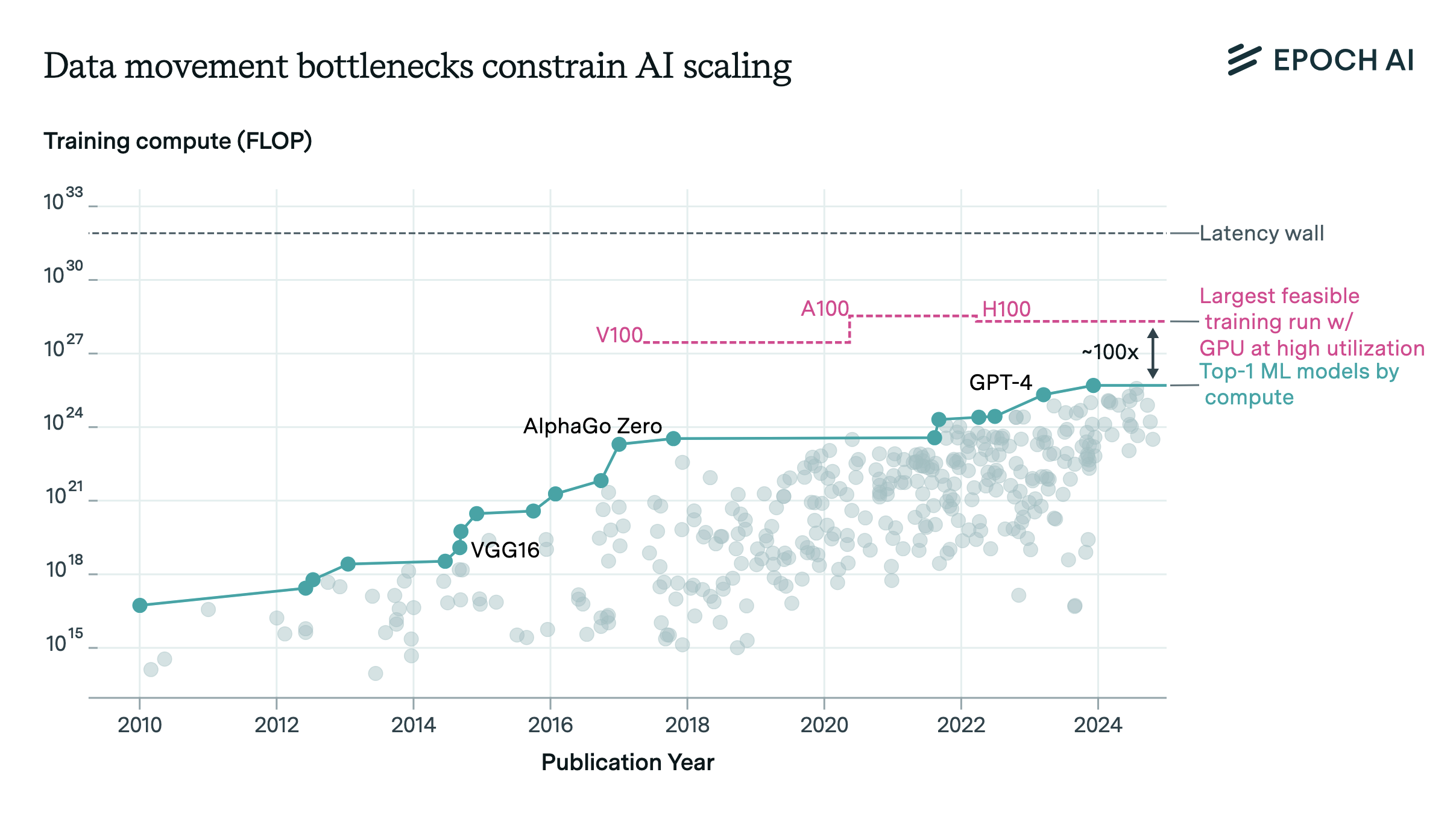

Data movement bottlenecks limit LLM scaling beyond 2e28 FLOP, with a "latency wall" at 2e31 FLOP. We may hit these in ~3 years. Aggressive batch size scaling could potentially overcome these limits.

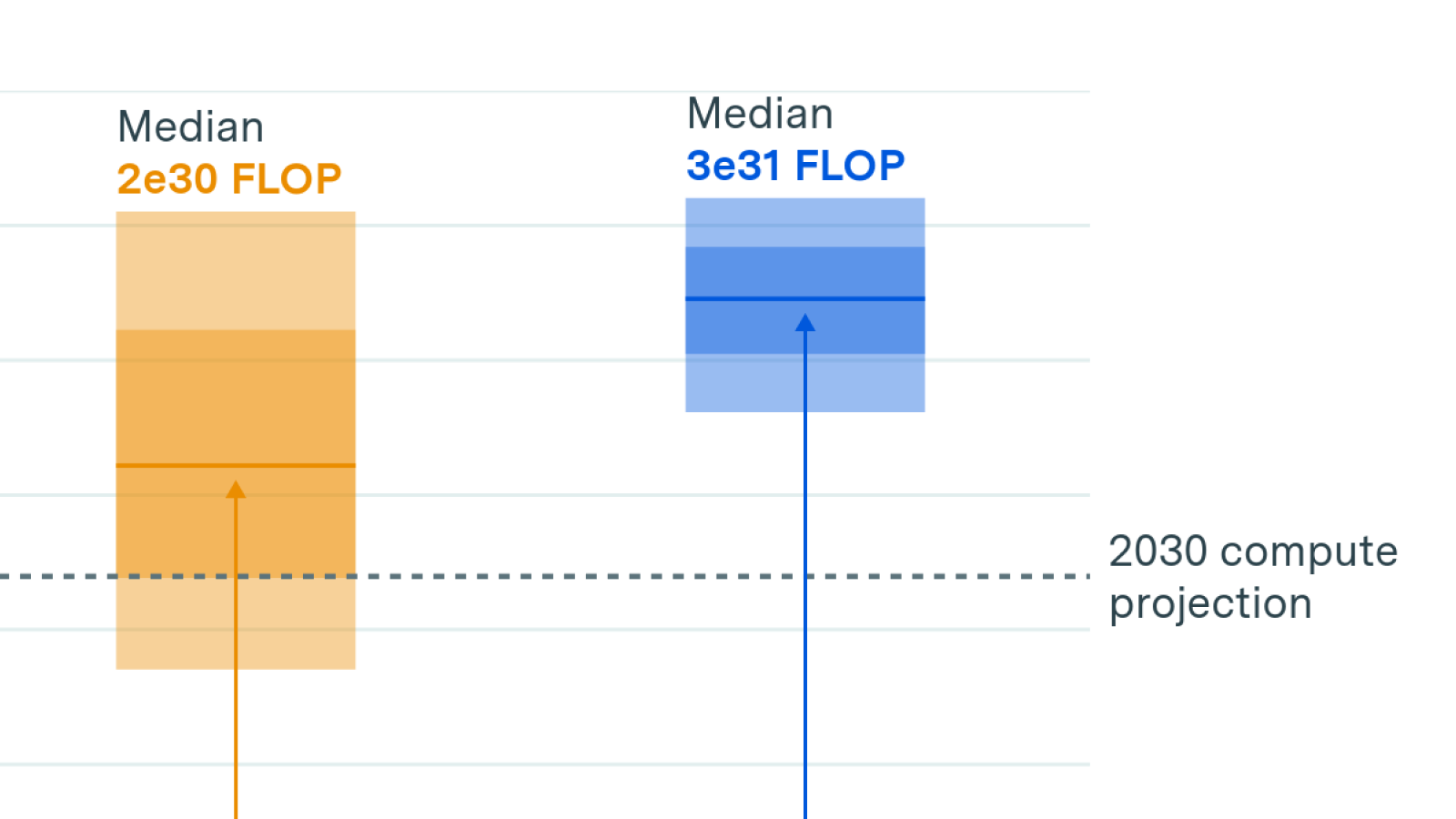

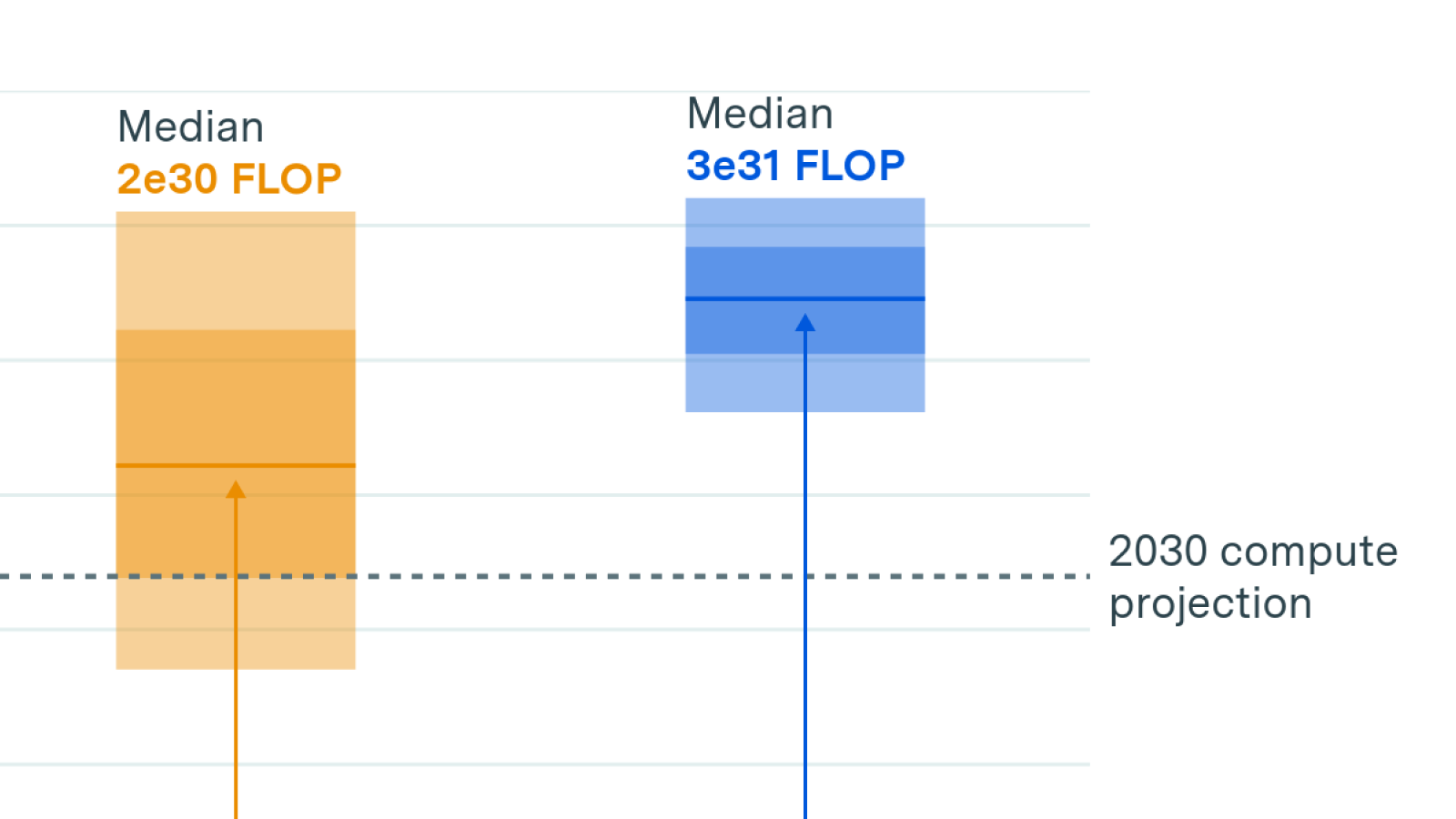

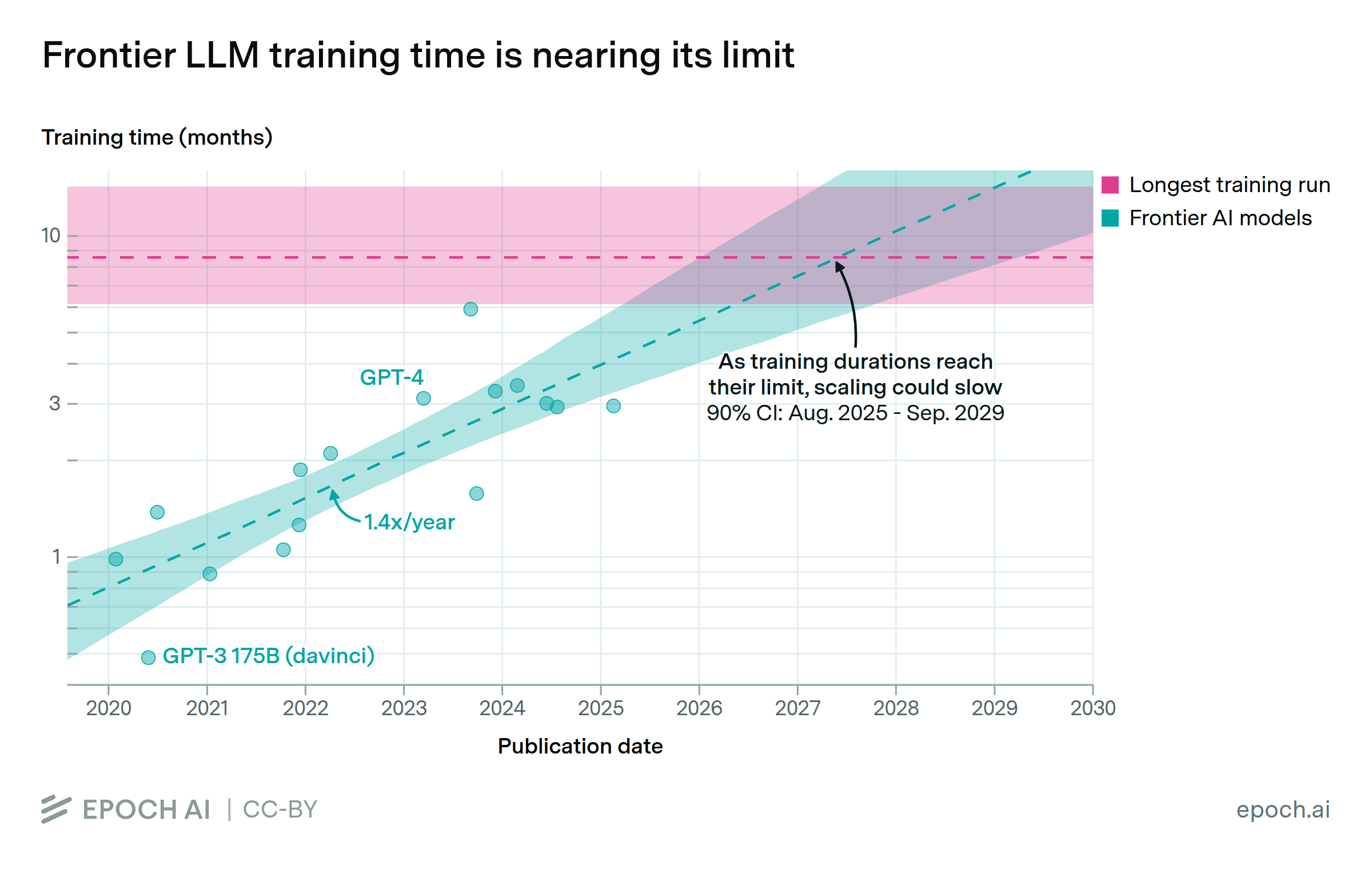

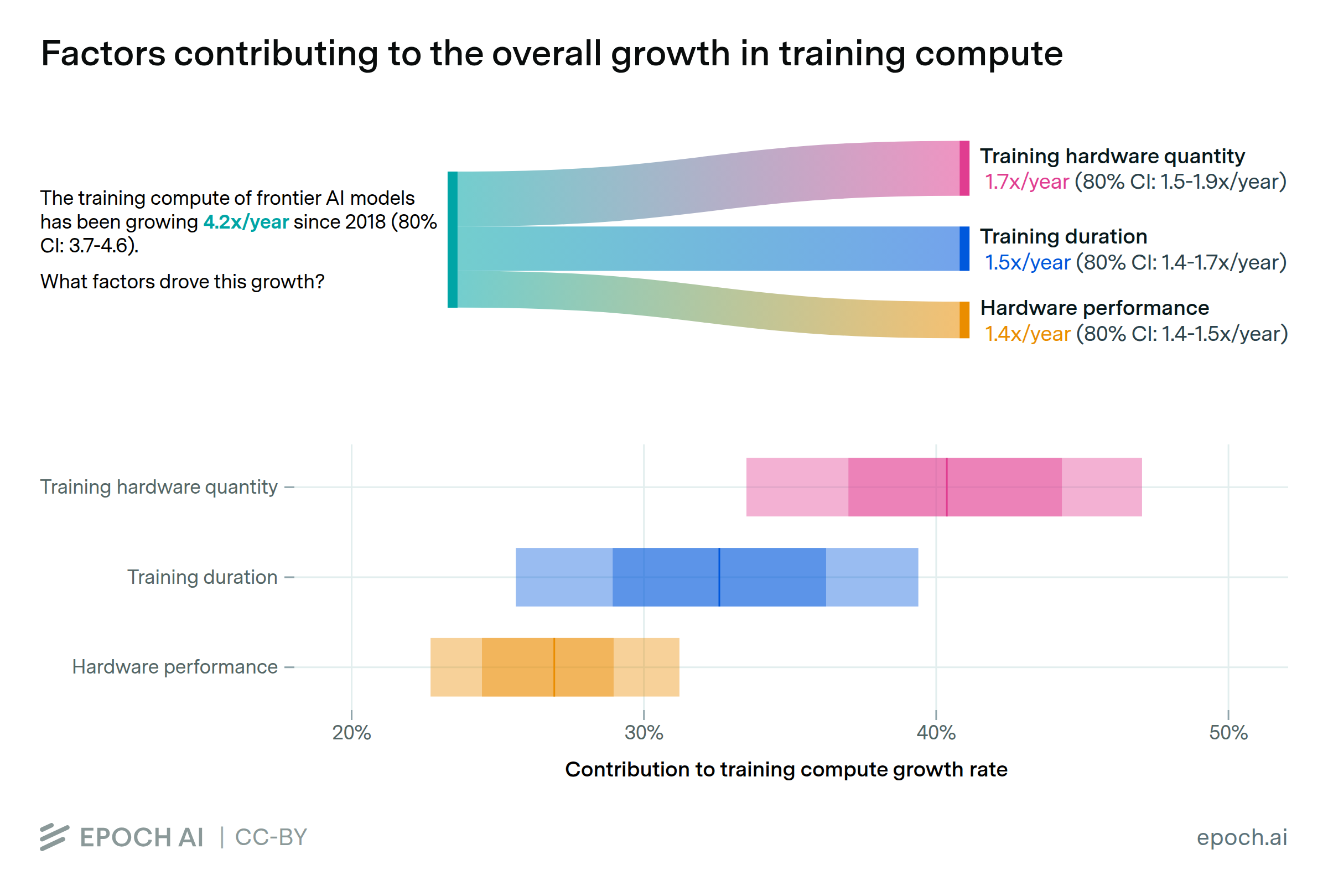

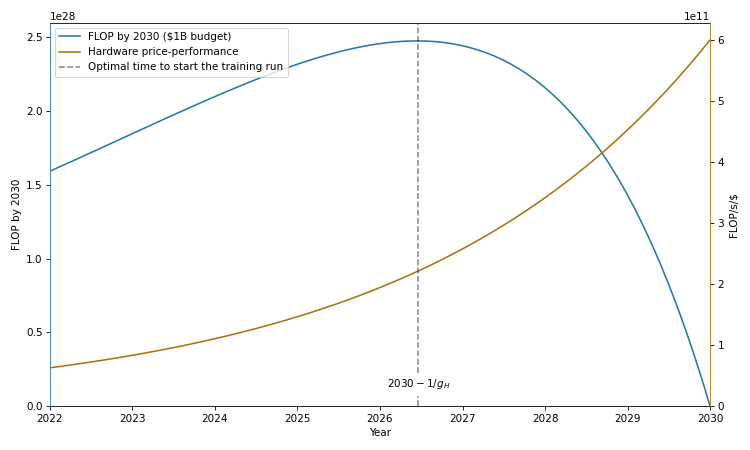

We investigate the scalability of AI training runs. We identify electric power, chip manufacturing, data and latency as constraints. We conclude that 2e29 FLOP training runs will likely be feasible by 2030.

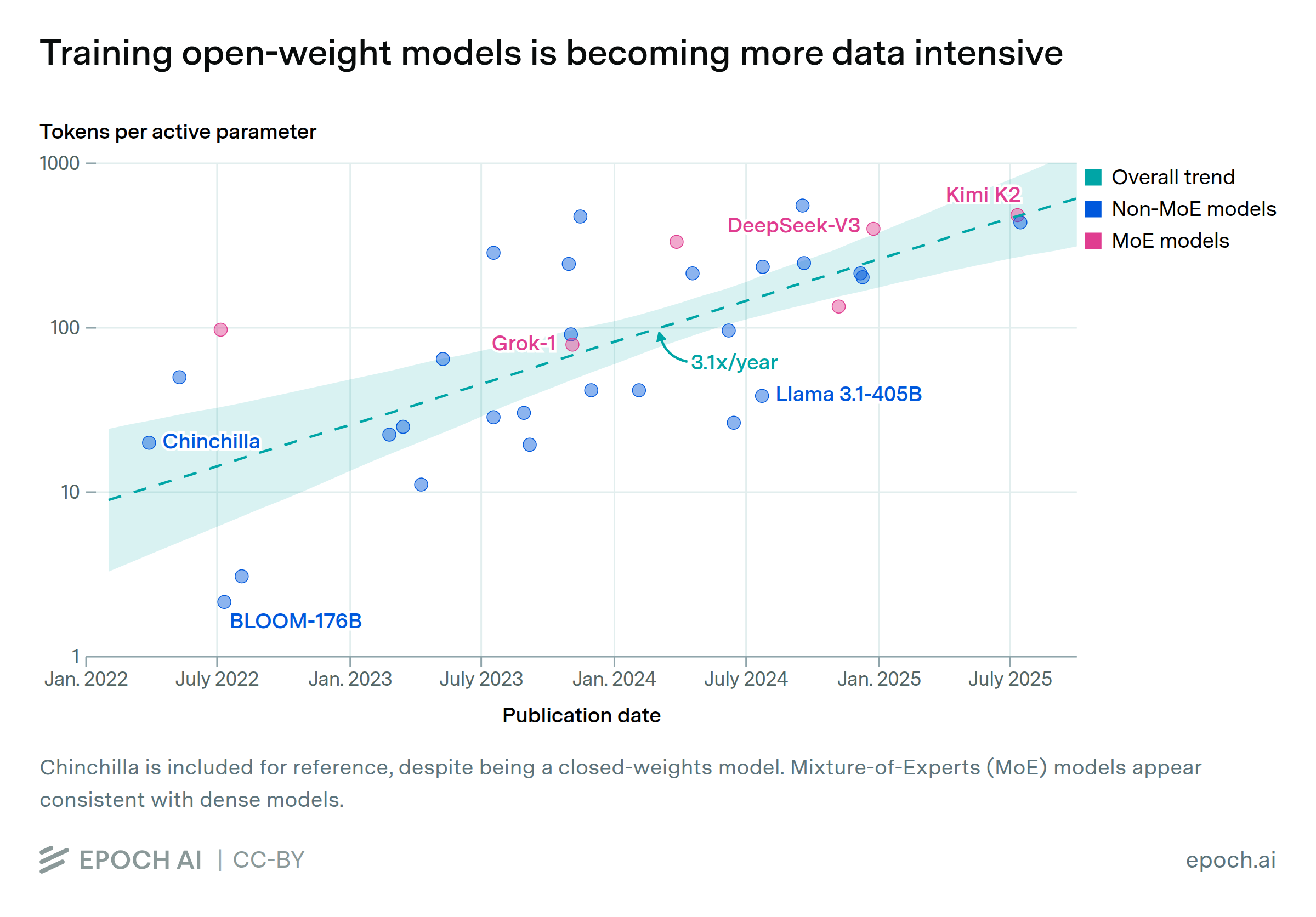

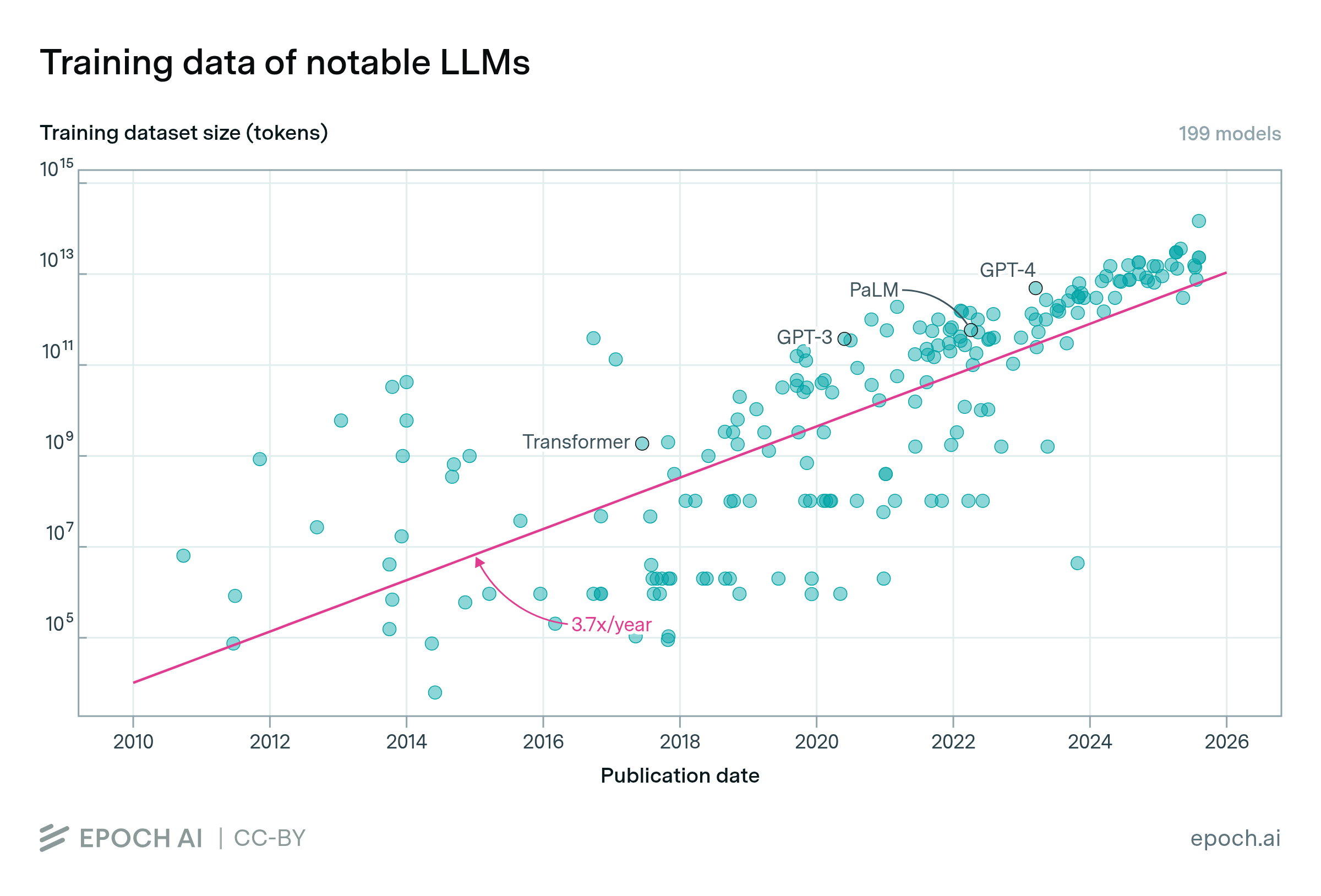

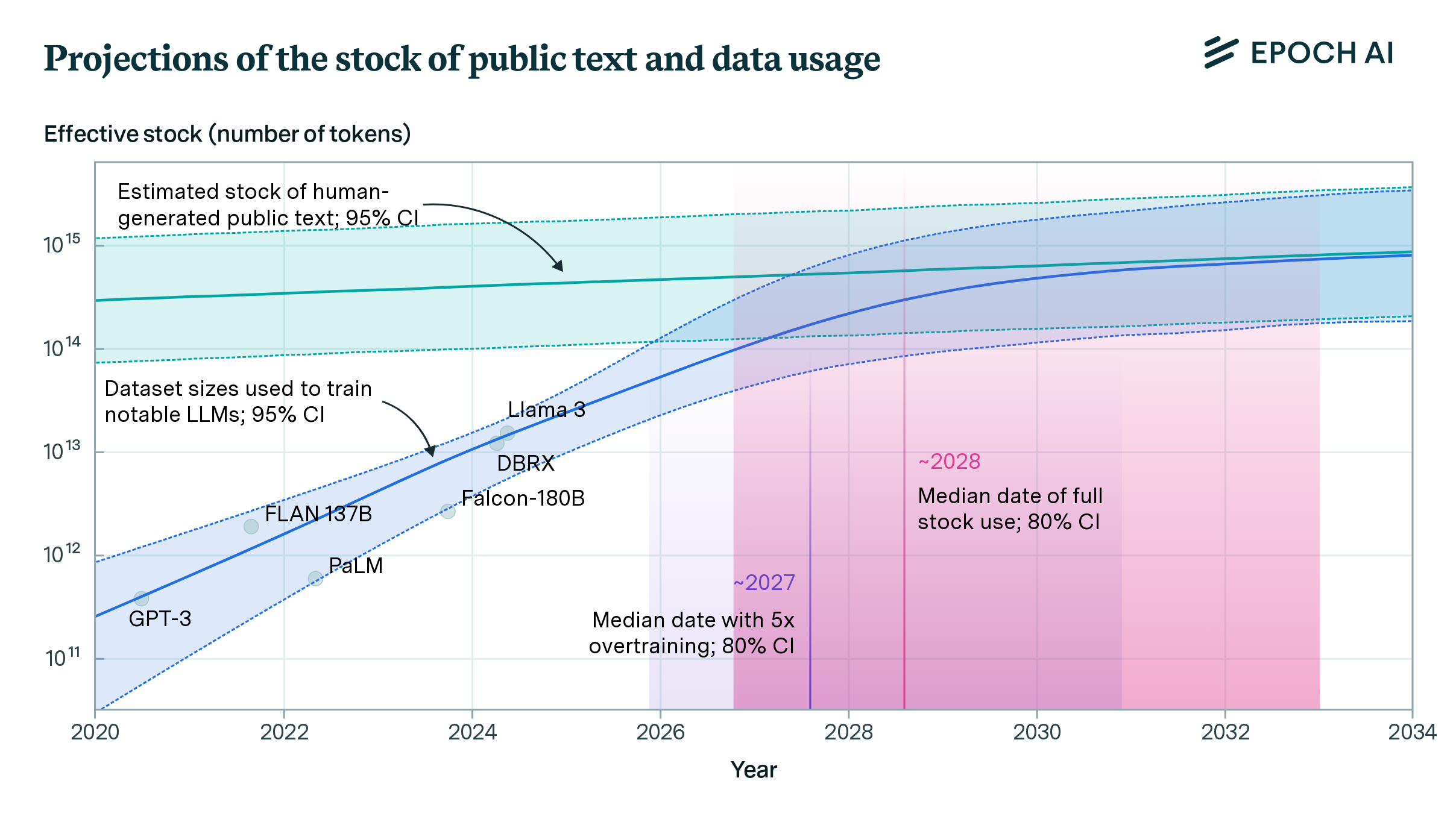

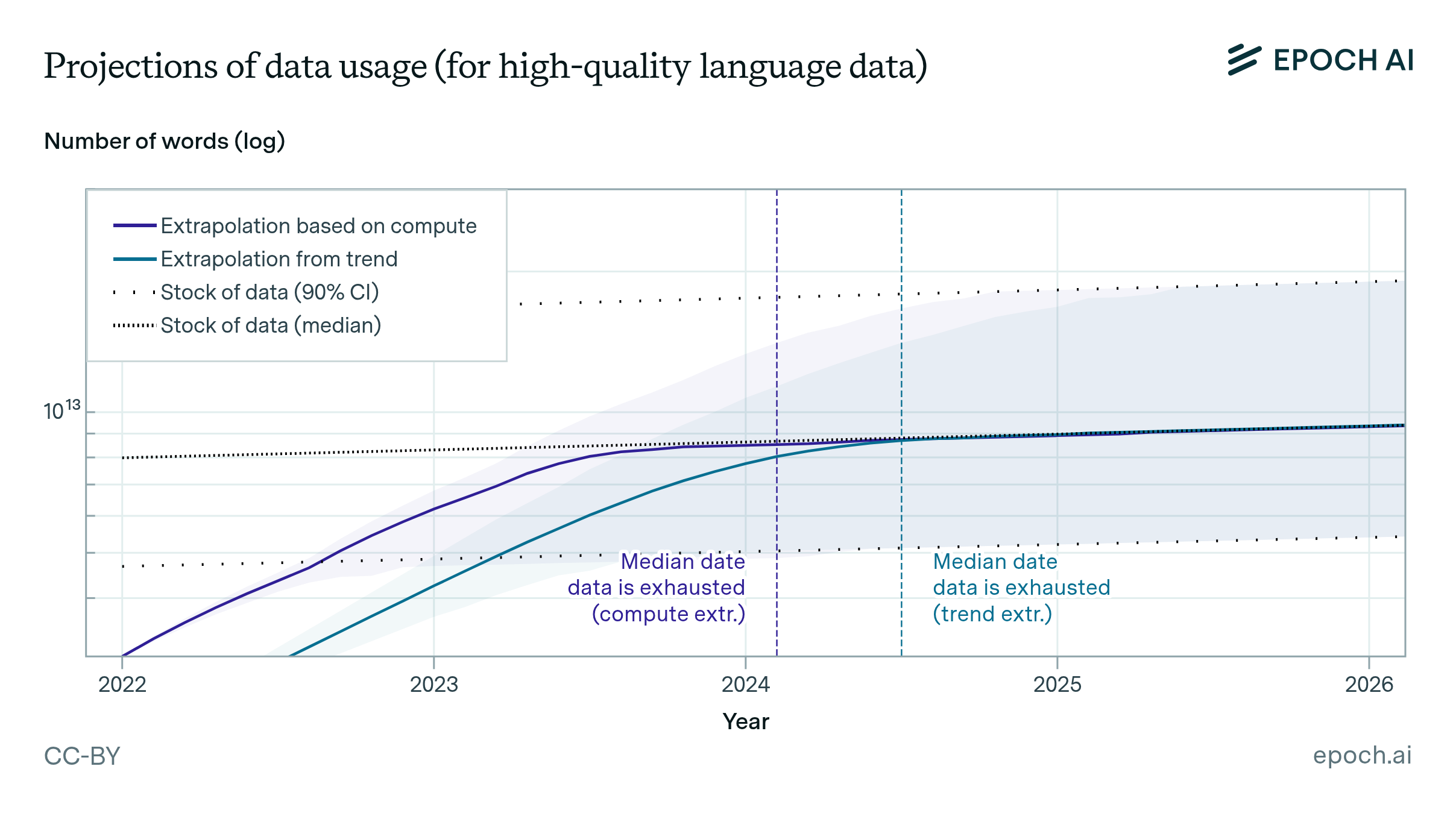

We estimate the effective stock of quality- and repetition-adjusted human-generated public text for AI training at around 300 trillion tokens. If trends continue, language models will fully utilize this stock between 2026 and 2032, or even earlier if intensely overtrained.

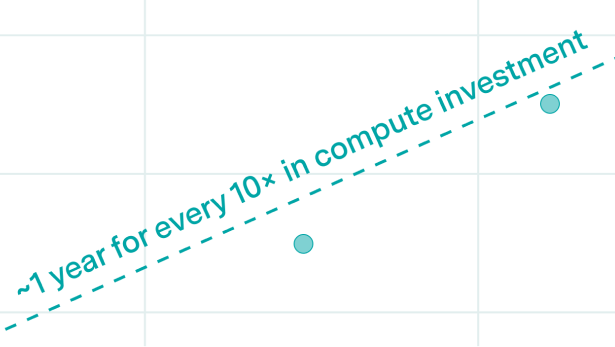

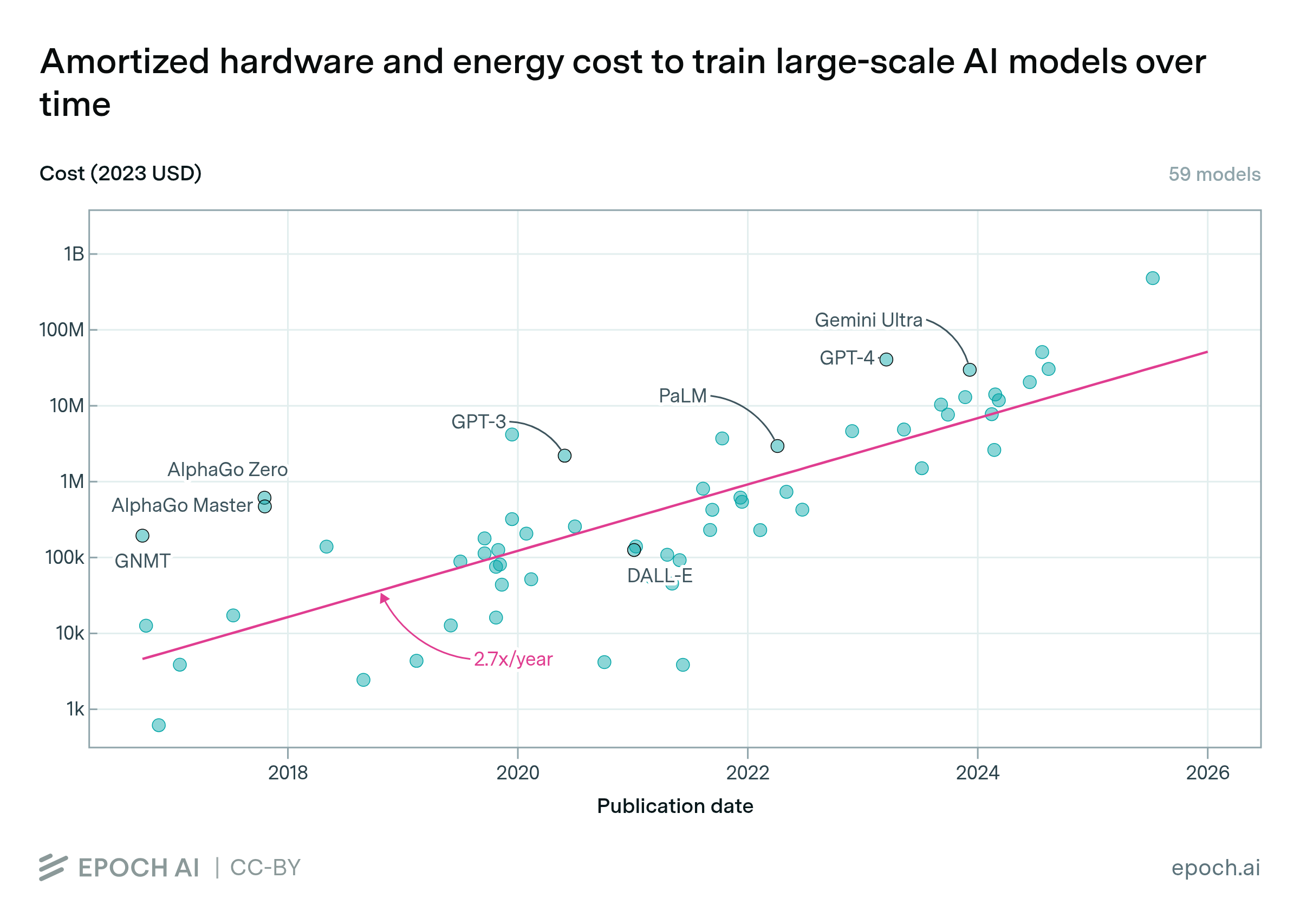

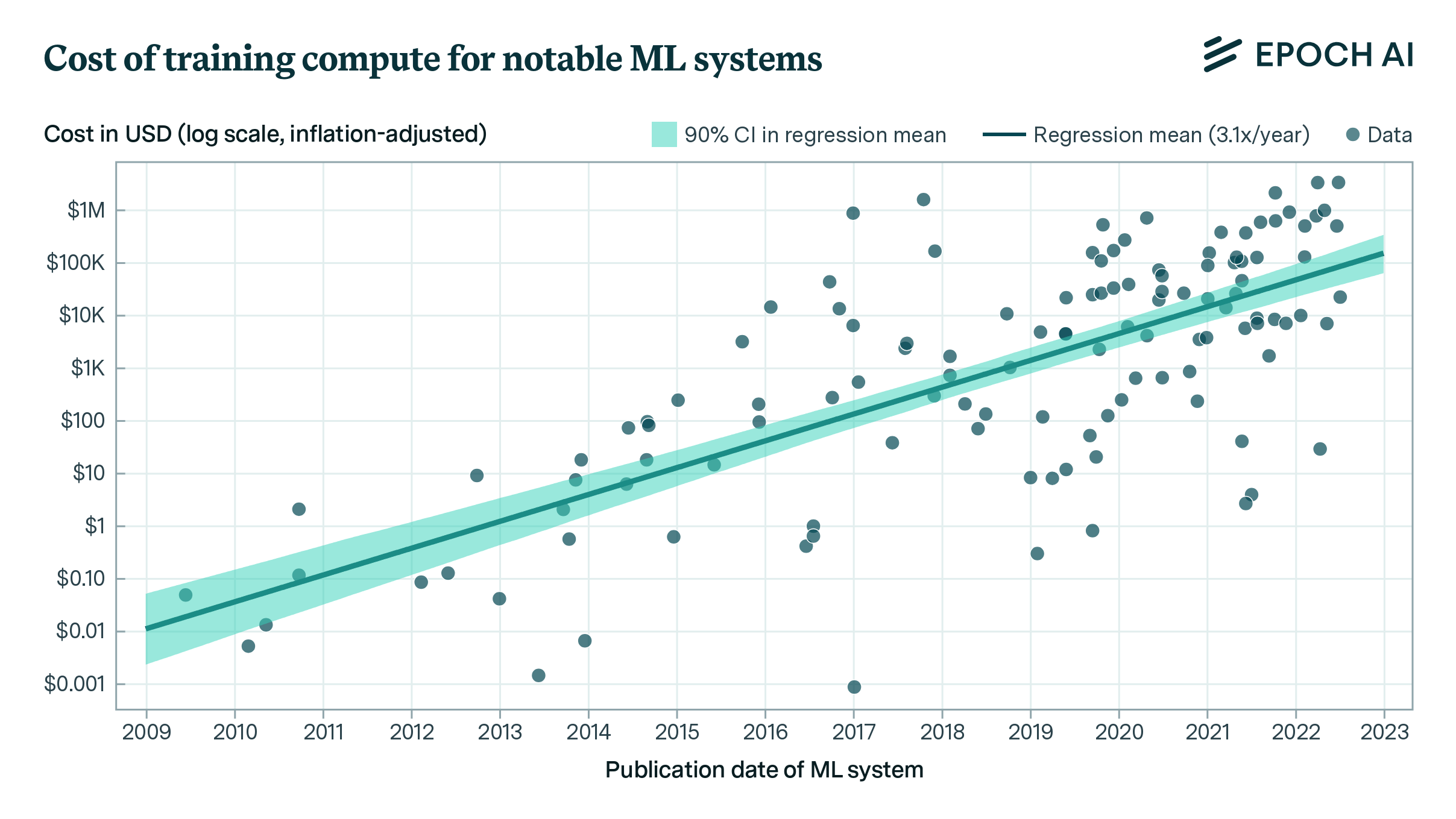

The cost of training frontier AI models has grown by 2-3x per year for the past eight years, suggesting that the largest models will cost over a billion dollars by 2027.
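To get a feel for how quickly growth of 2-3x per year compounds over eight years, here is a small arithmetic sketch. It uses only the growth range and time span stated above; the function name `cumulative_growth` is our own, and no dollar baseline is assumed.

```python
def cumulative_growth(annual_factor: float, years: int) -> float:
    """Total multiplicative growth after compounding `annual_factor` for `years` years."""
    return annual_factor ** years


# Lower and upper ends of the stated 2-3x per year range, over eight years.
low = cumulative_growth(2.0, 8)
high = cumulative_growth(3.0, 8)
print(f"Over 8 years: {low:,.0f}x to {high:,.0f}x")
```

Under these inputs the cumulative increase spans more than an order of magnitude between the two ends of the range, which is why small differences in the annual rate matter for cost projections.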

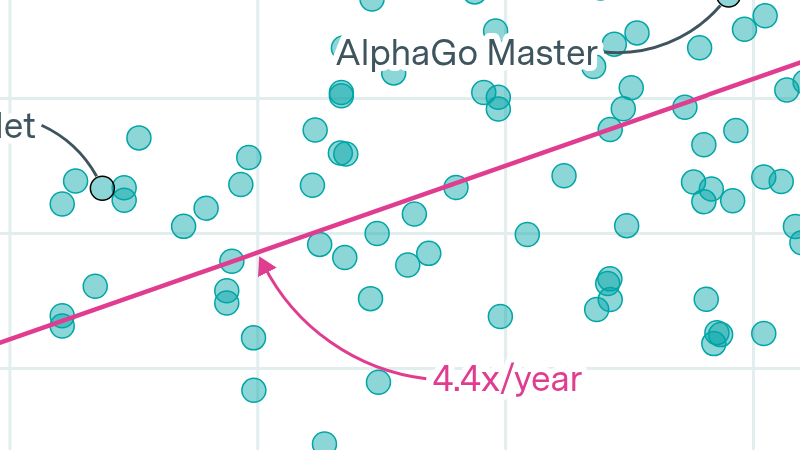

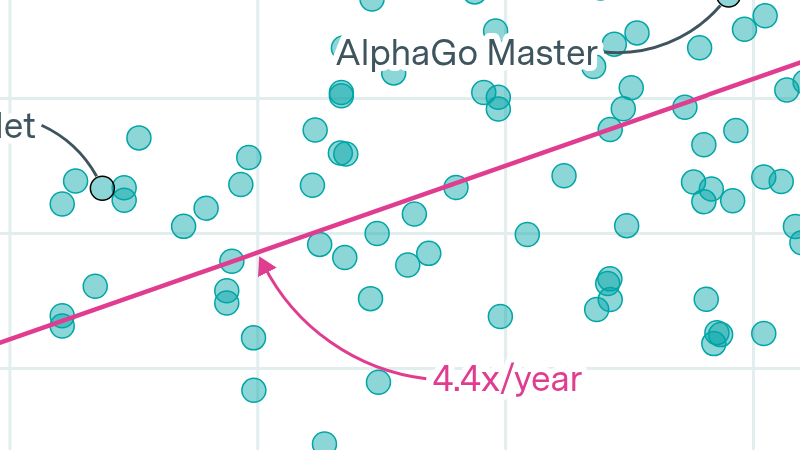

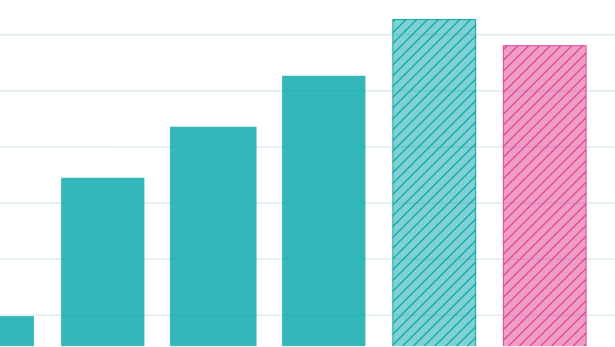

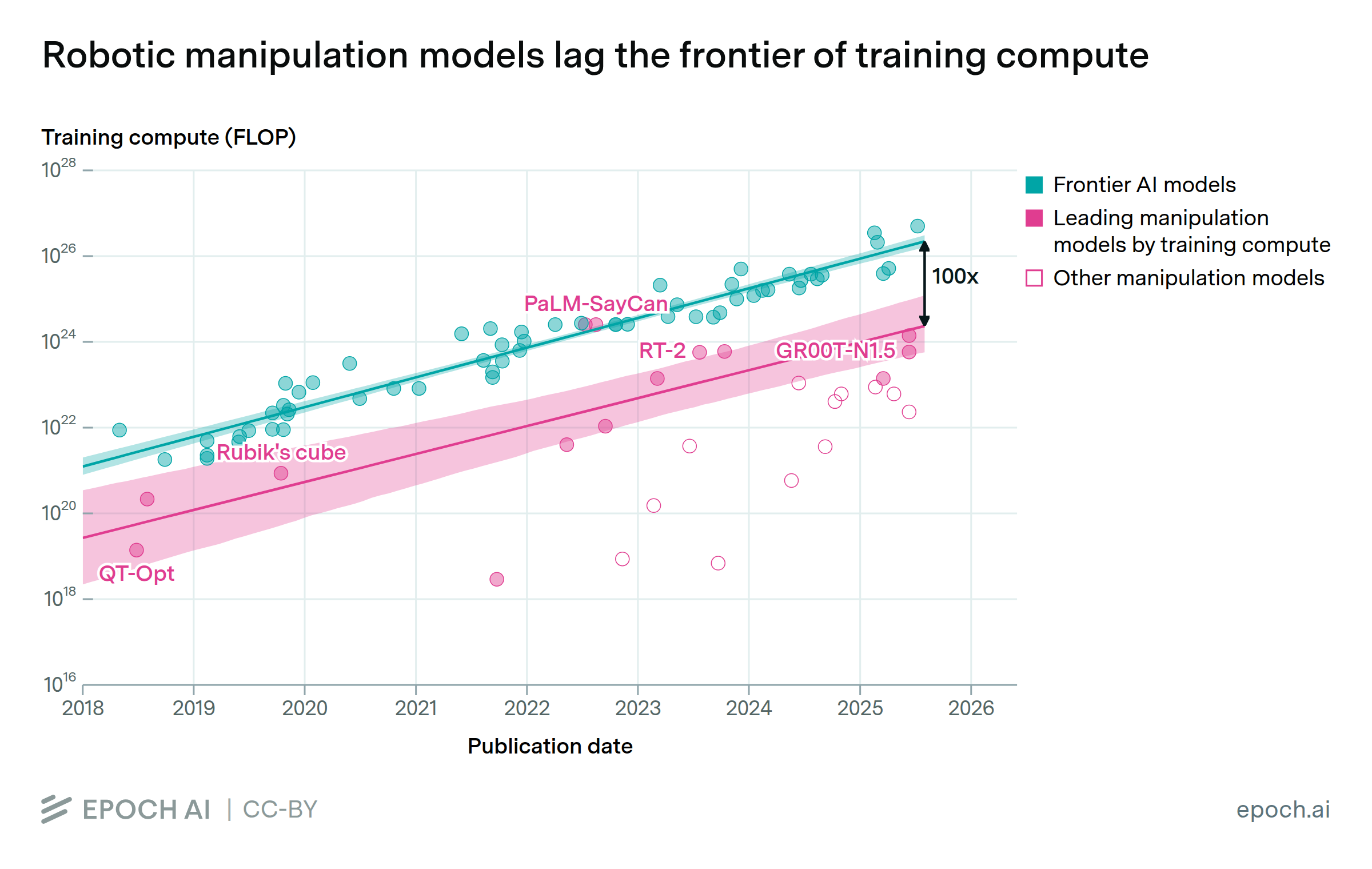

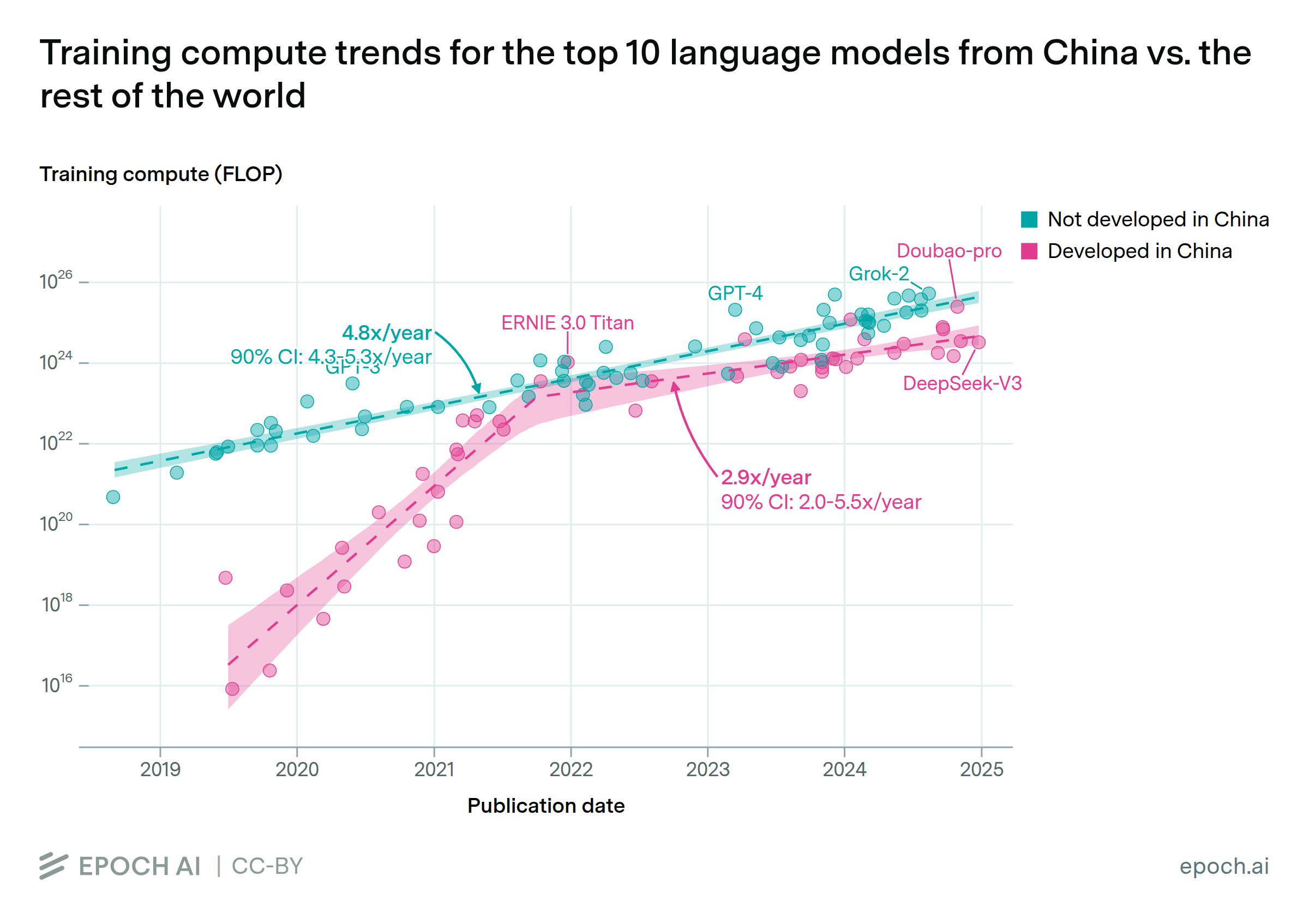

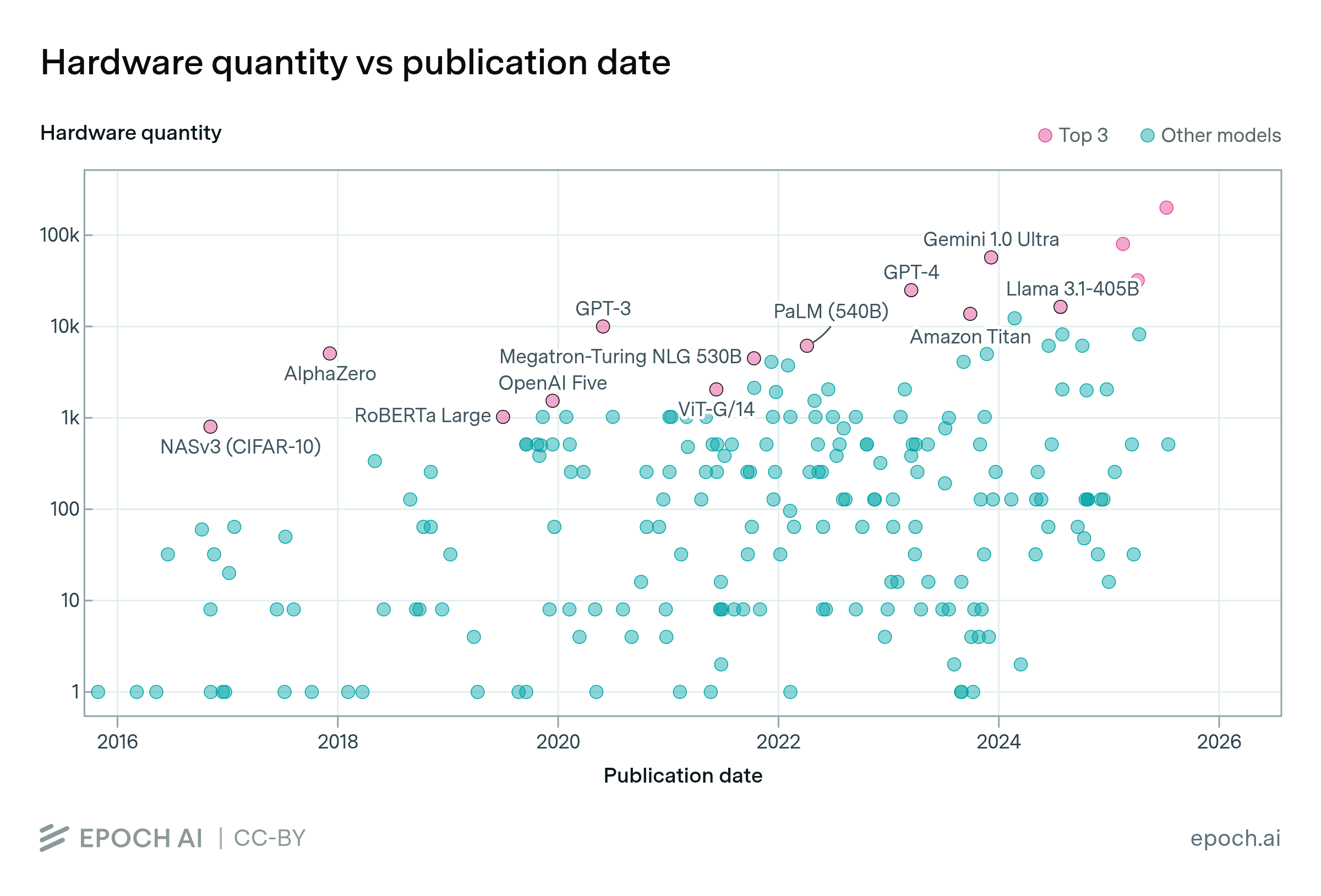

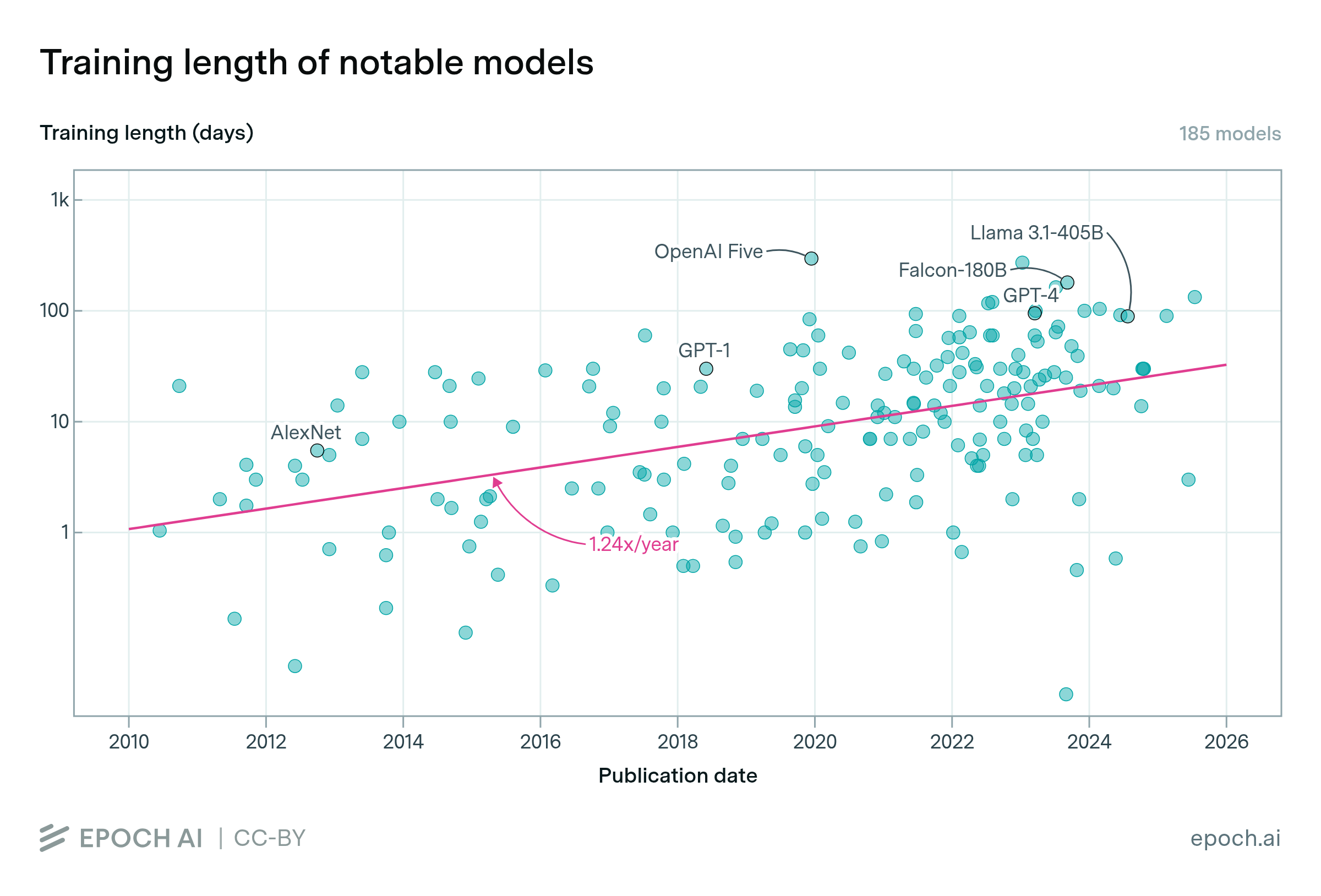

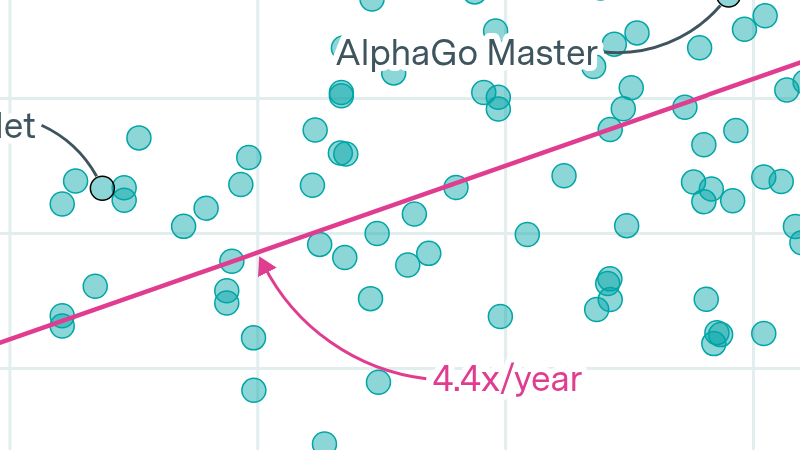

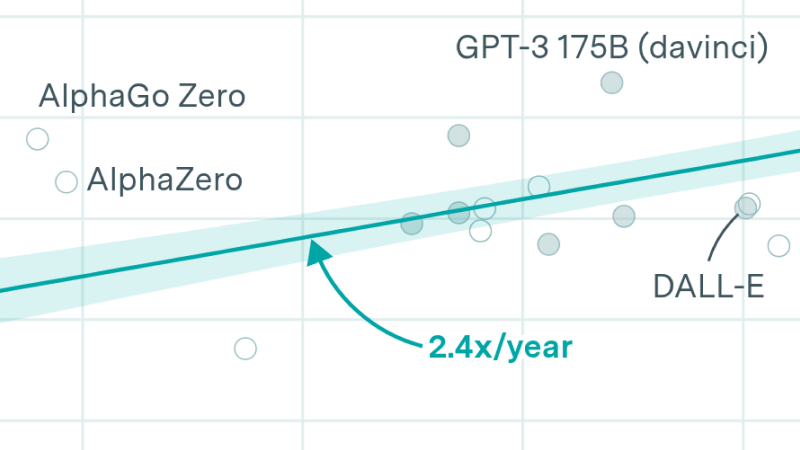

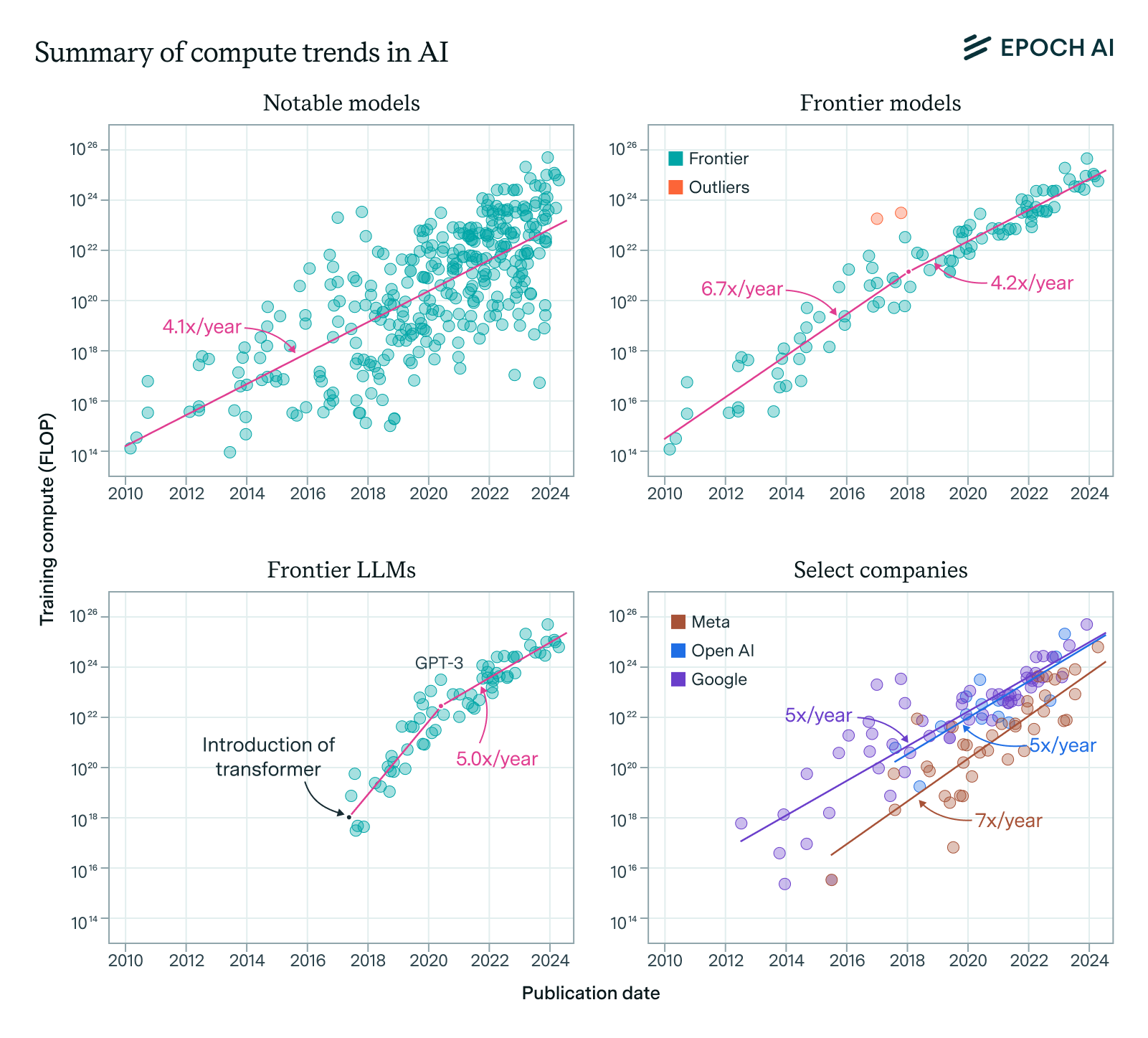

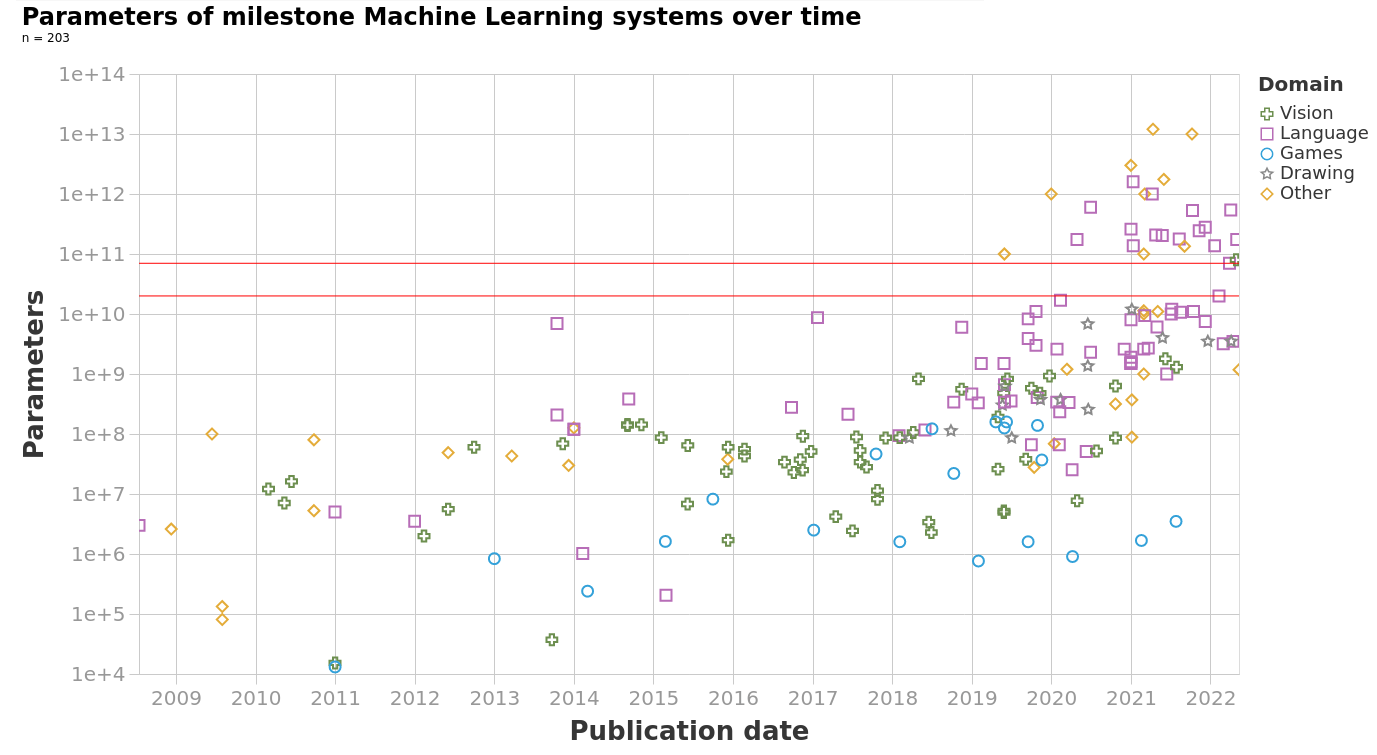

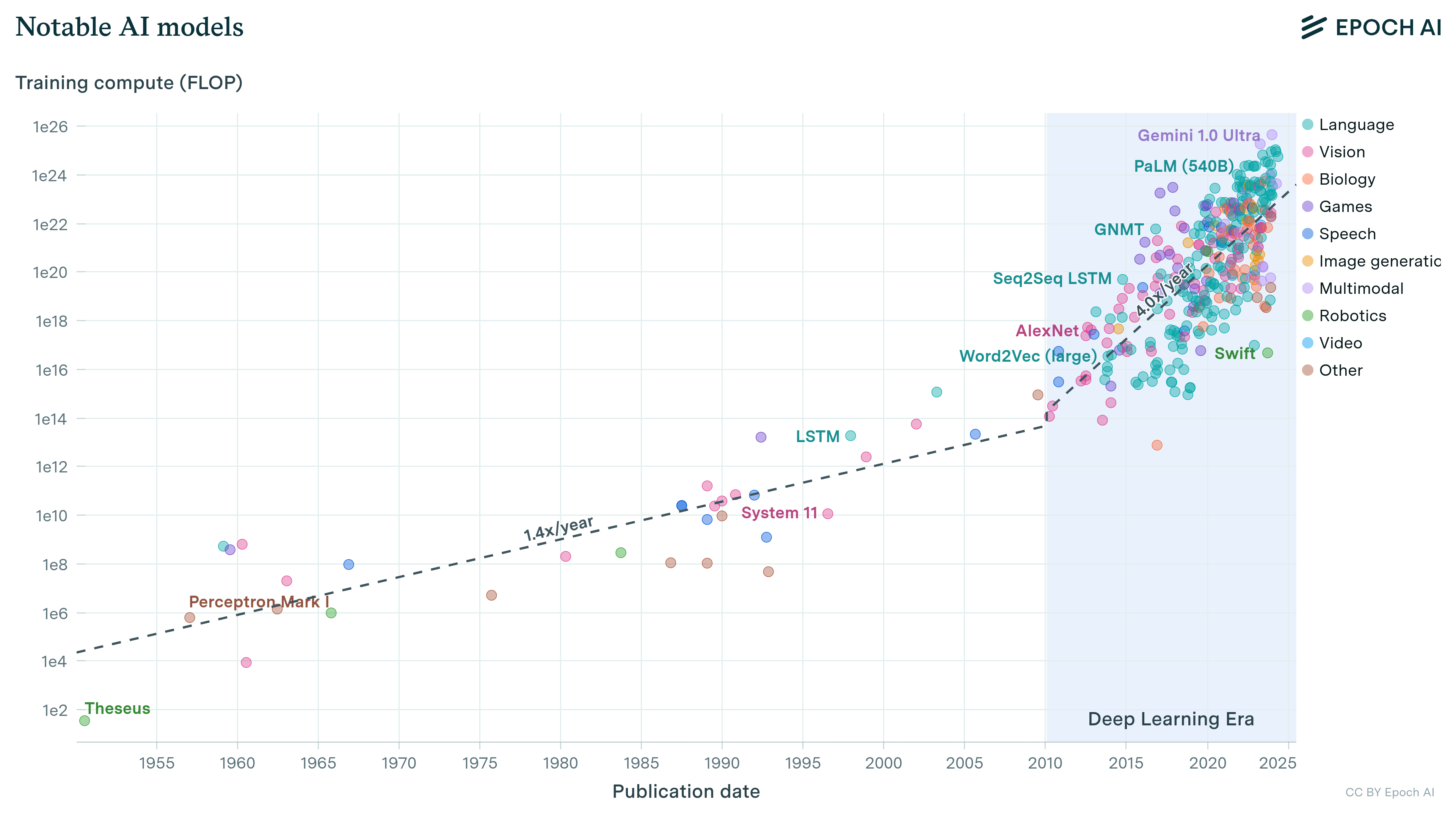

Our expanded AI model database shows that the compute used to train recent models grew 4-5x yearly from 2010 to May 2024. We find similar growth in frontier models, recent large language models, and models from leading companies.
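An annual growth factor can be restated as a doubling time, which is often easier to compare across trends. The sketch below does that conversion for the 4-5x range quoted above; `doubling_time_months` is a hypothetical helper name, and steady exponential growth is assumed.

```python
import math


def doubling_time_months(annual_factor: float) -> float:
    """Months needed to double, assuming steady exponential growth at `annual_factor` per year."""
    return 12 * math.log(2) / math.log(annual_factor)


for factor in (4.0, 5.0):
    months = doubling_time_months(factor)
    print(f"{factor:.0f}x per year -> doubling roughly every {months:.1f} months")
```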

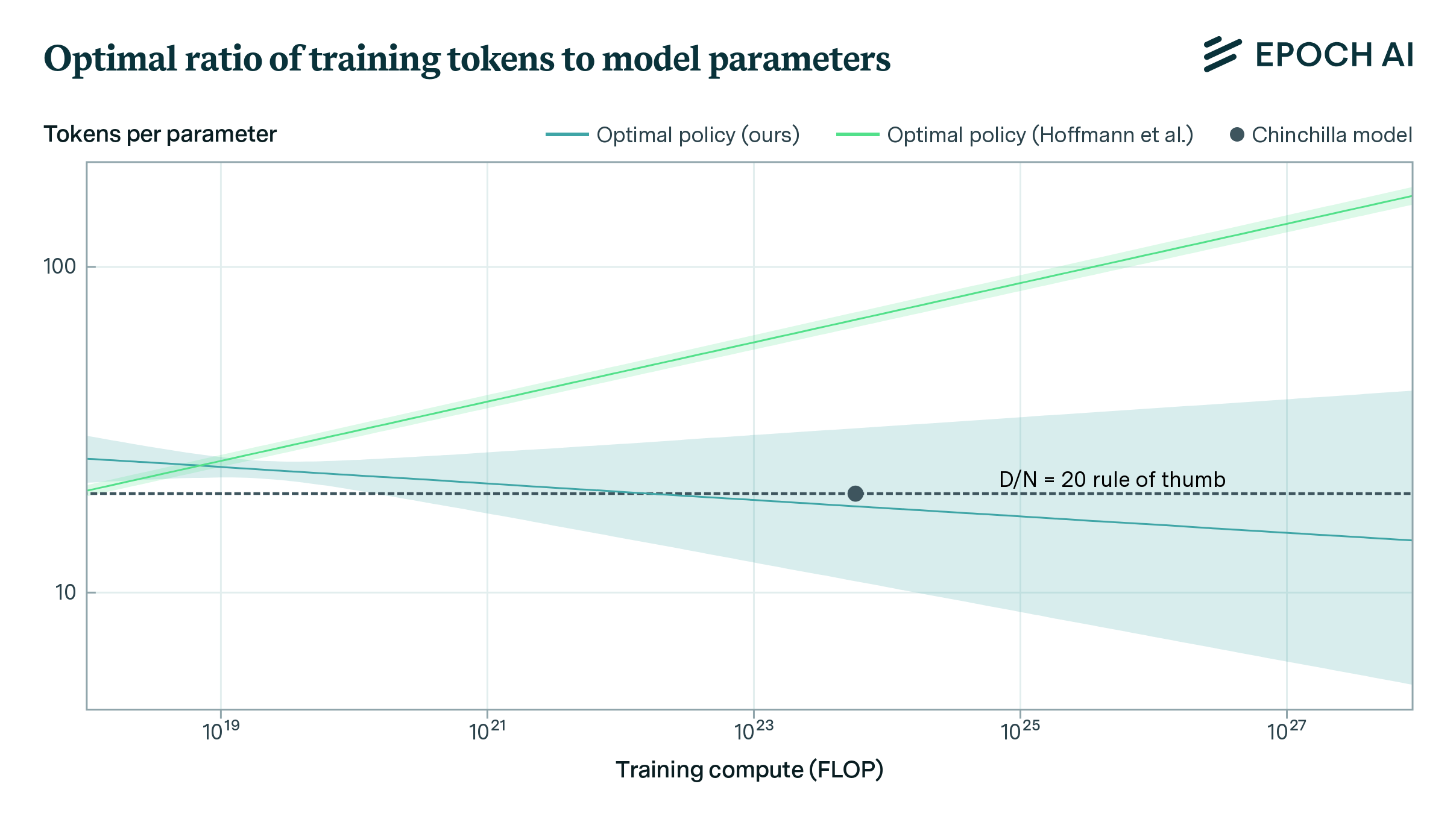

We replicate Hoffmann et al.’s estimation of a parametric scaling law and find issues with their estimates. Our estimates fit the data better and align with Hoffmann’s other approaches.

We present a dataset of 81 large-scale models, from AlphaGo to Gemini, developed across 18 countries, at the leading edge of scale and capabilities.

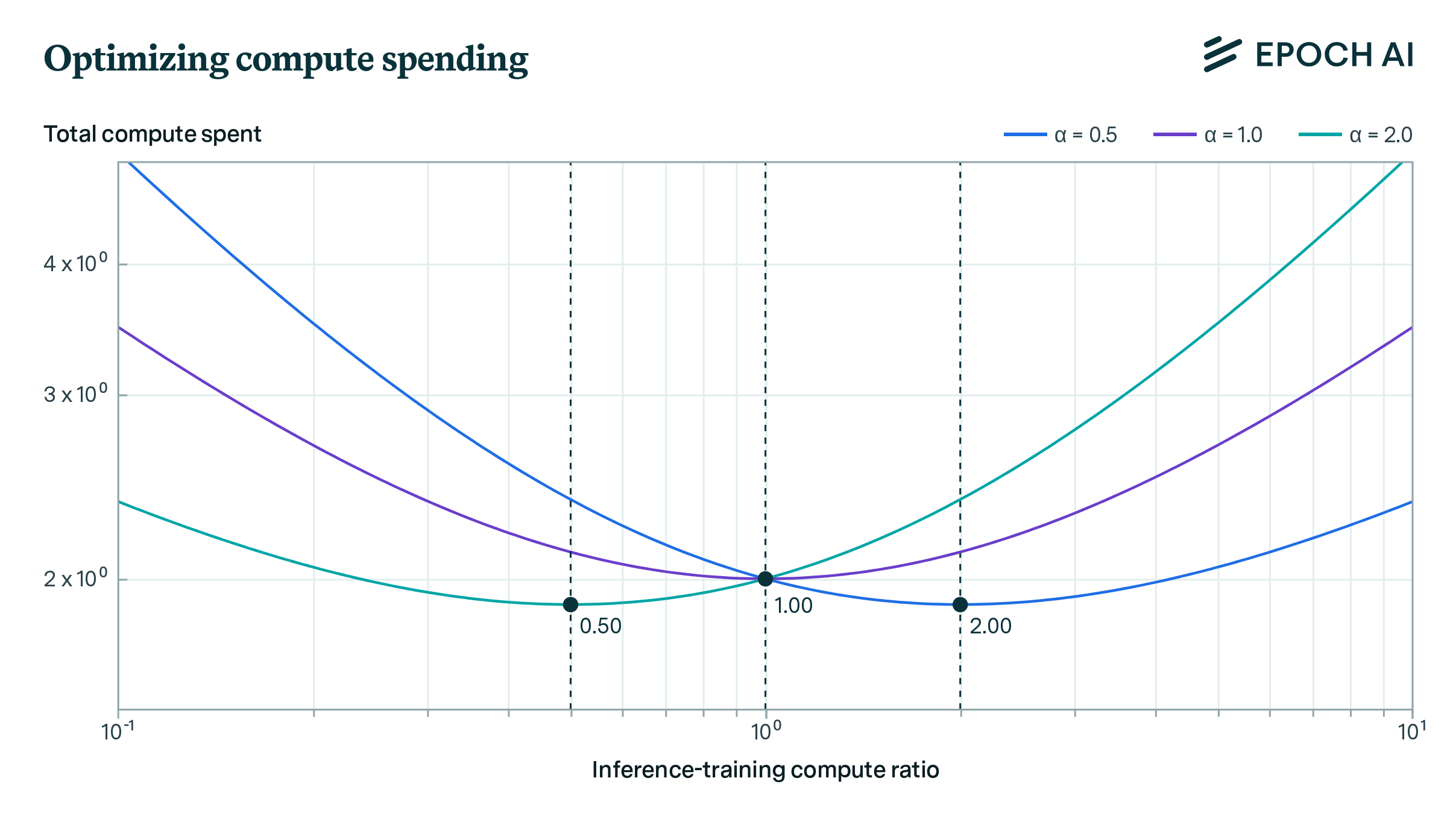

Our analysis indicates that AI labs should spend comparable resources on training and running inference, assuming they can flexibly balance compute between these tasks to maintain model performance.

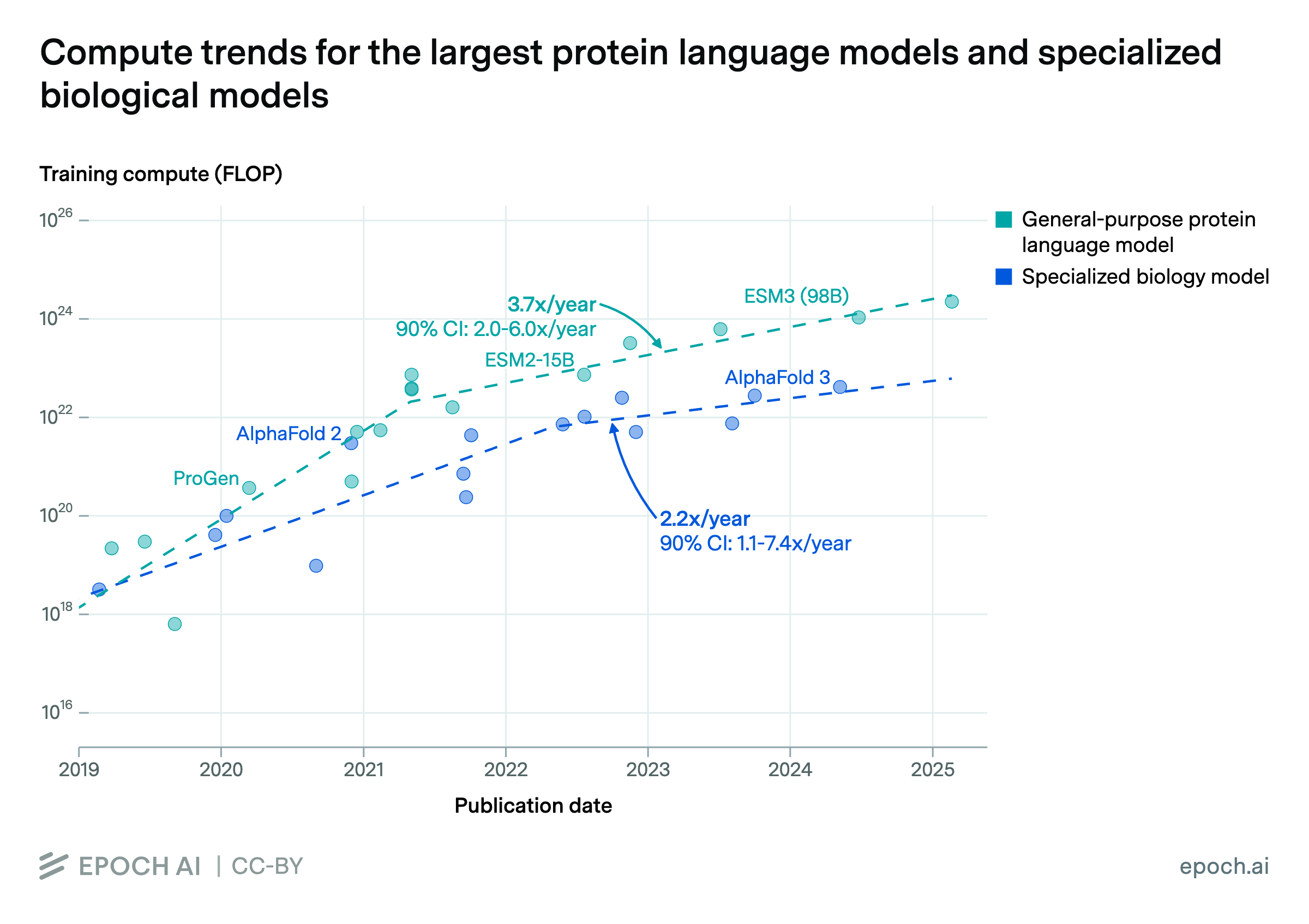

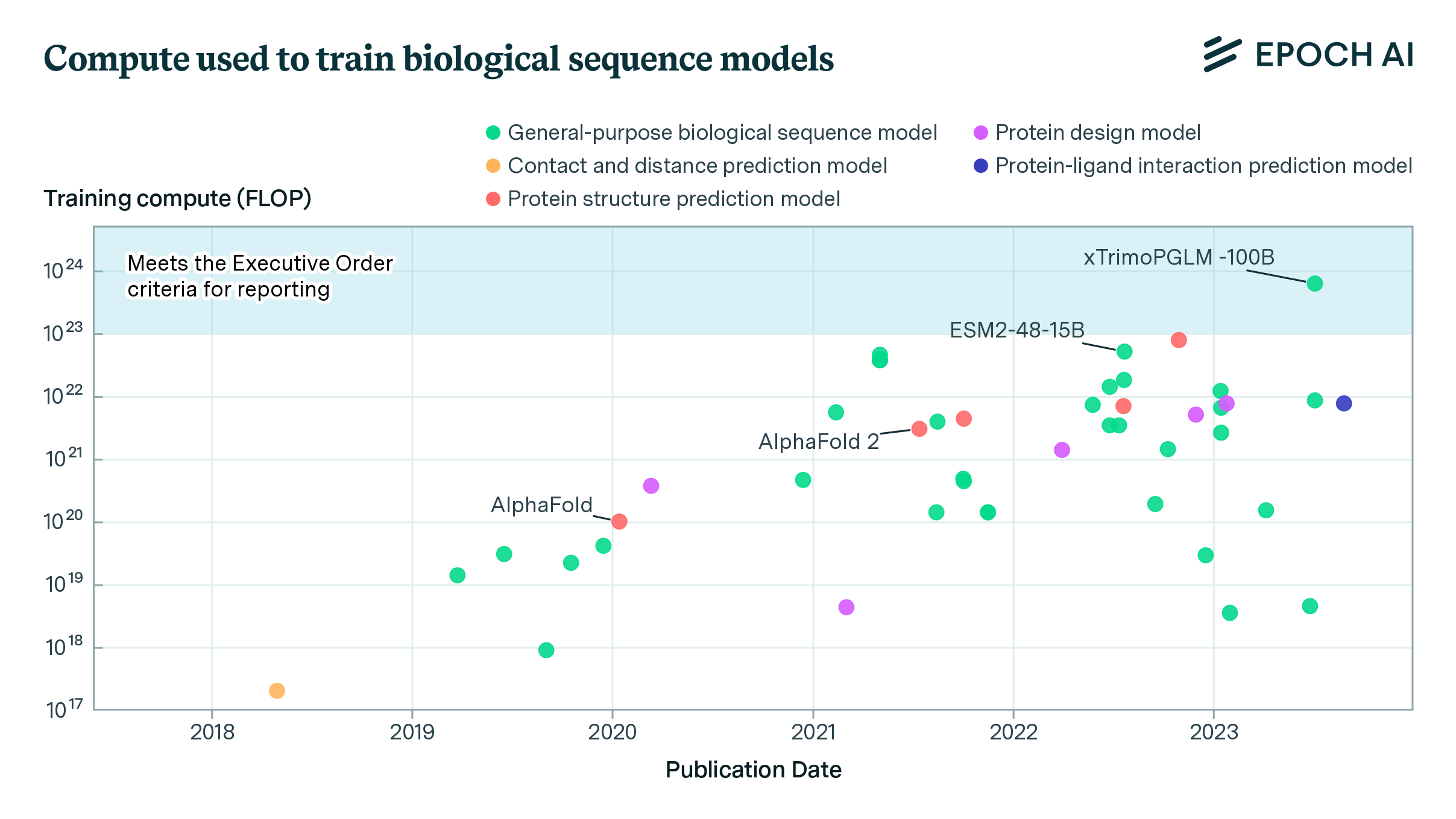

The expanded Epoch database now includes biological sequence models, revealing potential regulatory gaps in the White House’s Executive Order on AI and the growth of the compute used in their training.

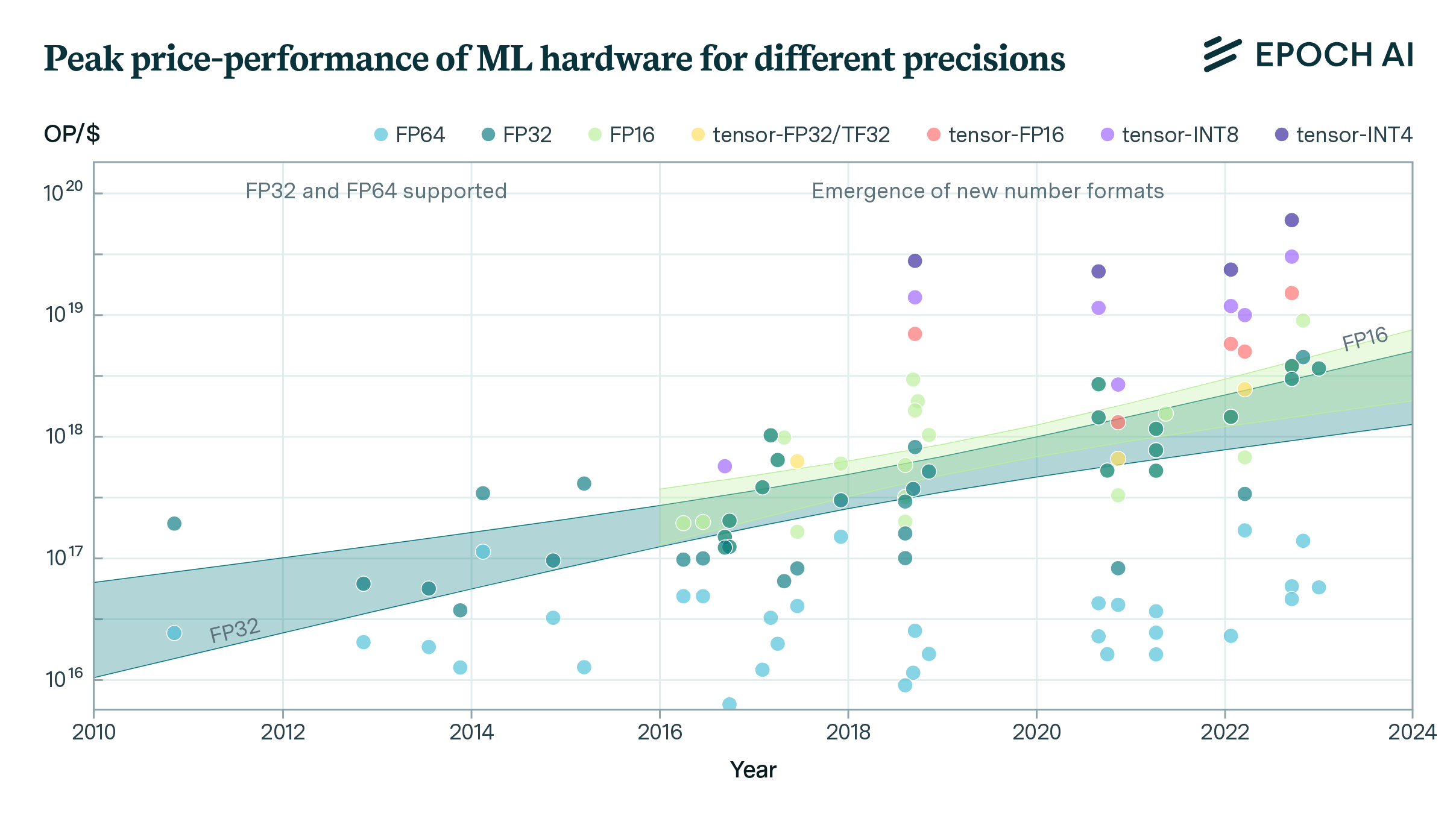

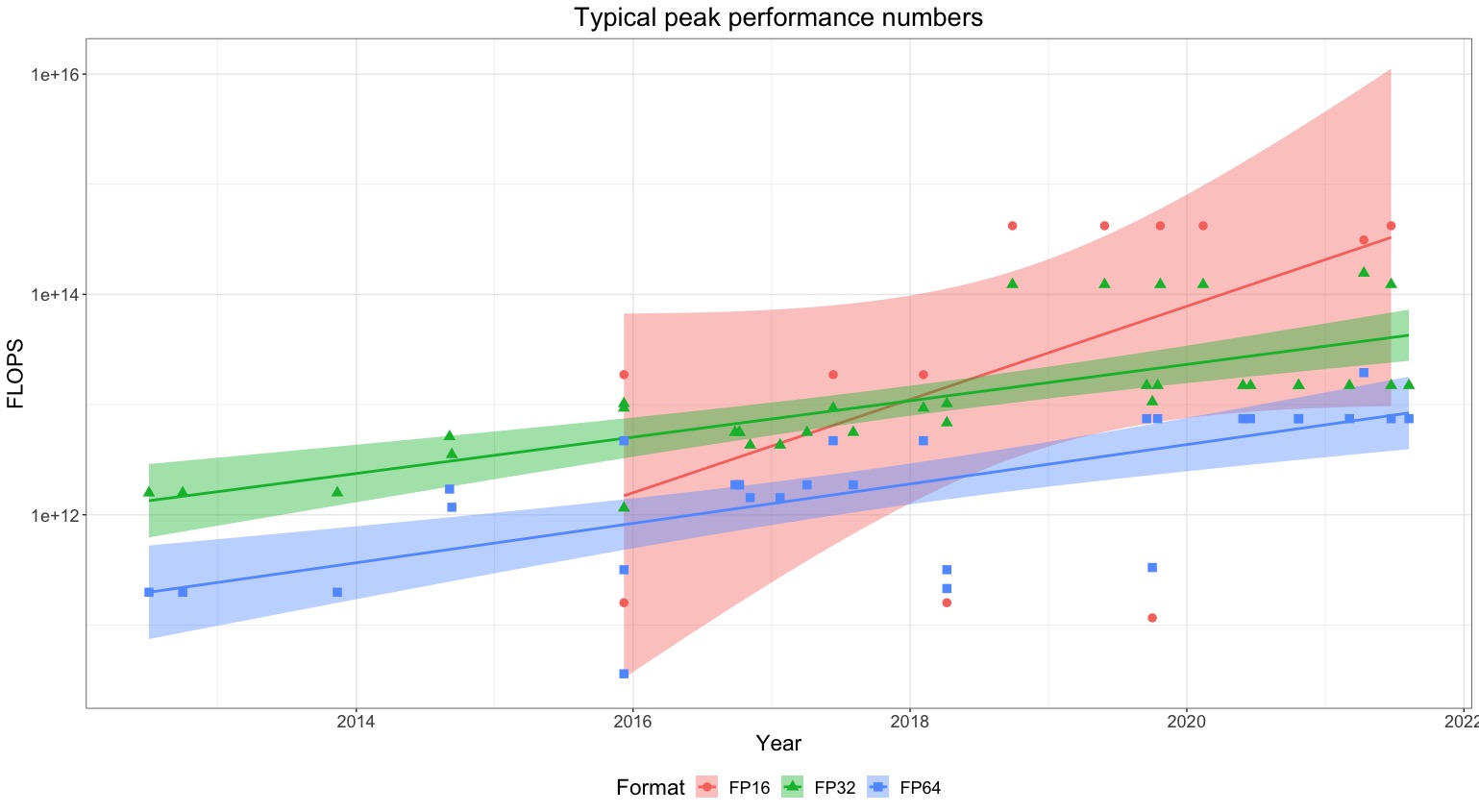

FLOP/s performance in 47 ML hardware accelerators doubled every 2.3 years. Switching from FP32 to tensor-FP16 led to a further 10x performance increase. Memory capacity and bandwidth doubled every 4 years.
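For comparison with trends quoted as yearly multiples, the doubling times above can be converted to annual growth factors. This is a minimal sketch assuming smooth exponential growth; `annual_growth_factor` is our own helper name.

```python
def annual_growth_factor(doubling_time_years: float) -> float:
    """Yearly multiplicative growth implied by a given doubling time."""
    return 2 ** (1 / doubling_time_years)


flops_growth = annual_growth_factor(2.3)   # FLOP/s doubling every 2.3 years
memory_growth = annual_growth_factor(4.0)  # memory capacity and bandwidth doubling every 4 years

print(f"FLOP/s: about {(flops_growth - 1) * 100:.0f}% per year")
print(f"Memory: about {(memory_growth - 1) * 100:.0f}% per year")
```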

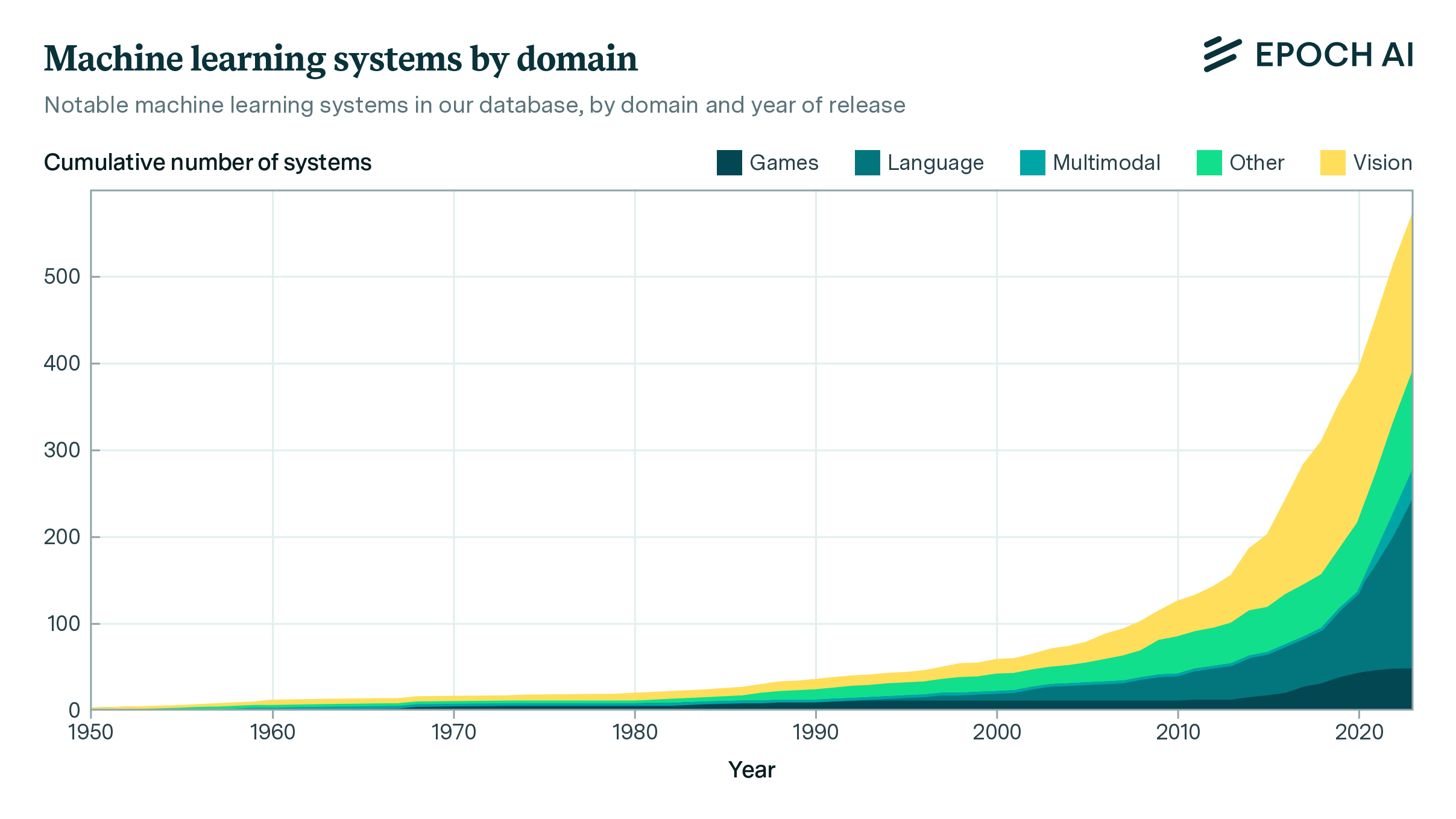

Our expanded database, which tracks the parameters, datasets, training compute, and other details of notable machine learning systems, now spans over 700 notable machine learning models.

Our new article examines why we might (or might not) expect growth rates roughly ten times those common in today’s frontier economies once advanced AI systems are widely deployed.

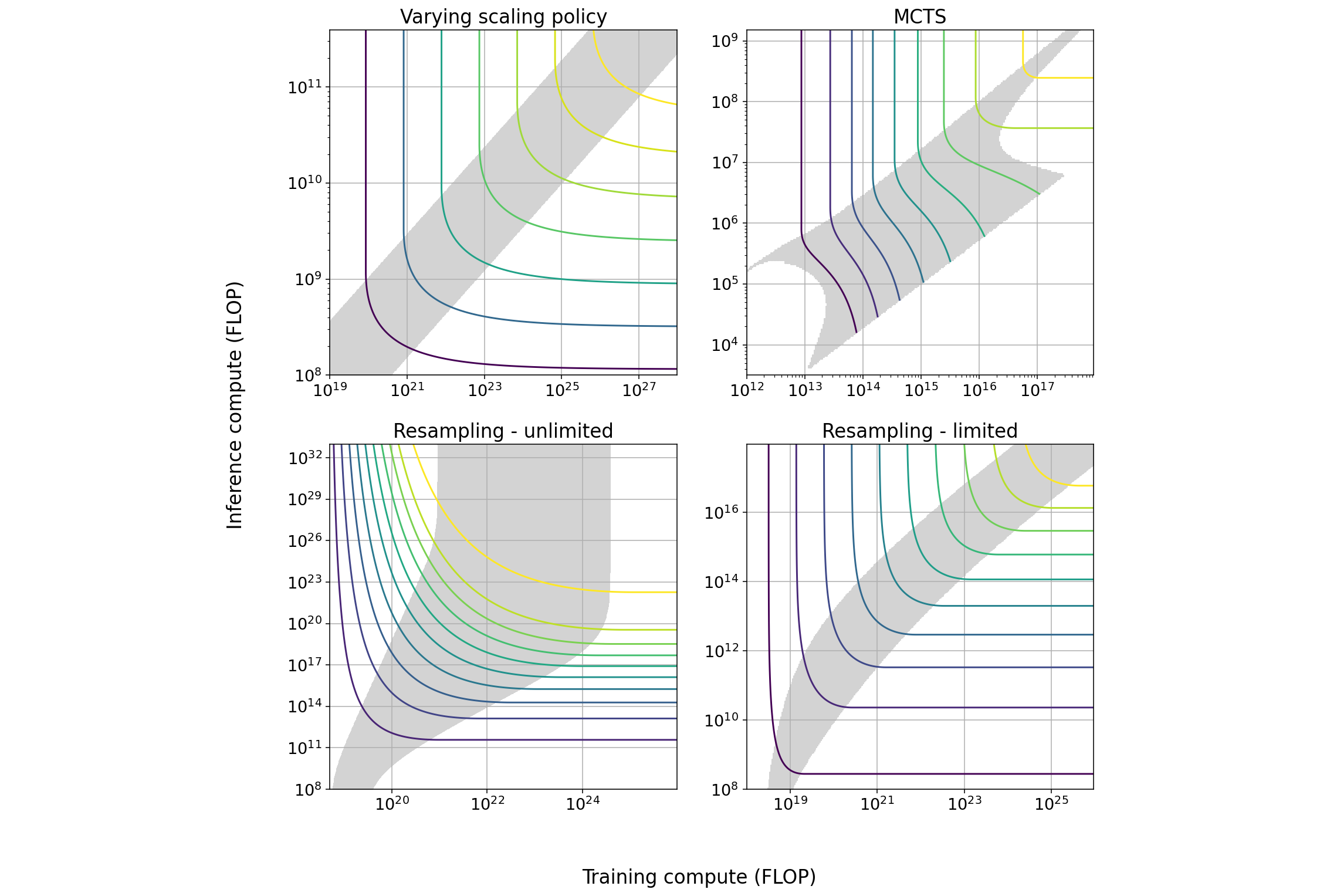

We explore several techniques that induce a tradeoff between spending more resources on training or on inference and characterize the properties of this tradeoff. We outline some implications for AI governance.

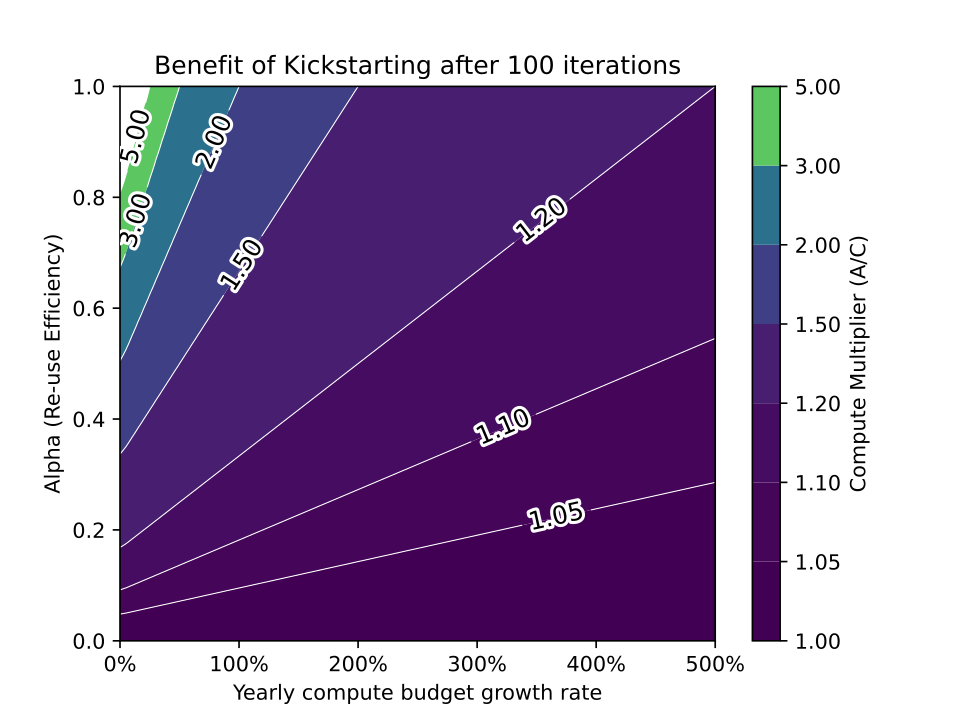

While reusing pretrained models often saves training costs on large training runs, it is unlikely that model recycling will result in more than a modest increase in AI capabilities.

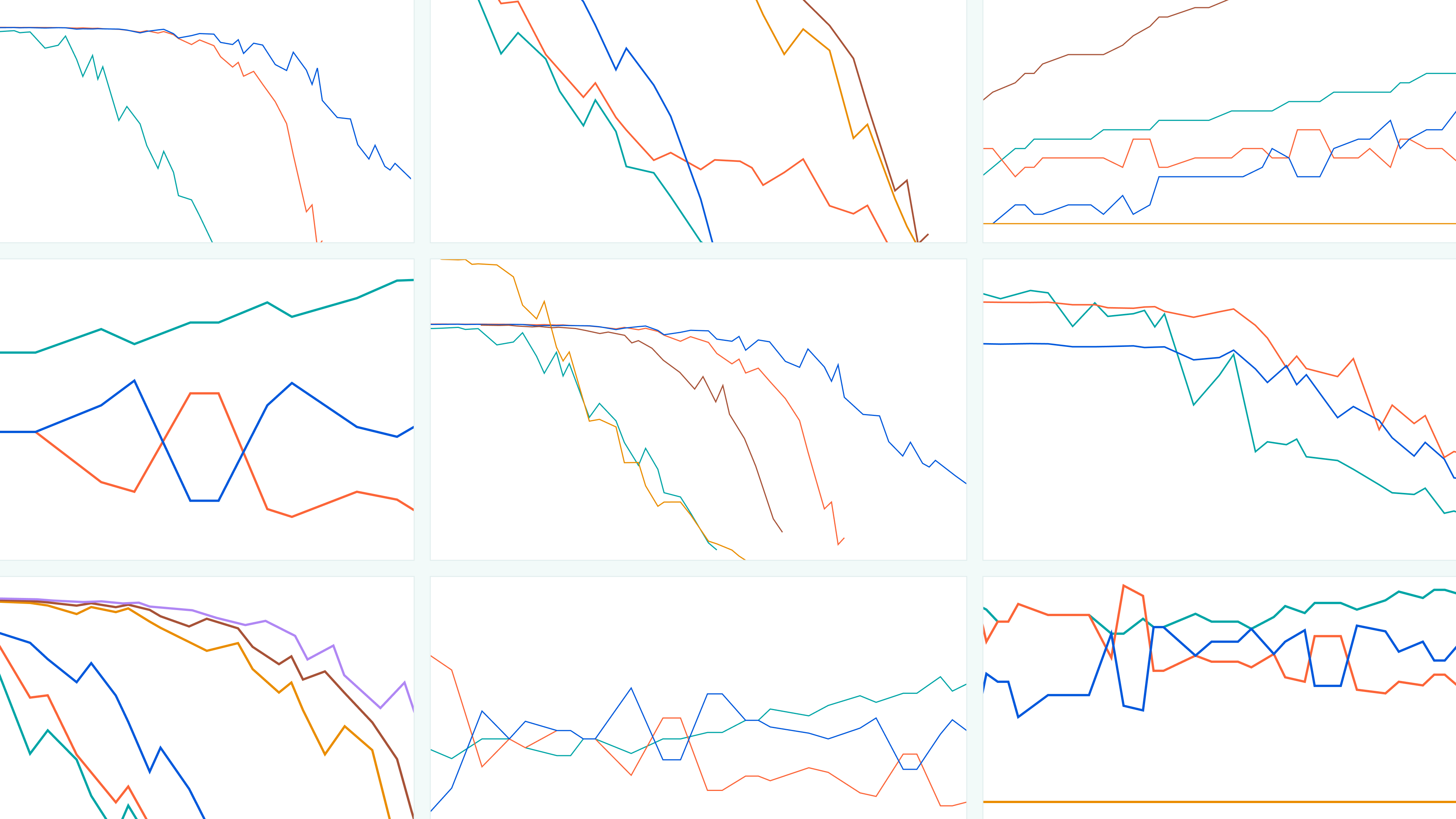

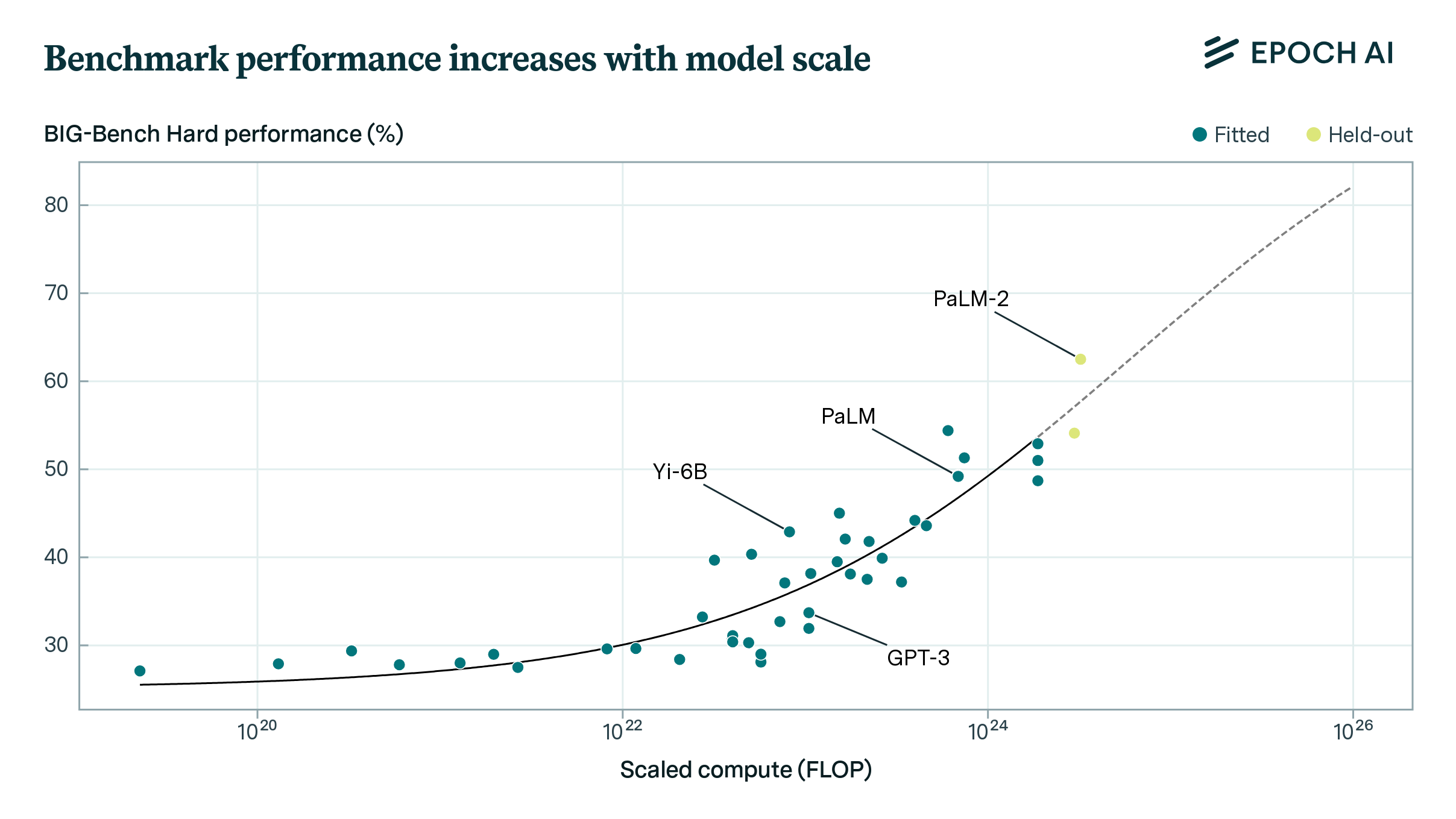

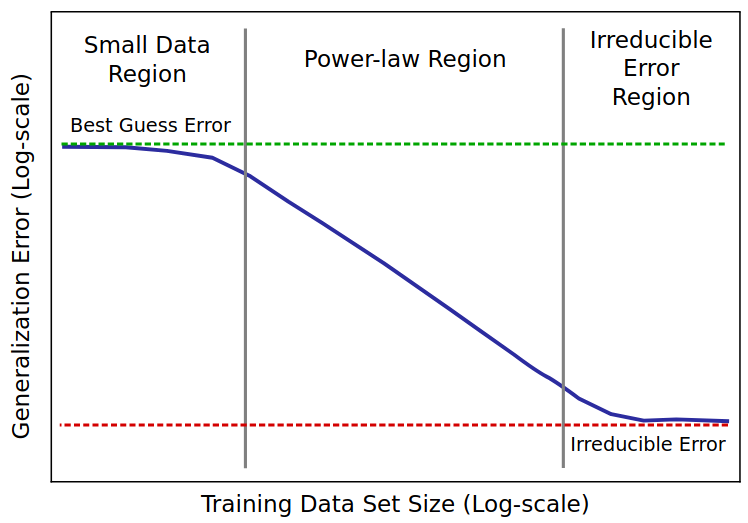

We investigate large language model performance across five orders of magnitude of compute scaling, finding that compute-focused extrapolations are a promising way to forecast AI capabilities.

AI’s potential to automate labor is likely to alter the course of human history within decades, with the availability of compute being the most important factor driving rapid progress in AI capabilities.

Compute is essential for AI performance, but researchers often fail to report it. Adopting reporting norms would support research, enhance forecasts of AI’s impacts and developments, and assist policymakers.

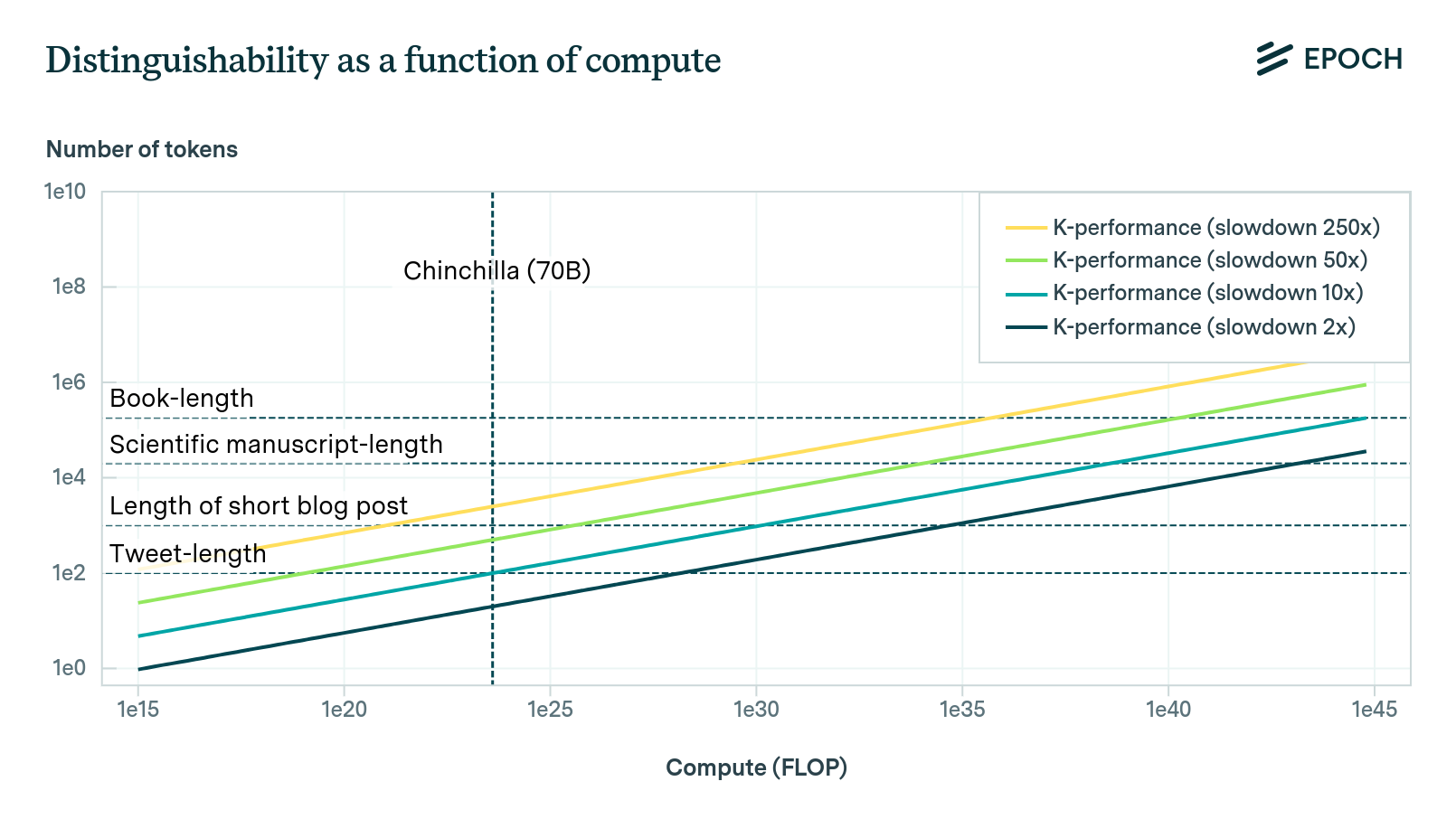

Empirical scaling laws can help predict the cross-entropy loss associated with training inputs, such as compute and data. However, in order to predict when AI will achieve some subjective level of performance, it is necessary to devise a way of interpreting the cross-entropy loss of a model. This blog post provides a discussion of one such theoretical method, which we call the Direct Approach.

I combine training compute and GPU price-performance data to estimate the cost of compute in US dollars for the final training run of 124 machine learning systems published between 2009 and 2022, and find that the cost has grown by approximately 0.5 orders of magnitude per year.

I have collected a database of scaling laws for different tasks and architectures, and reviewed dozens of papers in the scaling law literature.

We have developed an interactive website showcasing a new model of AI takeoff speeds.

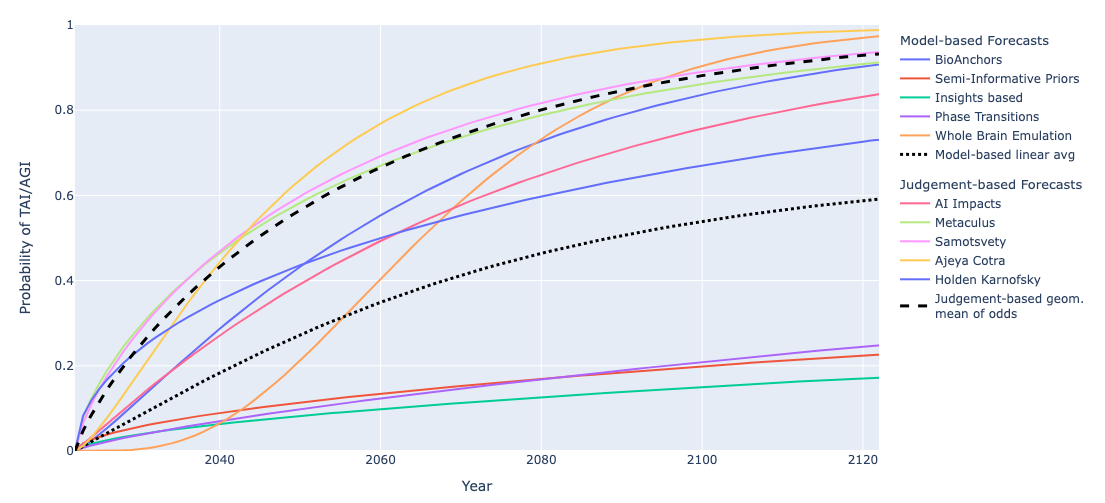

We summarize and compare several models and forecasts predicting when transformative AI will be developed.

Based on our previous analysis of trends in dataset size, we project the growth of dataset size in the language and vision domains. We explore the limits of this trend by estimating the total stock of available unlabeled data over the next decades.

We collected a database of notable ML models and their training dataset sizes. We use this database to find historical growth trends in dataset size for different domains, particularly language and vision.

Training runs of large machine learning systems are likely to last less than 14-15 months. This is because longer runs will be outcompeted by runs that start later and therefore use better hardware and better algorithms.
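The trade-off behind this claim can be illustrated with a toy model. This is a simplified sketch under our own assumptions, not the model used in the article: a run must finish by a fixed deadline, it uses hardware and algorithms frozen at its start date, that technology improves exponentially at a rate `growth_rate` per year, and output accumulates linearly with run length. The growth rates below are hypothetical. Under these assumptions the best run length works out to `1 / growth_rate` years, which the grid search confirms numerically.

```python
import math


def effective_compute(run_length: float, growth_rate: float, deadline: float = 5.0) -> float:
    """Relative output of a run that ends at `deadline` and lasts `run_length` years.

    Toy assumption: technology is frozen at the start date and improves
    exponentially at `growth_rate` per year; output grows linearly with duration.
    """
    start = deadline - run_length
    return run_length * math.exp(growth_rate * start)


def best_run_length(growth_rate: float, step: float = 0.001, deadline: float = 5.0) -> float:
    """Grid-search the run length (in years) that maximizes effective compute."""
    candidates = [i * step for i in range(1, int(deadline / step) + 1)]
    return max(candidates, key=lambda length: effective_compute(length, growth_rate, deadline))


for g in (0.5, 1.0):  # hypothetical combined growth rates, per year
    months = best_run_length(g) * 12
    print(f"g = {g}: best length ~ {months:.1f} months (analytic 1/g = {12 / g:.1f} months)")
```

Faster progress shortens the optimal run, because waiting to start on better technology pays off more quickly.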

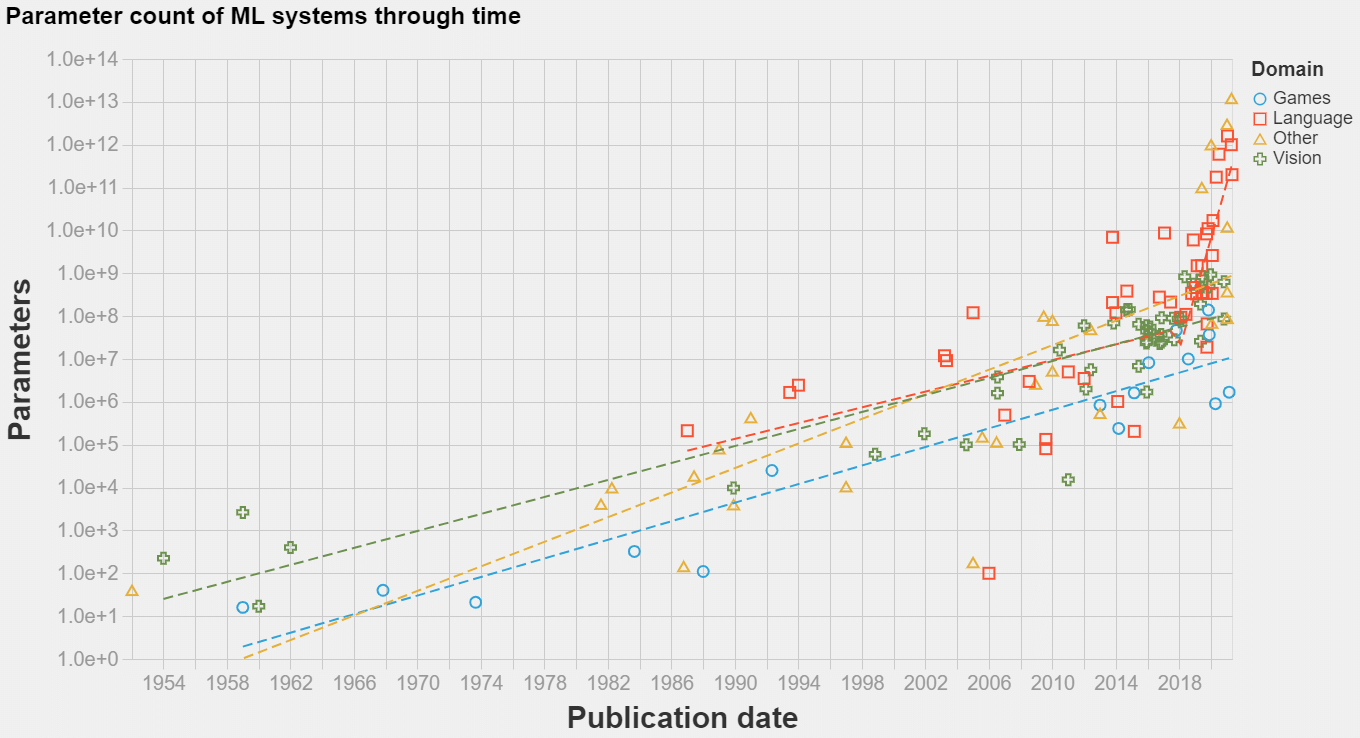

Since 2018, the model size of notable machine learning systems has grown ten times faster than before. Growth after 2020 has not been entirely continuous: there was a jump of one order of magnitude that persists to this day. This is relevant for forecasting model size and thus AI capabilities.

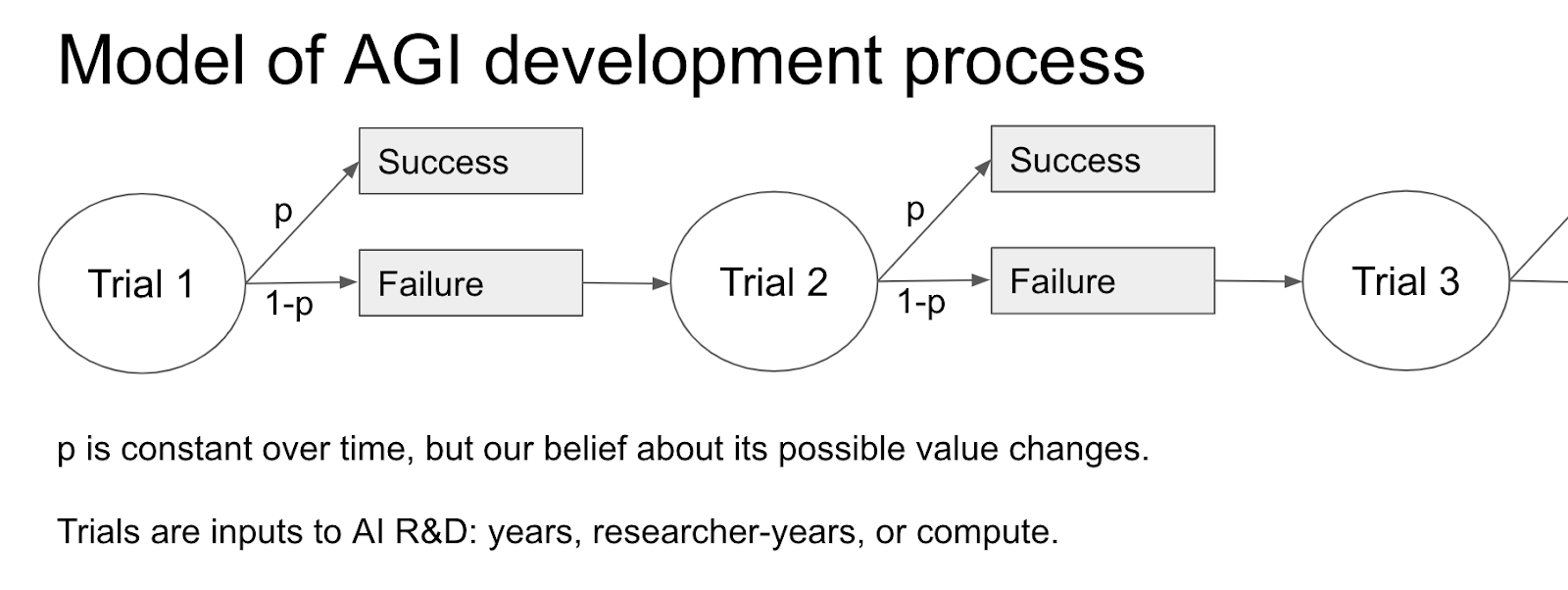

I give visual explanations for Tom Davidson’s report, Semi-informative priors over AI timelines, and summarise the key assumptions and intuitions.

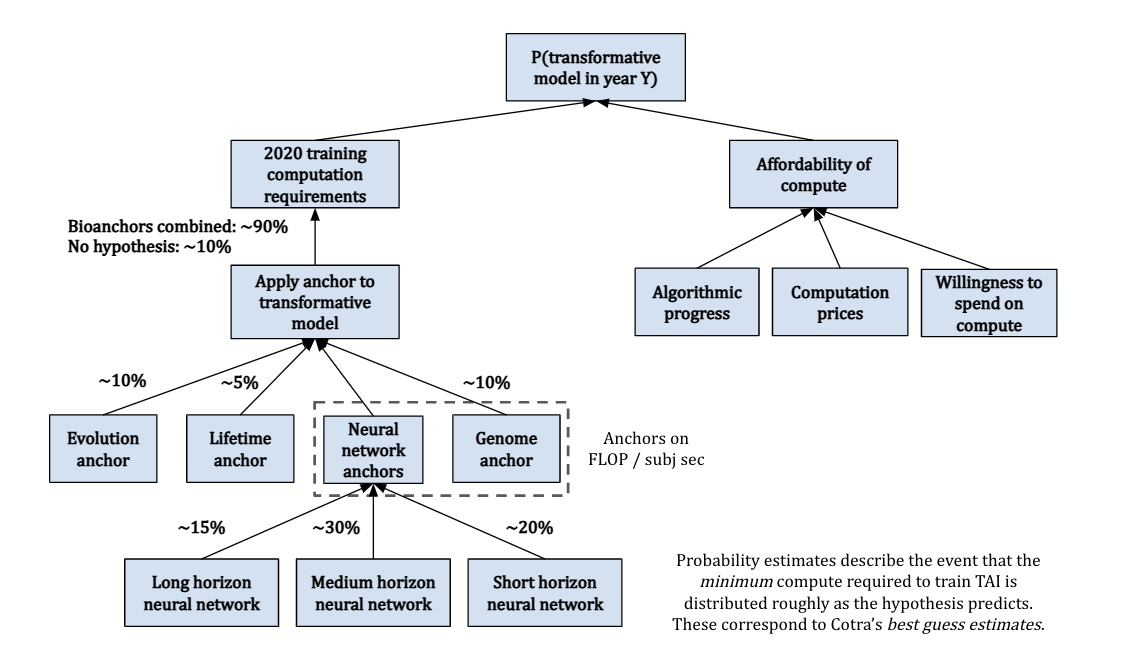

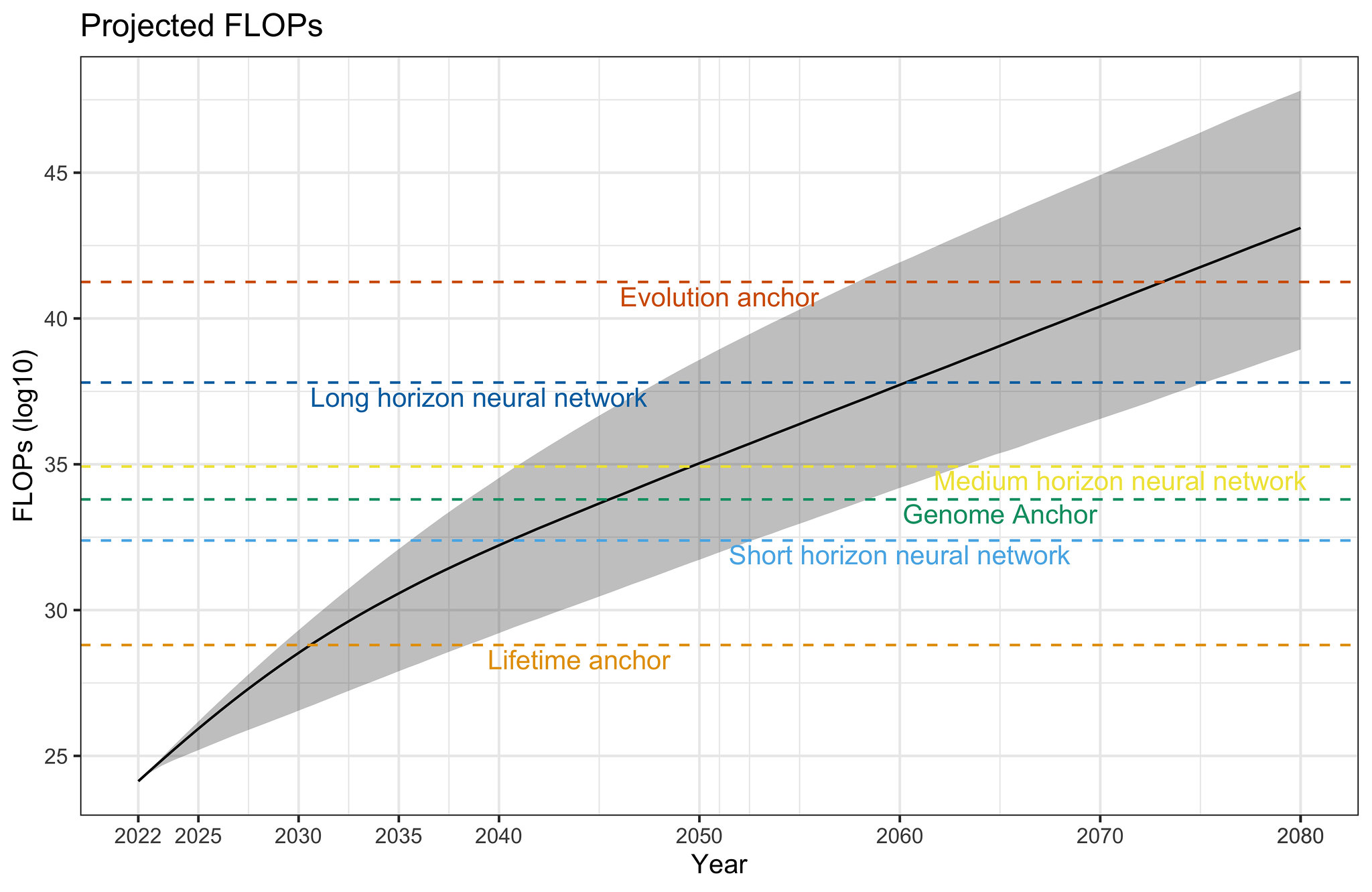

I give a visual explanation of Ajeya Cotra’s draft report, Forecasting TAI with biological anchors, summarising the key assumptions, intuitions, and conclusions.

Projecting forward 70 years' worth of trends in the amount of compute used to train machine learning models.

We’ve compiled a dataset of the training compute for over 120 machine learning models, highlighting novel trends and insights into the development of AI since 1952, and what to expect going forward.

We describe two approaches for estimating the training compute of Deep Learning systems, by counting operations and looking at GPU time.

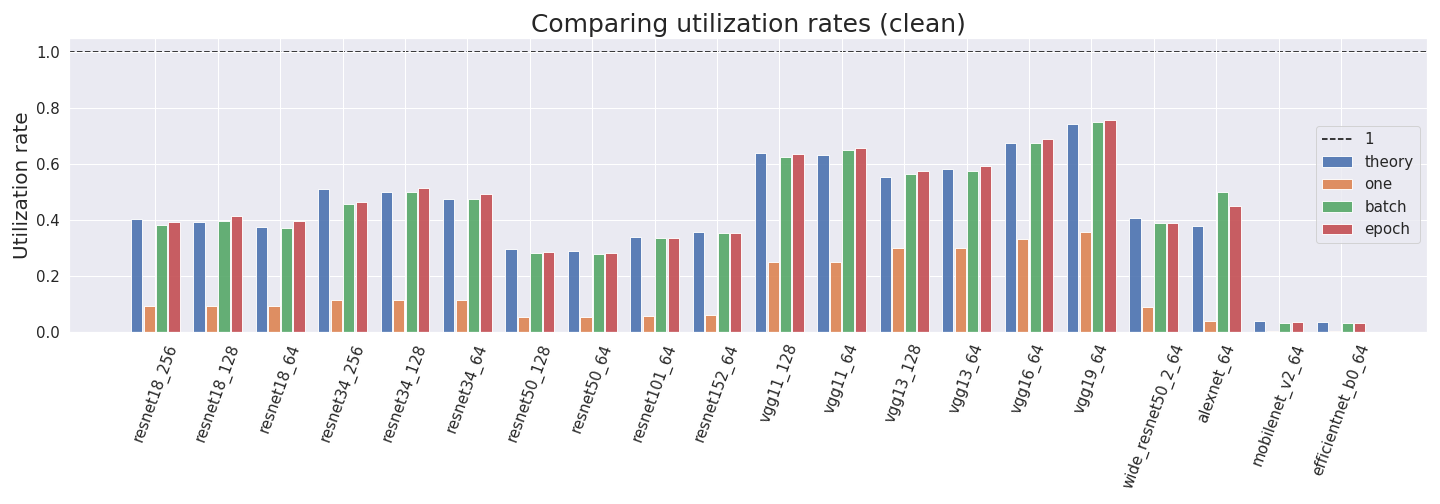

Computing the utilization rate for multiple Neural Network architectures.

Compiling a large dataset of machine learning models to determine changes in the parameter counts of systems since 1952.