Featured

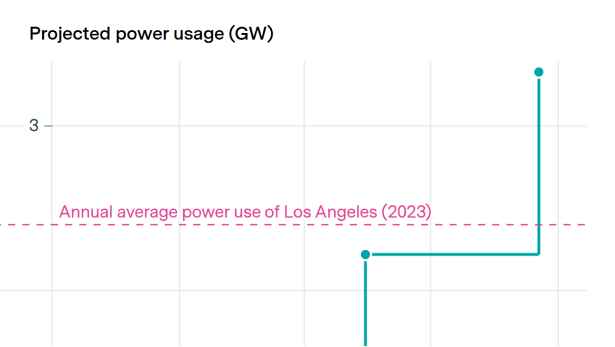

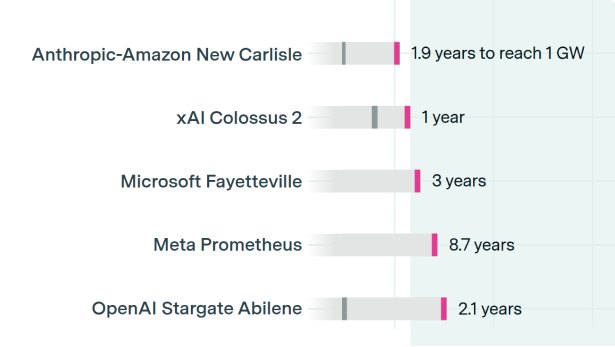

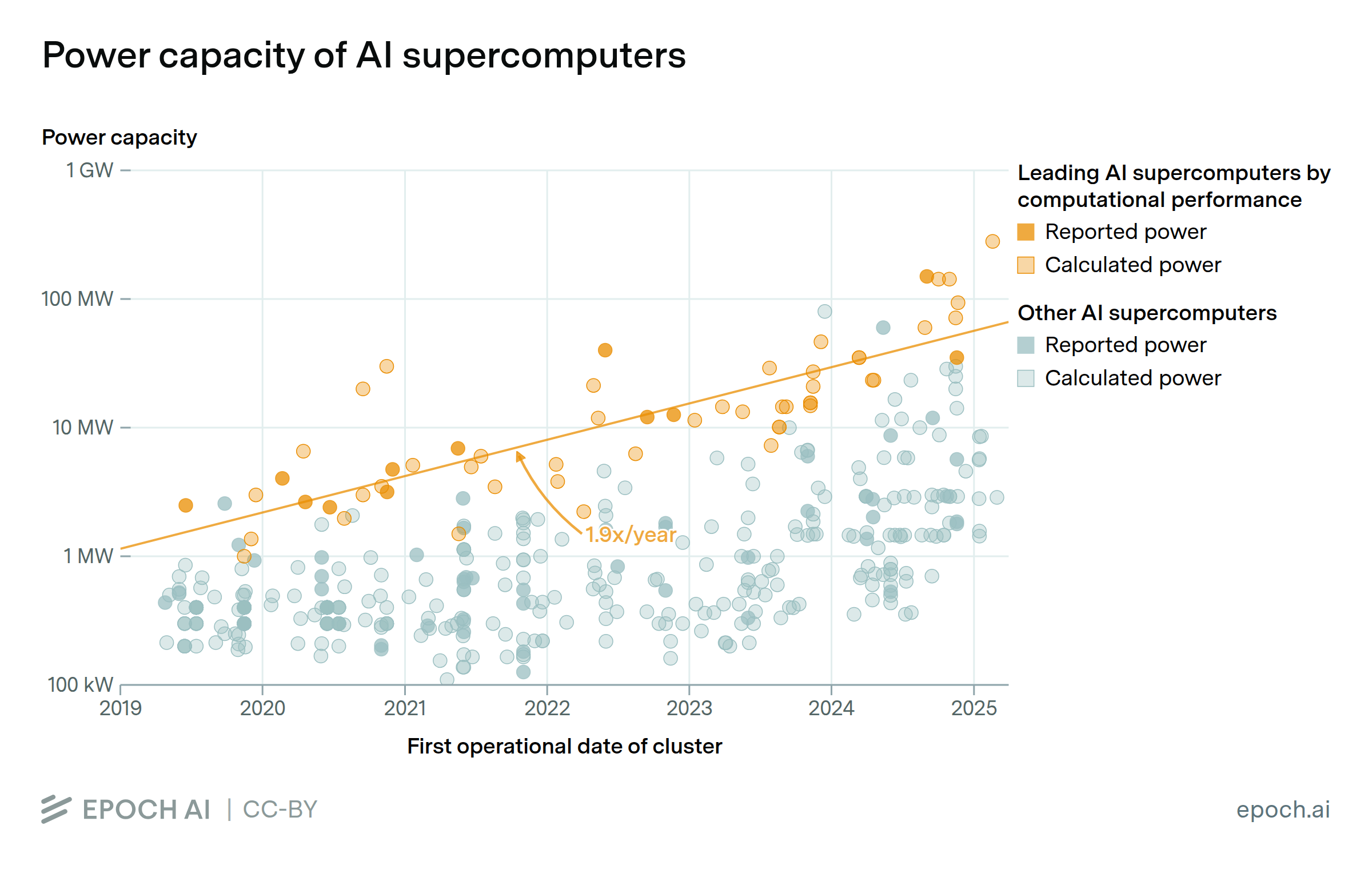

Training and running frontier AI models requires enormous physical infrastructure: warehouses packed with specialized chips that consume as much power as small cities. The largest are being built at extraordinary speed, going from empty land to operational facilities in under two years. Using satellite imagery and permit data, Epoch tracks the scale and growth of AI data centers and supercomputers, from build times and costs to compute capacity and power demands.

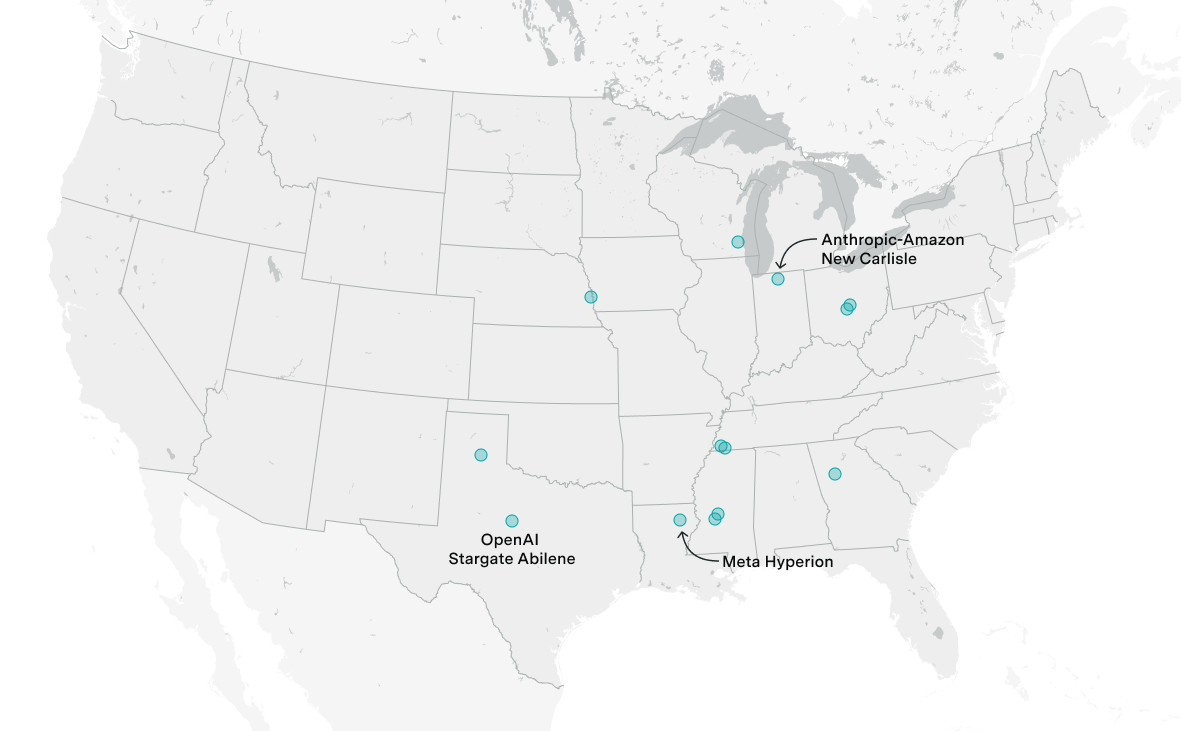

The $500 billion AI data center initiative is projected to exceed 9 gigawatts of capacity by 2029, with 0.3 gigawatts already operational in Abilene and six more US sites under active construction.

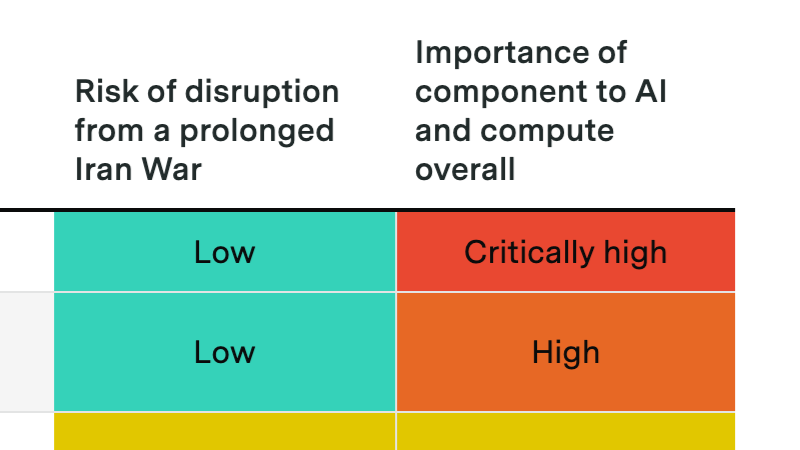

A prolonged Hormuz crisis probably won't derail the compute buildout, but it could slow data center expansion and disrupt Gulf investment flows into AI.

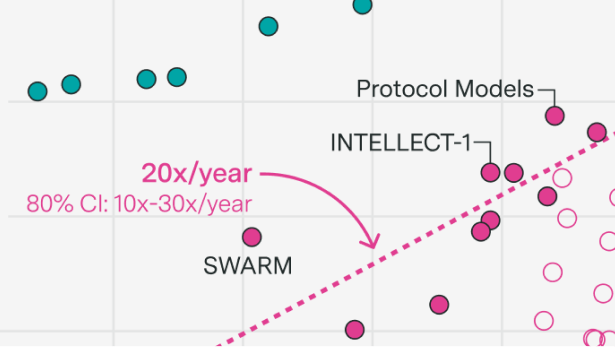

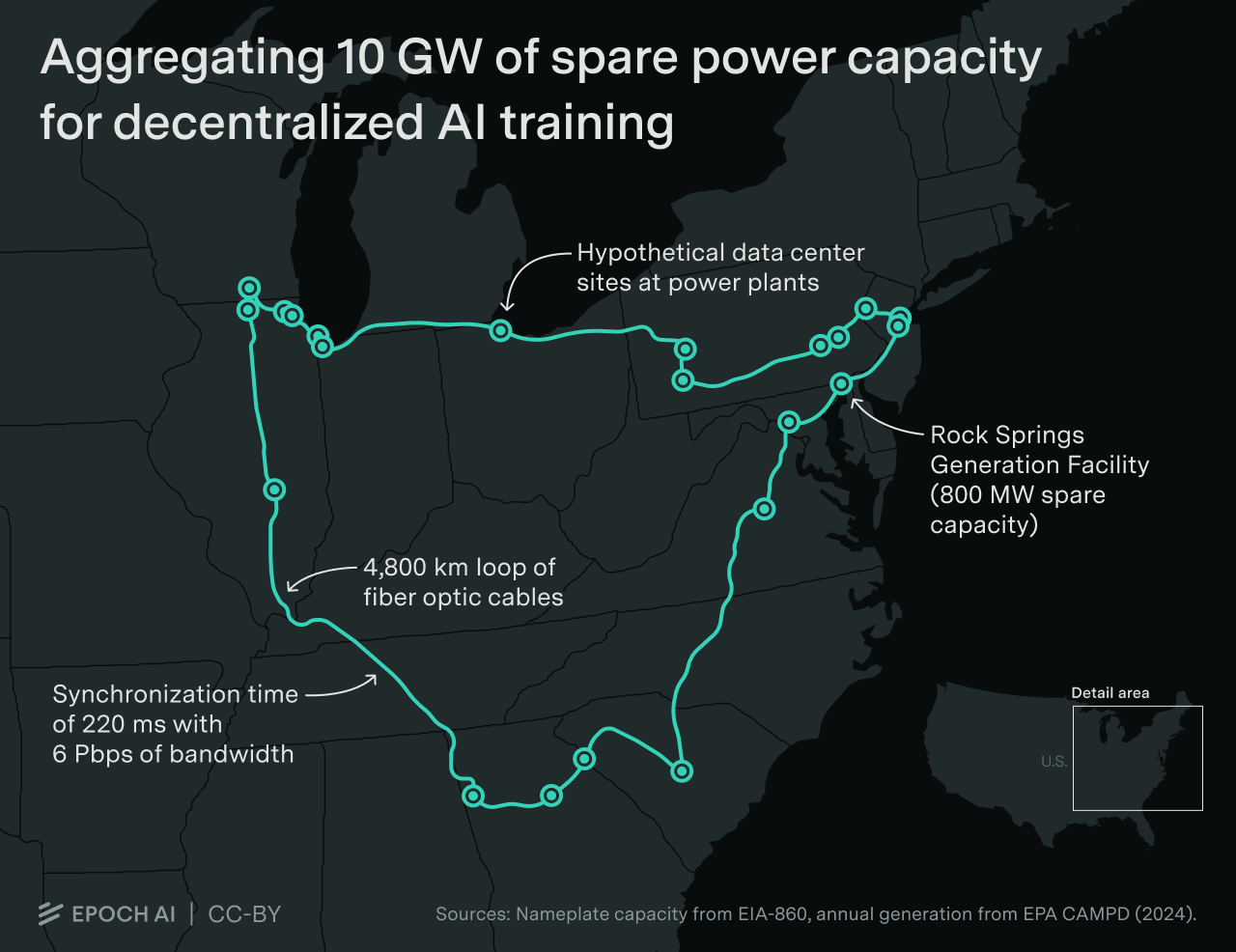

Decentralized training over the internet promises to let training runs scale as far as the internet's own capacity allows.

Why power is less of a bottleneck than you think.

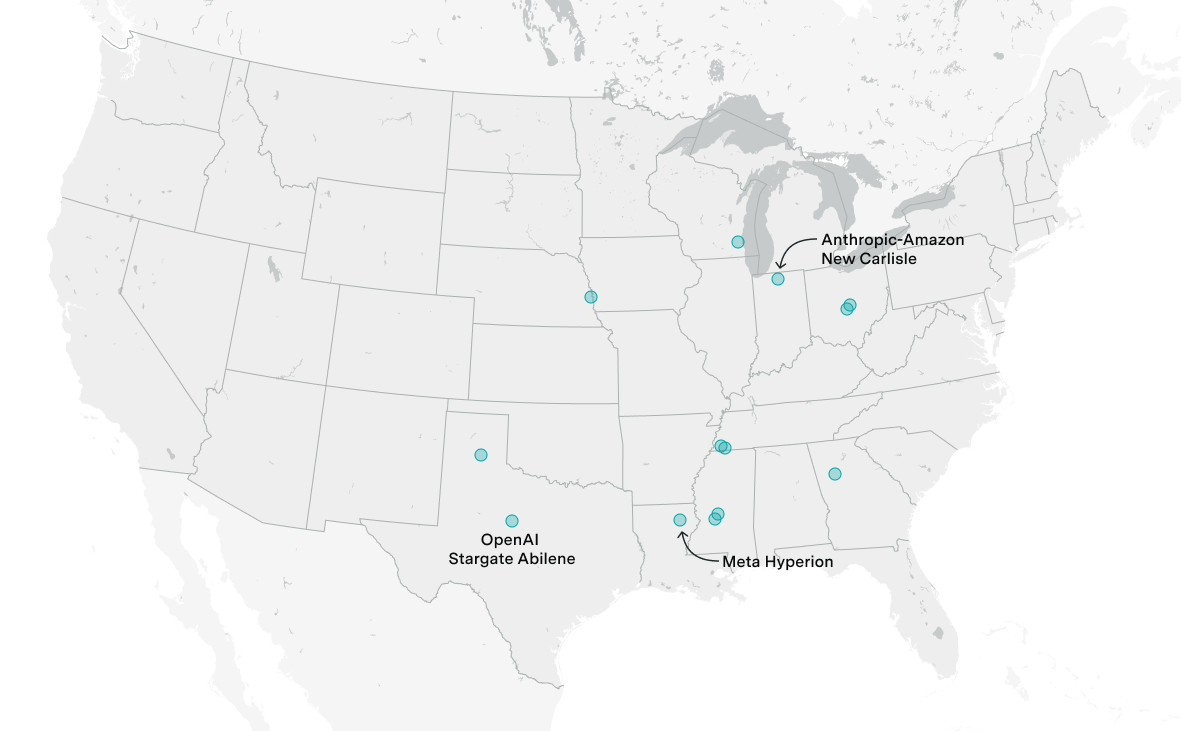

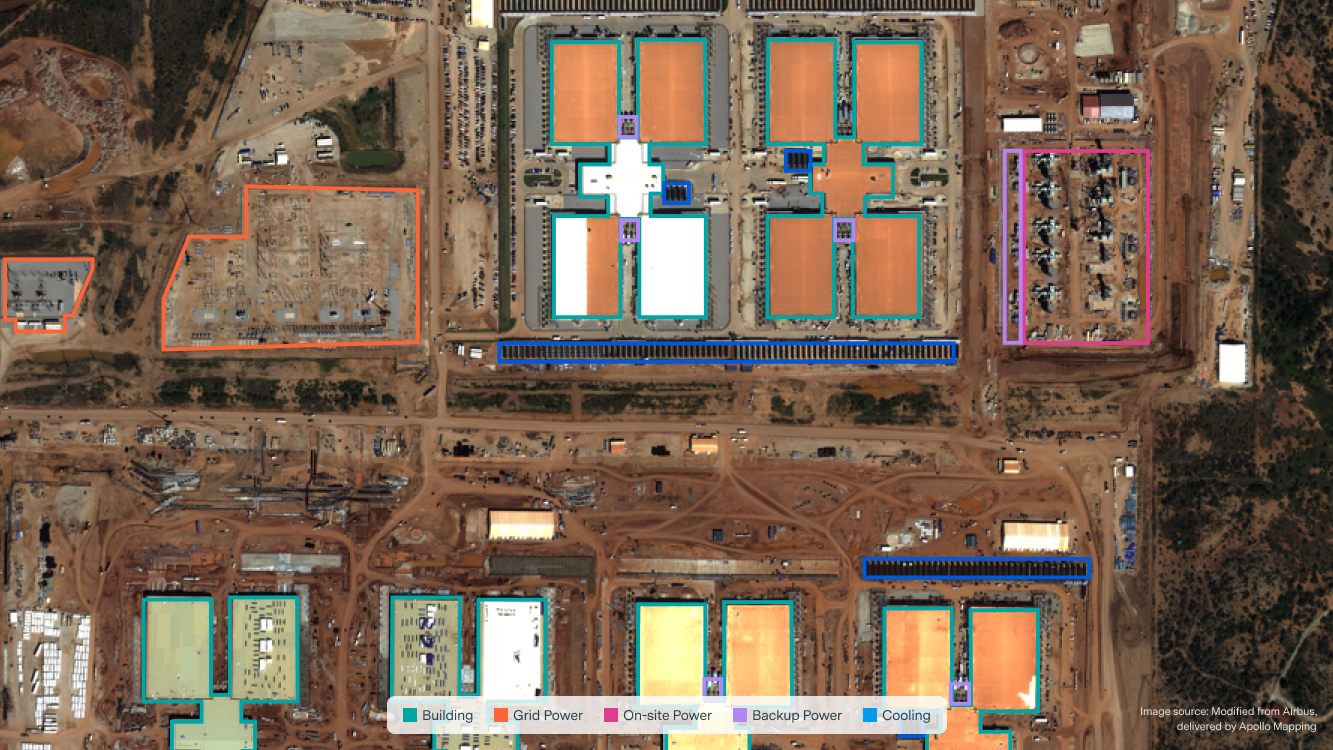

We announce our new Frontier Data Centers Hub, a database tracking large AI data centers using satellite and permit data to show compute, power use, and construction timelines.

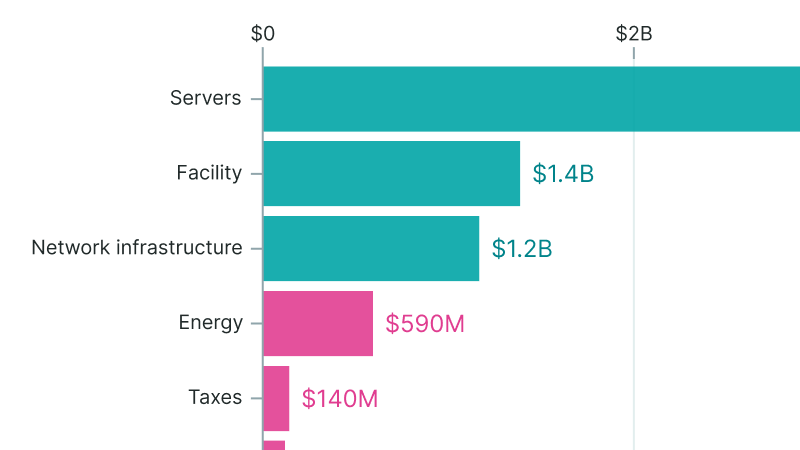

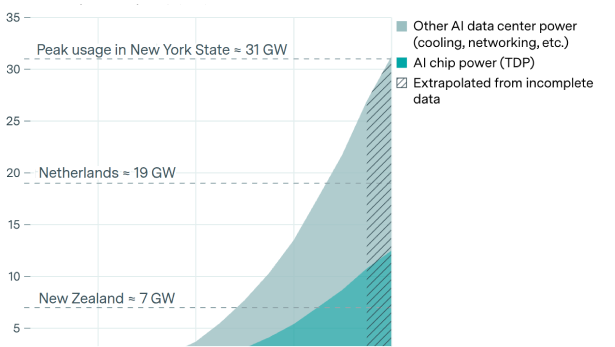

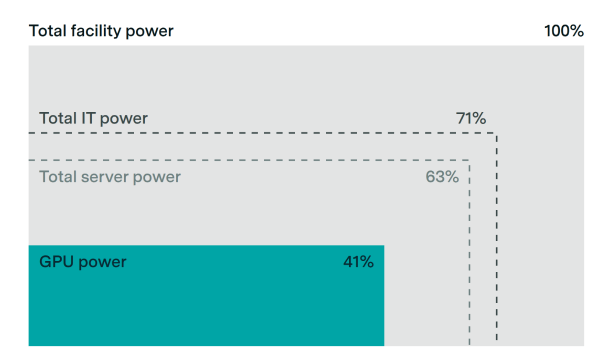

AI companies are planning a buildout of data centers that will rank among the largest infrastructure projects in history. We examine their power demands, what makes AI data centers special, and what all this means for AI policy and the future of AI.

We illustrate a decentralized 10 GW training run across a dozen sites spanning thousands of kilometers. Developers are likely to scale data centers to multi-gigawatt levels before adopting decentralized training.

An AI Manhattan Project could accelerate compute scaling by two years.

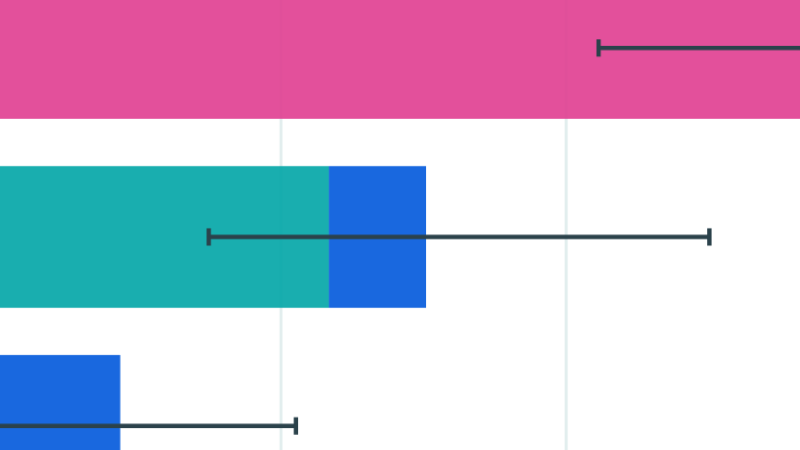

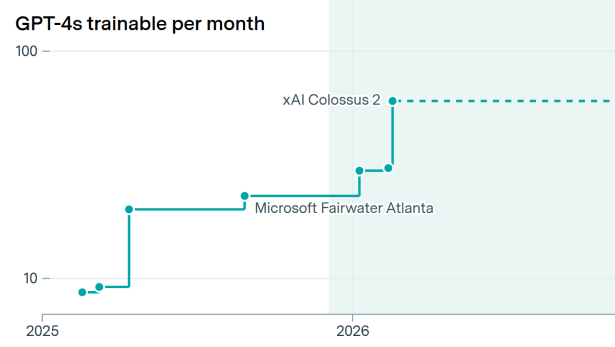

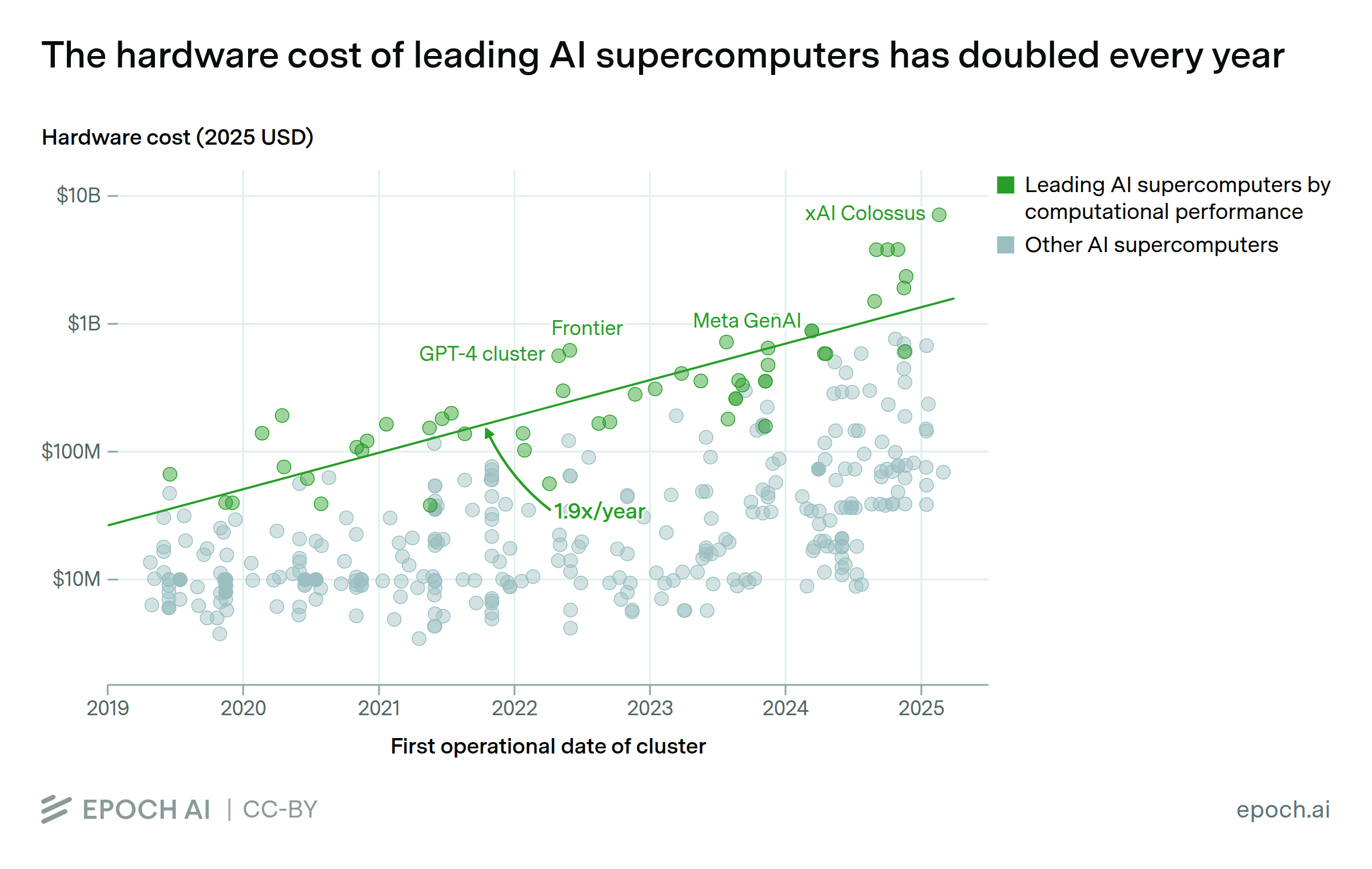

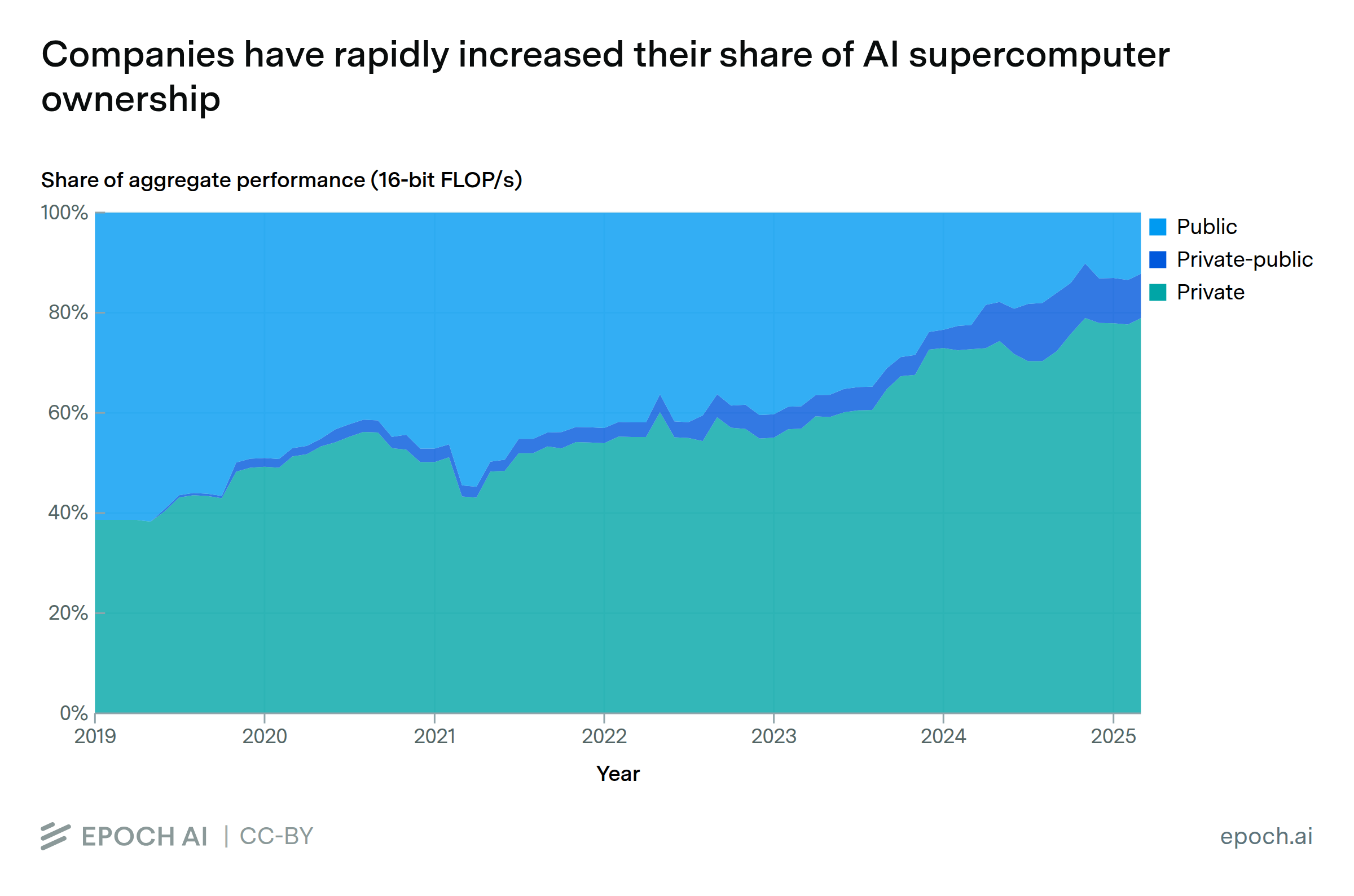

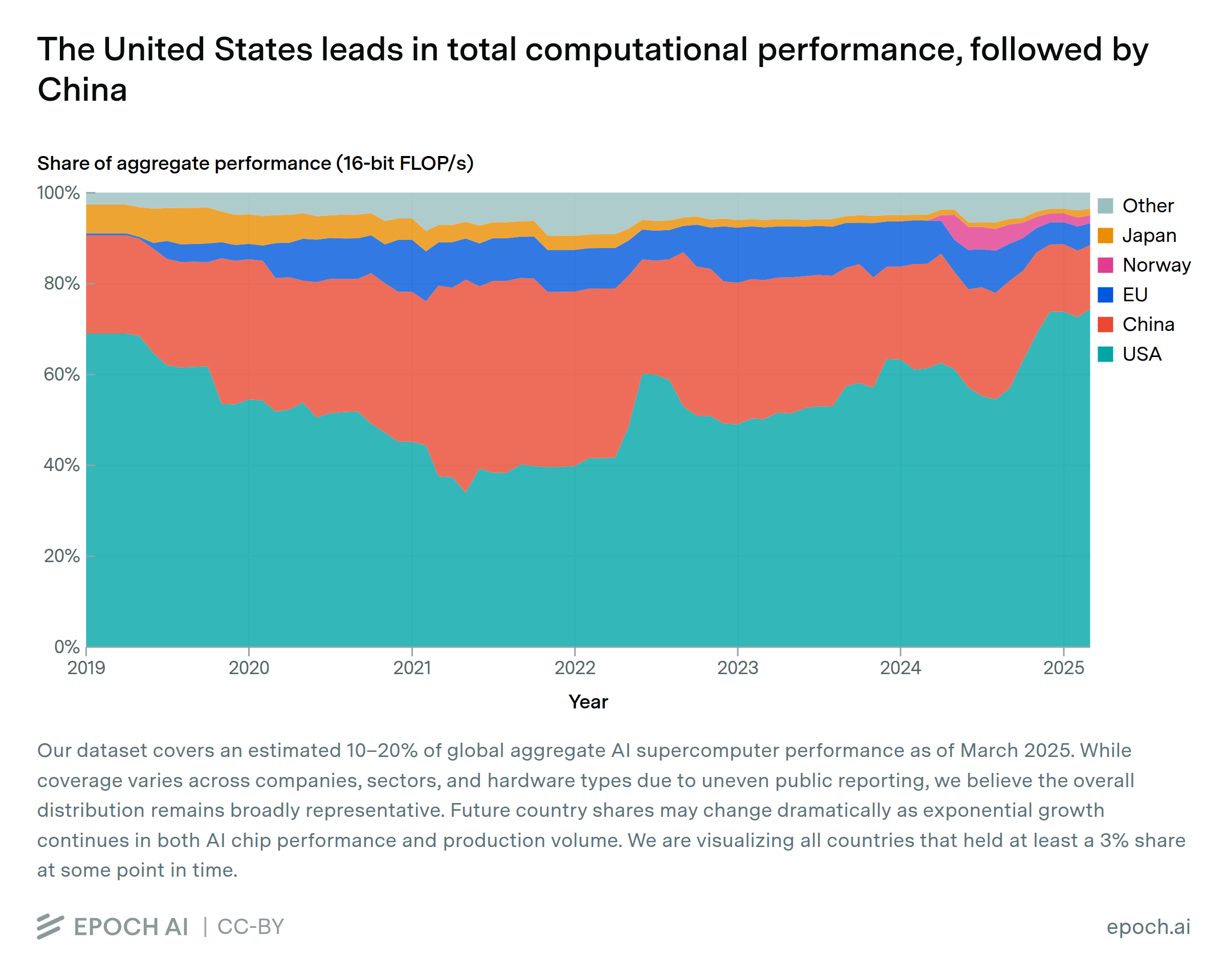

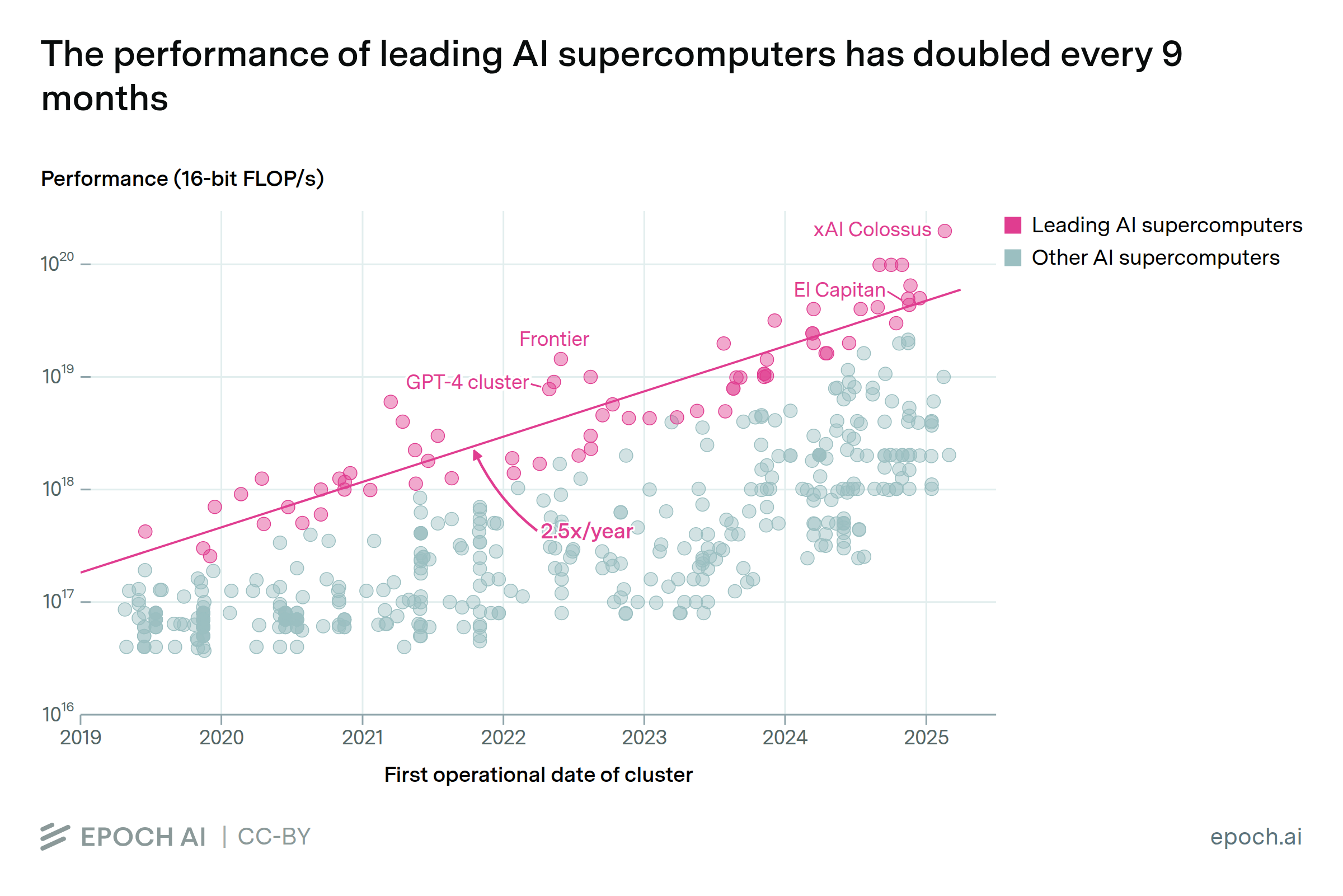

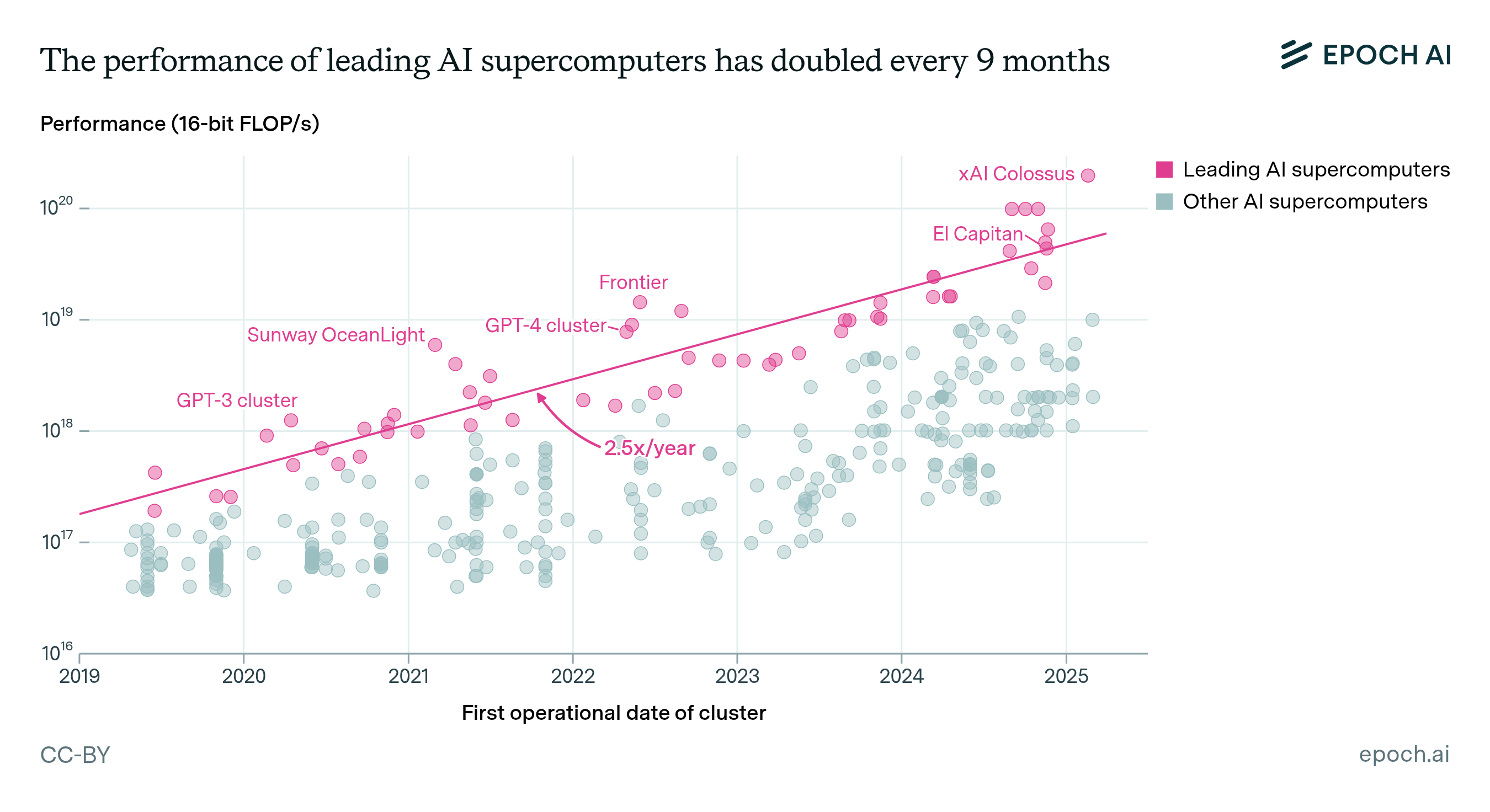

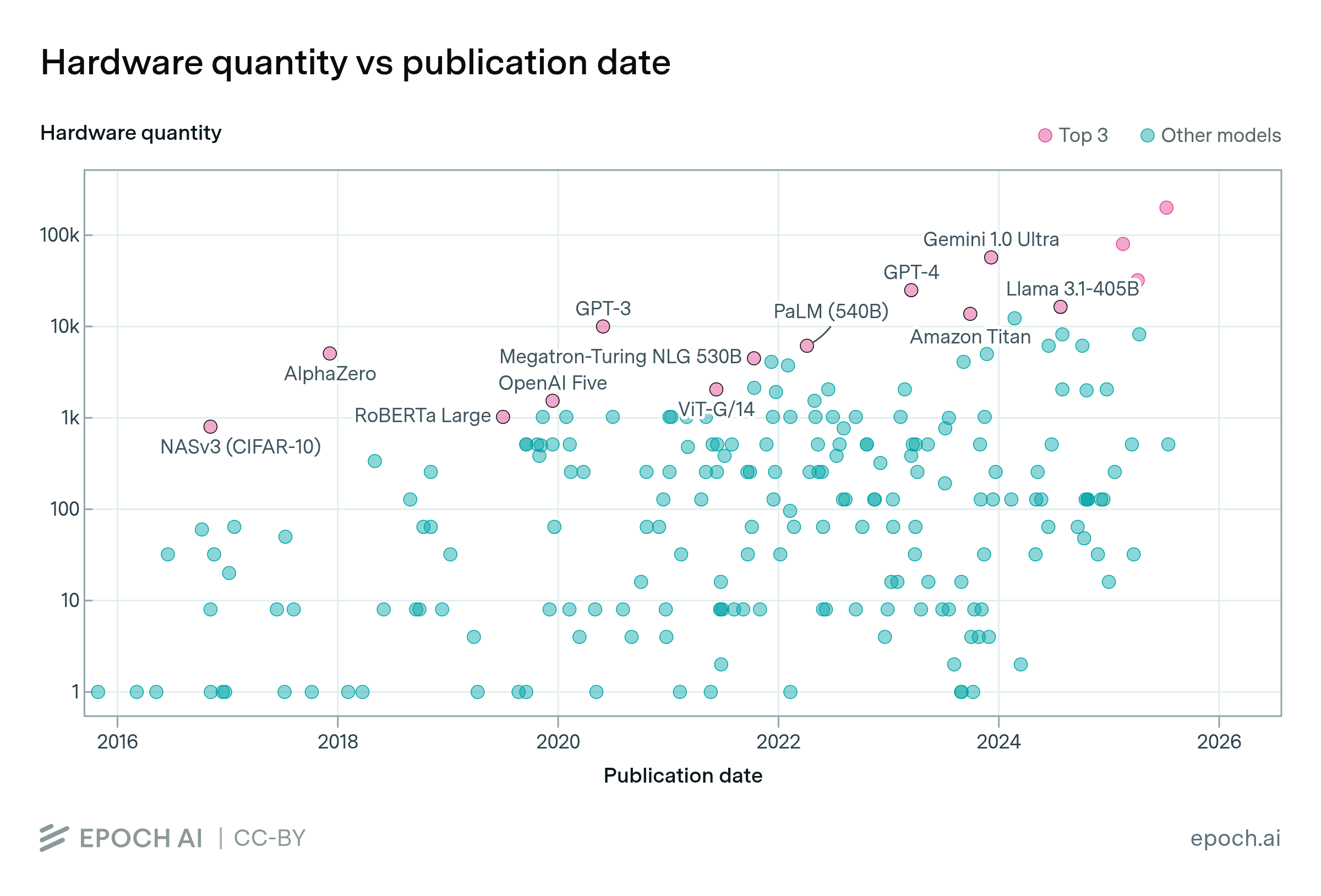

AI supercomputers double in performance every 9 months, cost billions of dollars, and require as much power as mid-sized cities. Companies now own 80% of all AI supercomputers, while governments’ share has declined.

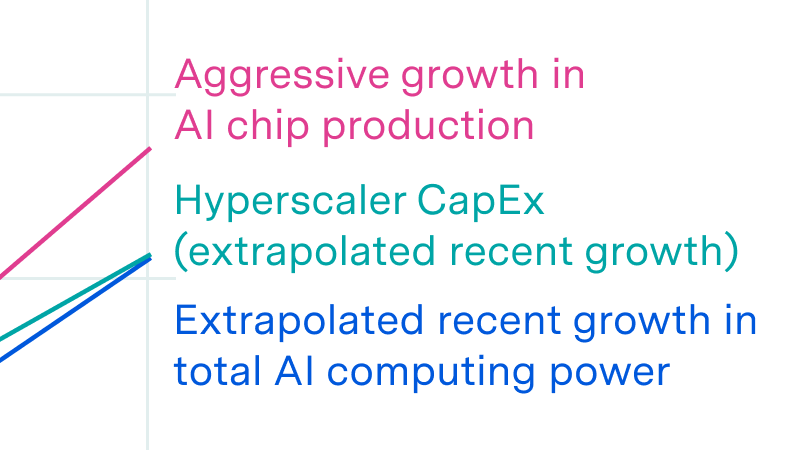

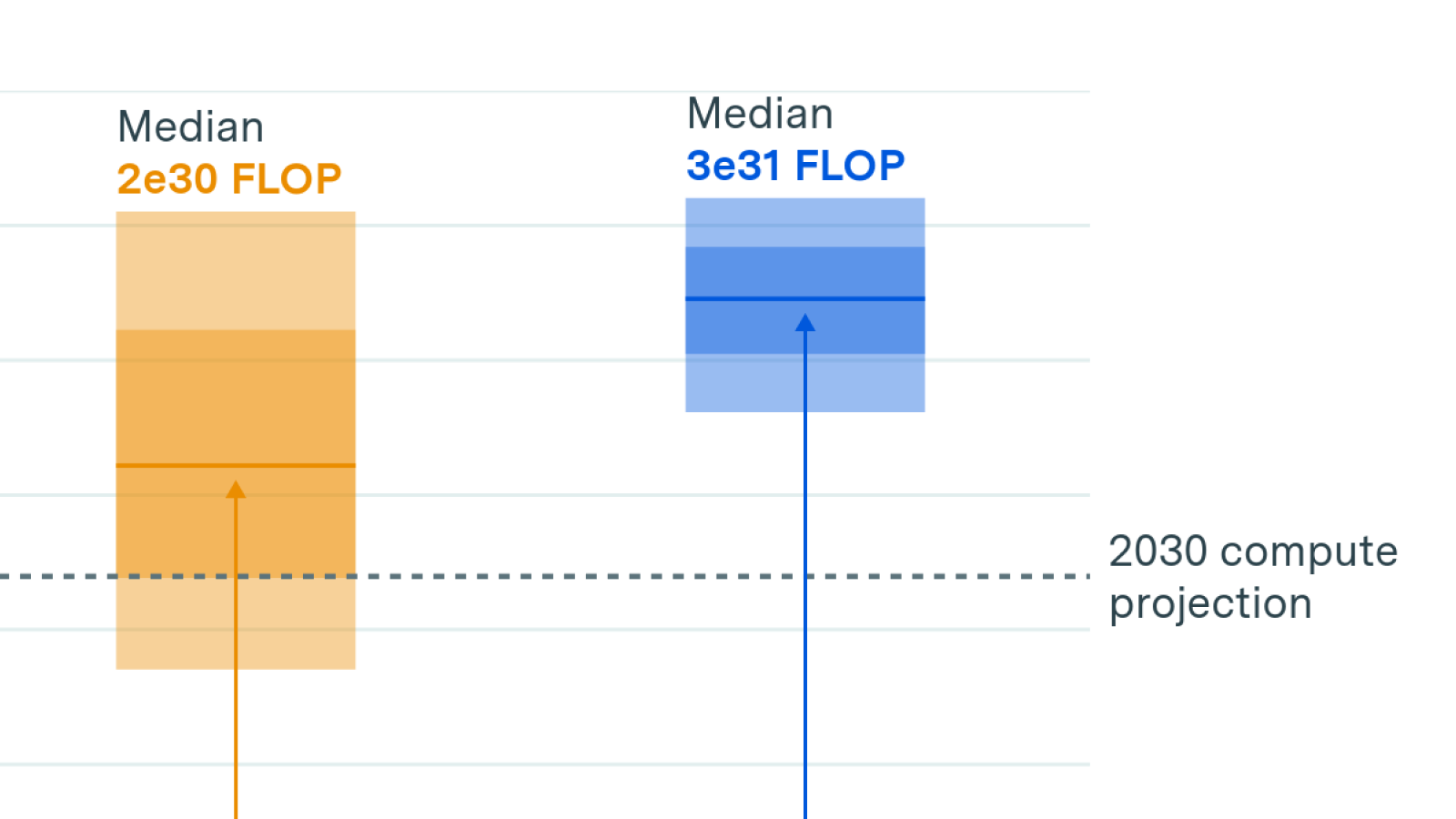

We investigate the scalability of AI training runs and identify four constraints: electric power, chip manufacturing, data, and latency. We conclude that 2e29 FLOP training runs will likely be feasible by 2030.