Featured

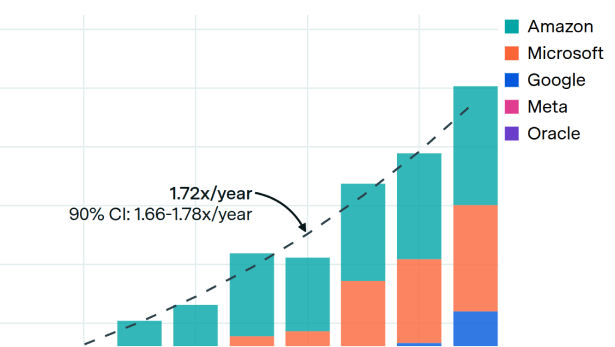

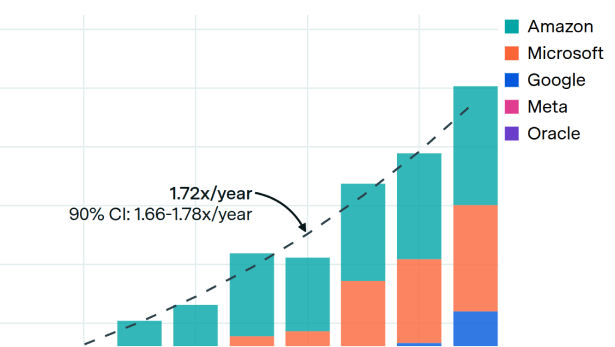

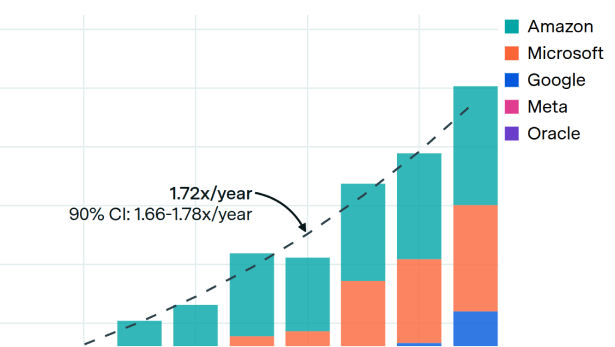

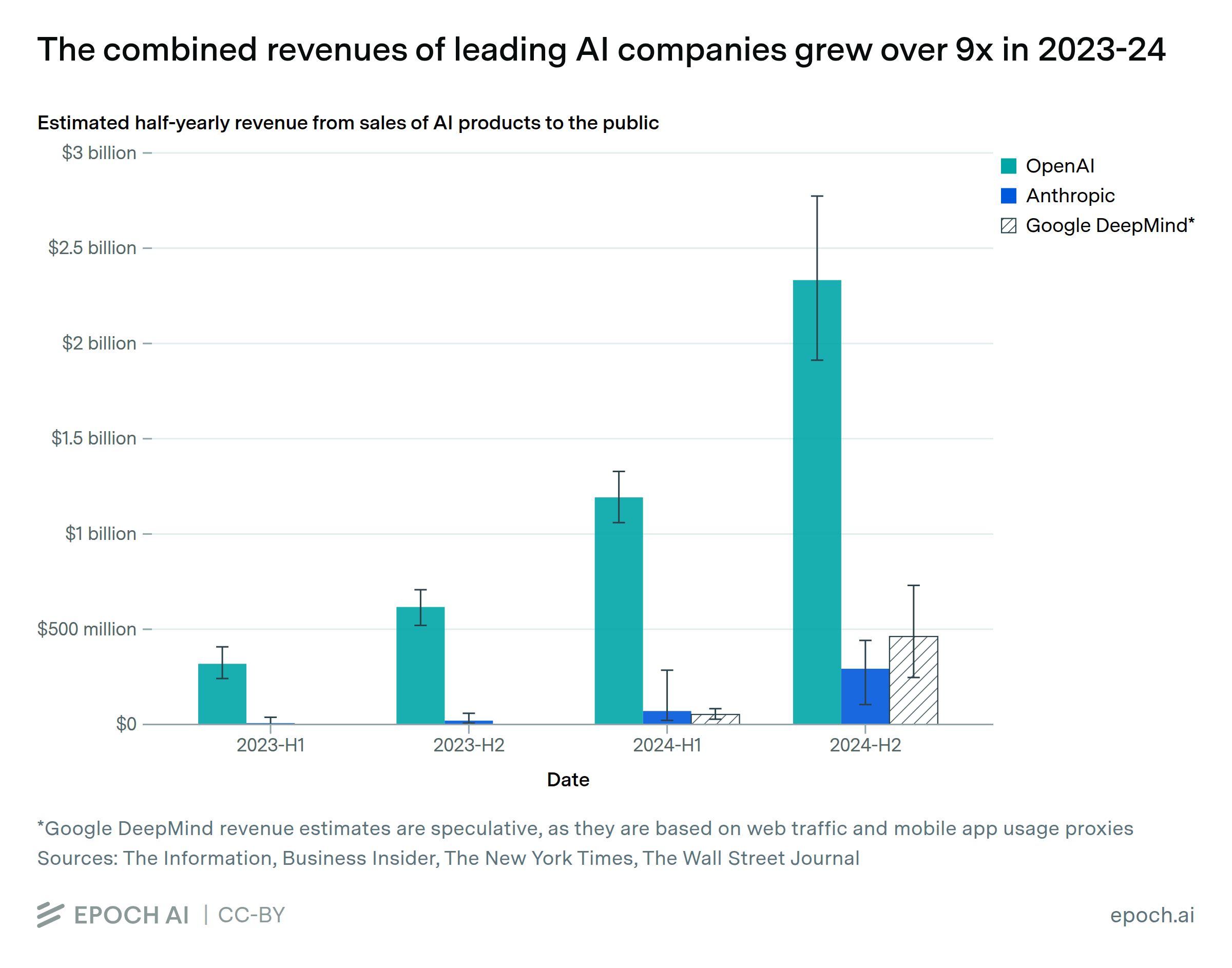

AI is attracting unprecedented levels of investment, with companies spending billions on hardware, data centers, and talent to build frontier models. The costs are rising fast, and the gap between what companies are spending and what they are earning is an important dynamic in the industry. Epoch tracks the financial side of AI development: what it costs to train and run models, how much companies are spending and earning, and how hardware prices are trending.

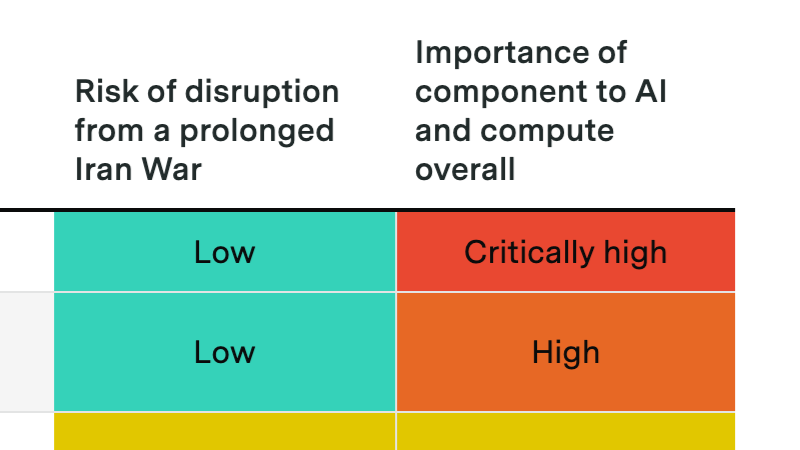

A prolonged Hormuz crisis probably won't derail the compute buildout, but it could slow data center expansion and disrupt Gulf investment flows into AI.

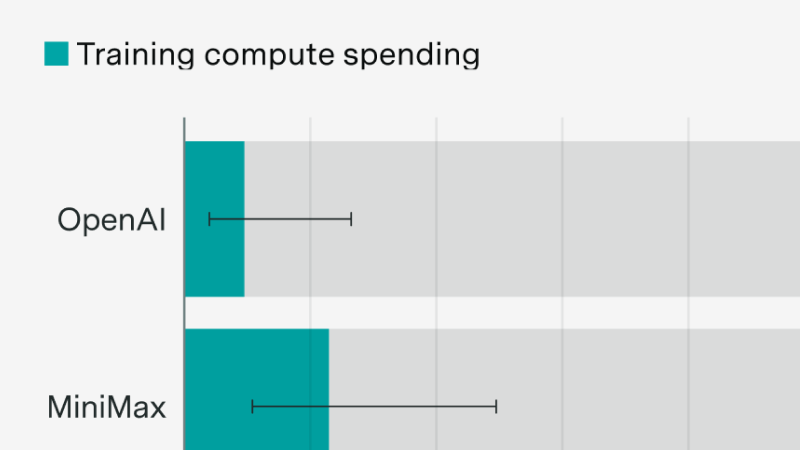

New evidence following the MiniMax and Z.ai IPOs

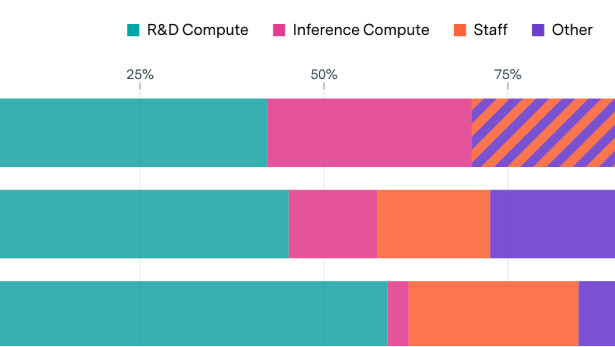

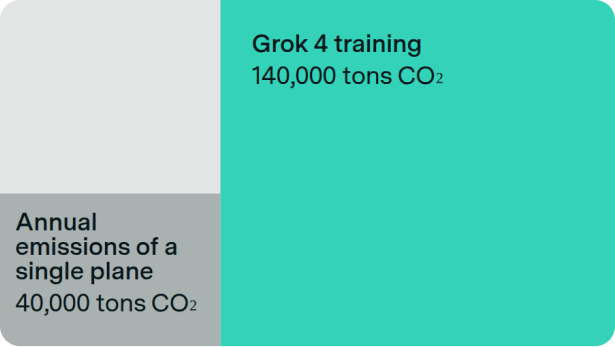

Toby Ord argues that RL scaling primarily increases inference costs, creating a persistent economic burden. While the framing is useful, the cost to reach a given capability level falls fast, and the RL scaling data is thin.

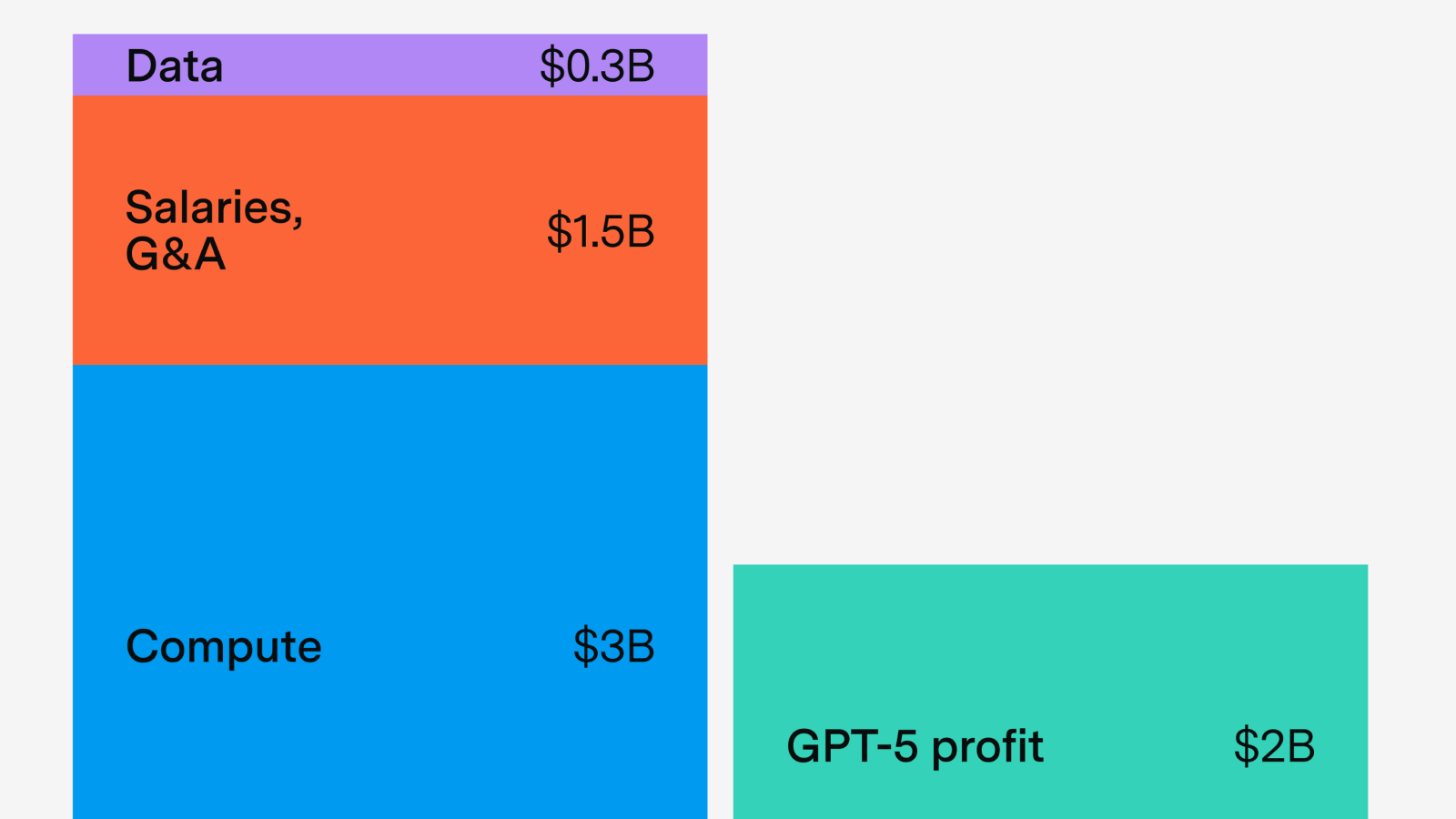

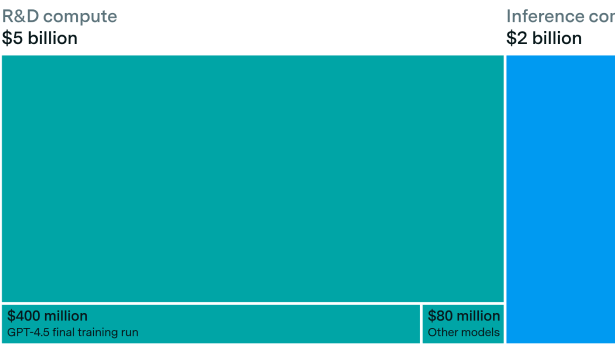

Lessons from GPT-5’s economics

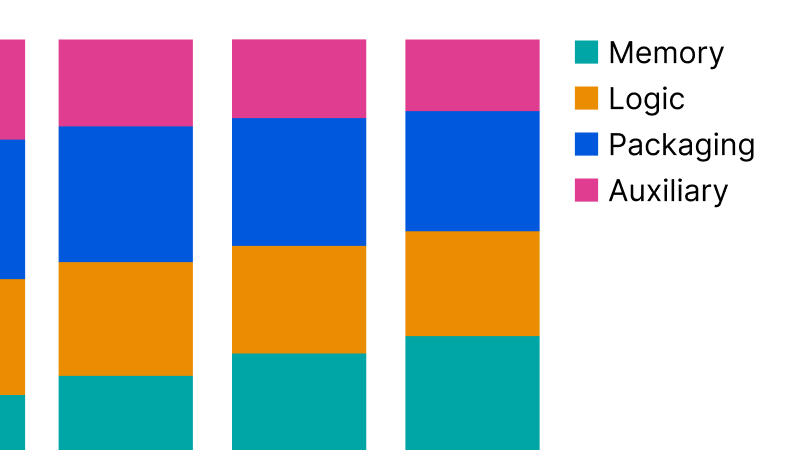

We announce our new AI Chip Sales data explorer, which uses financial reports, company disclosures, and more to estimate compute, power usage, and spending over time for a wide variety of AI chips.

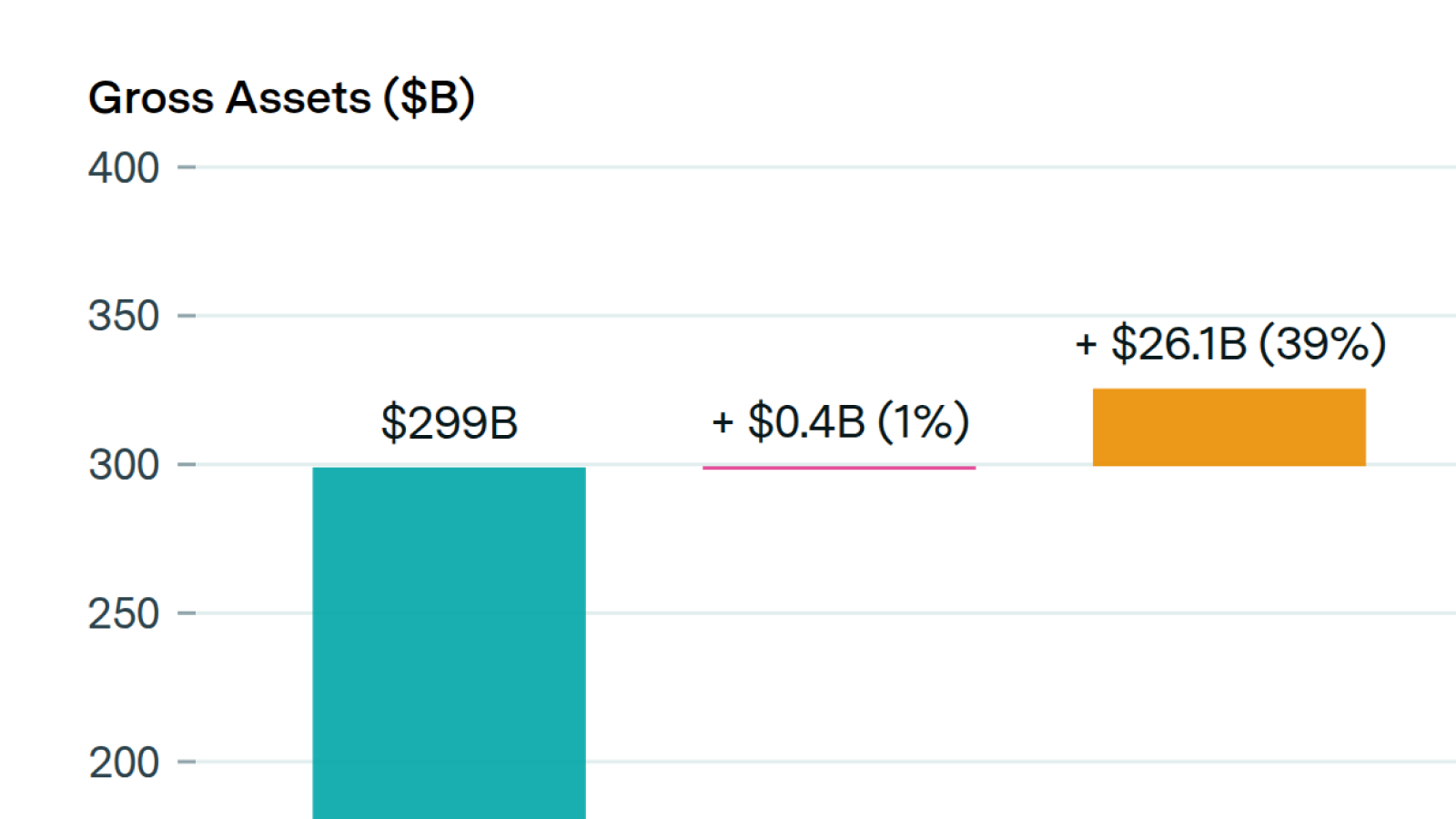

No company has gone from $10B to $100B as fast as OpenAI projects it will.

A heavily underappreciated dynamic when thinking about AI timelines.

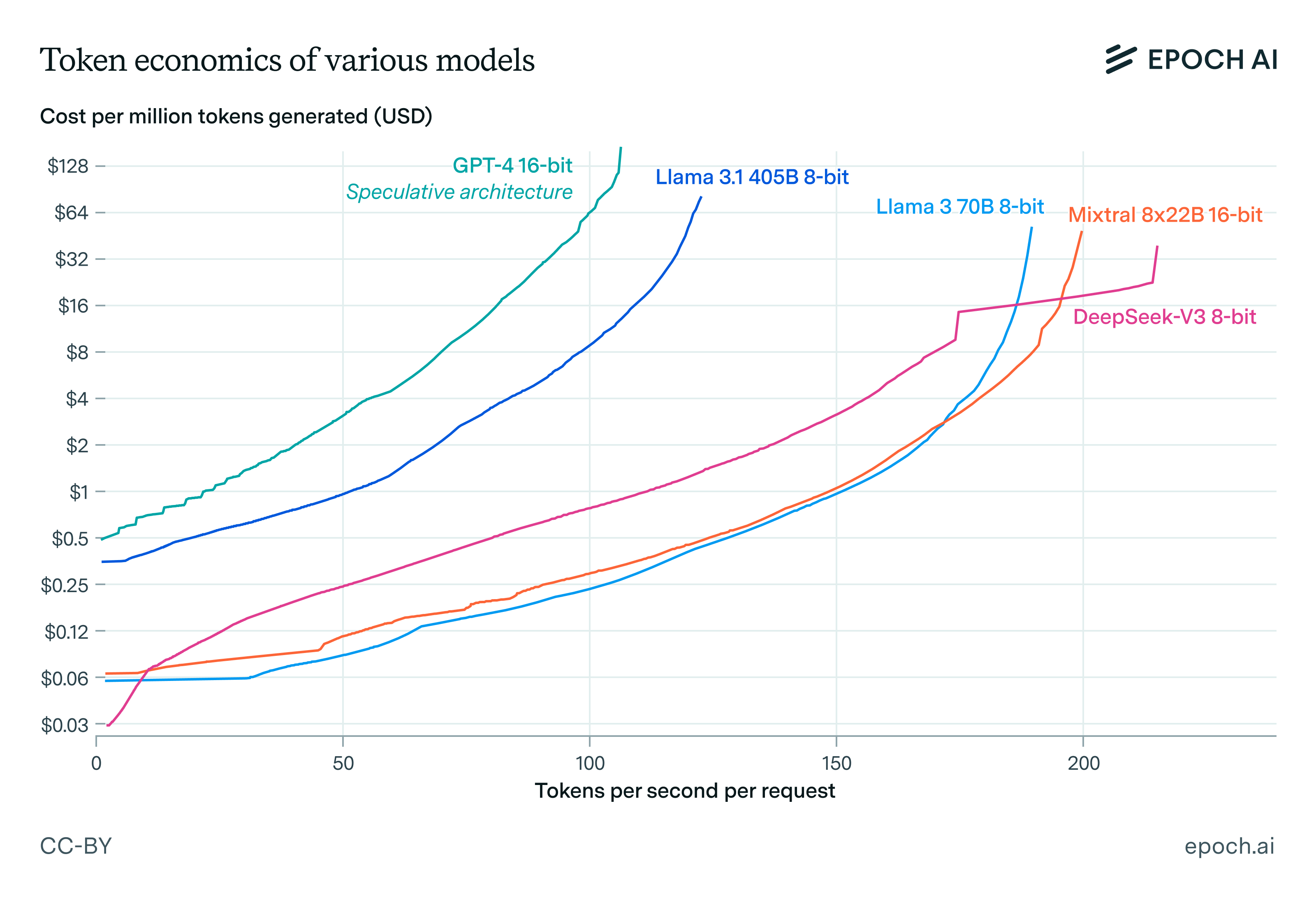

We investigate how speed trades off against cost in language model inference. Among other results, we find that inference latency scales with the square root of model size and the cube root of memory bandwidth.

Algorithmic progress in AI may not reduce compute spending. Instead, it could drive higher investment as efficiency unlocks new opportunities.

This Gradient Updates issue explores DeepSeek-R1's architecture, training cost, and pricing, showing how it rivals OpenAI's o1 at 30x lower cost.

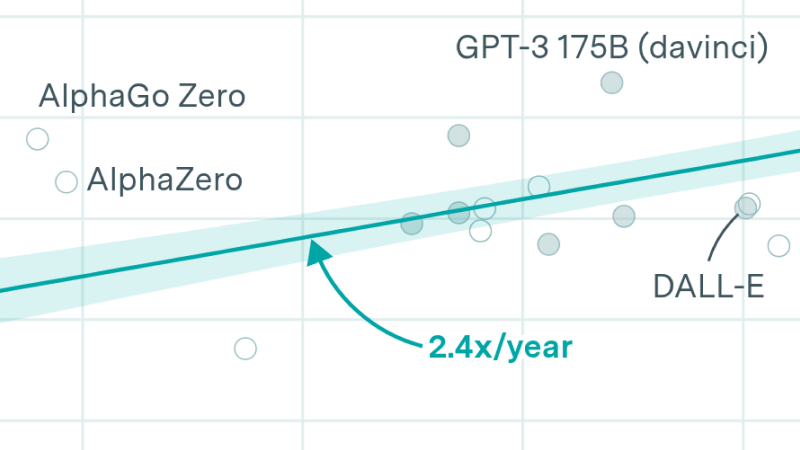

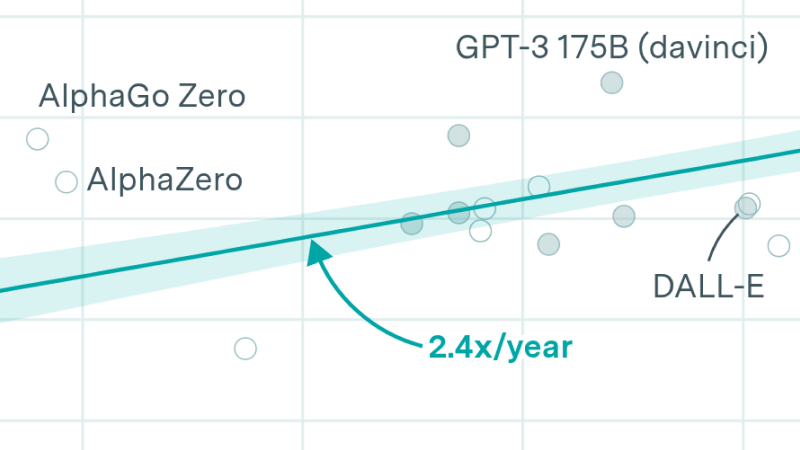

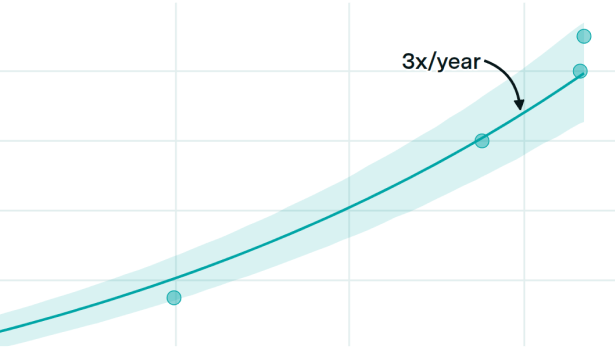

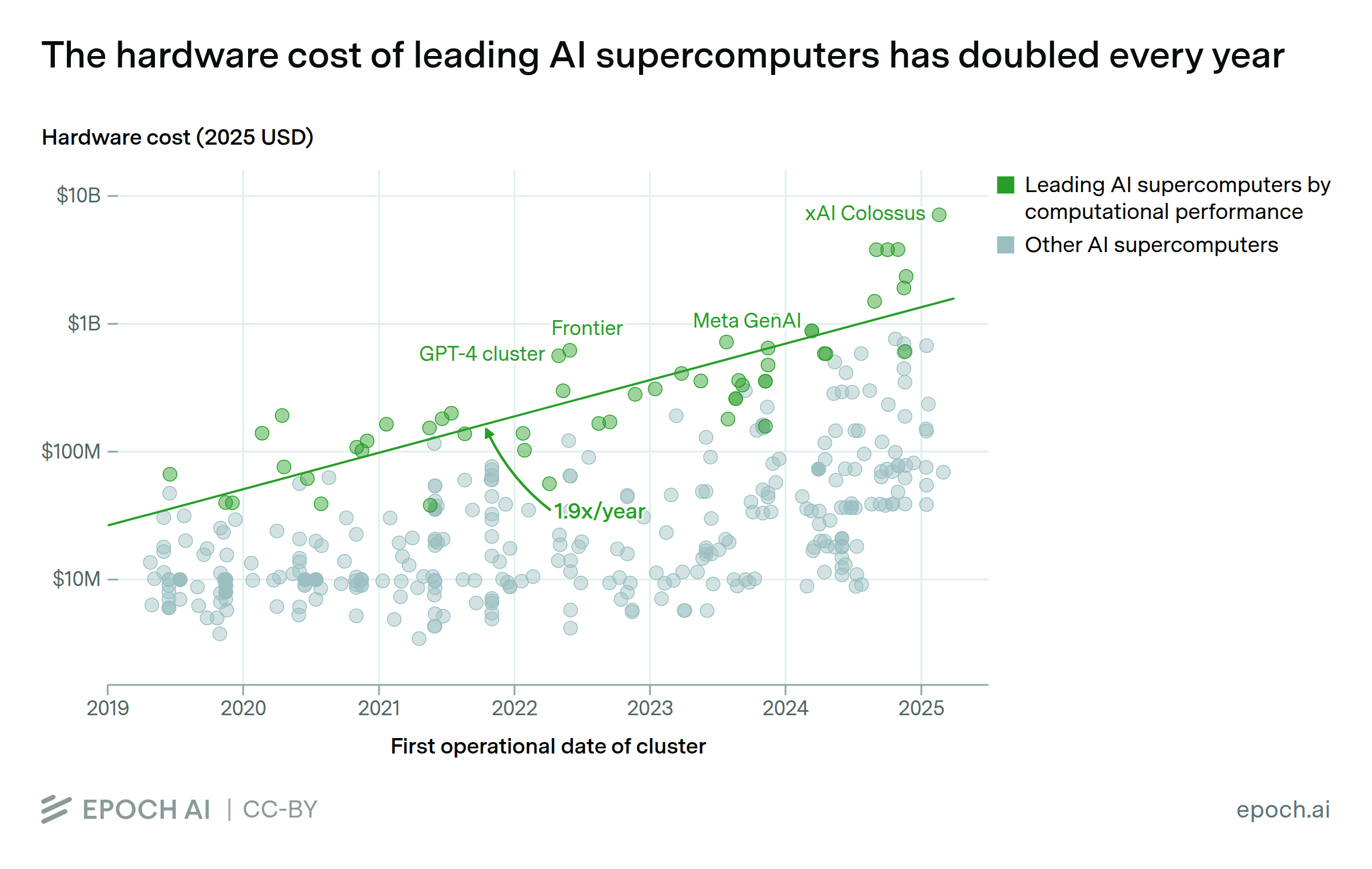

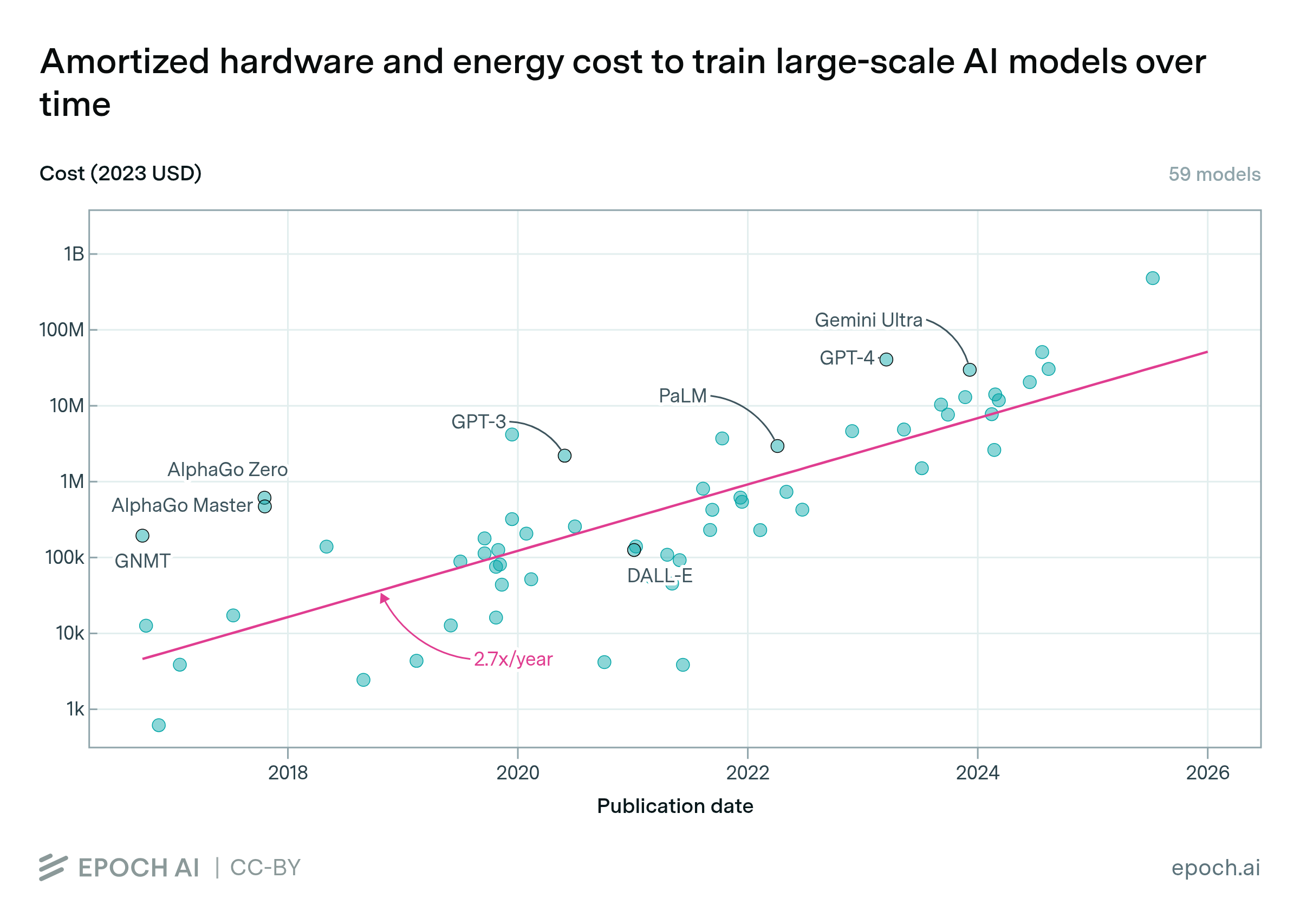

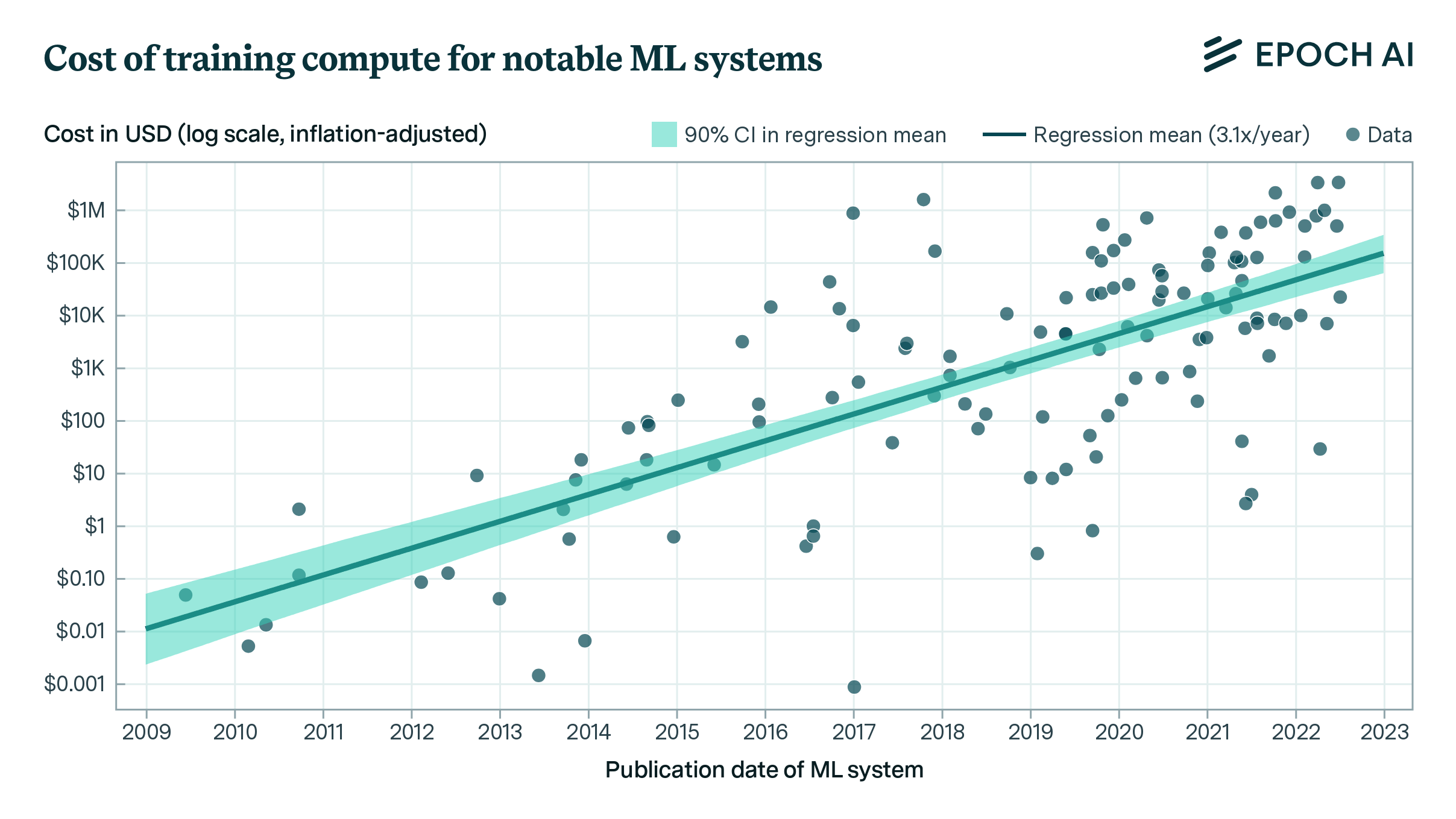

The cost of training frontier AI models has grown by a factor of 2 to 3 per year for the past eight years, suggesting that the largest models will cost over a billion dollars by 2027.

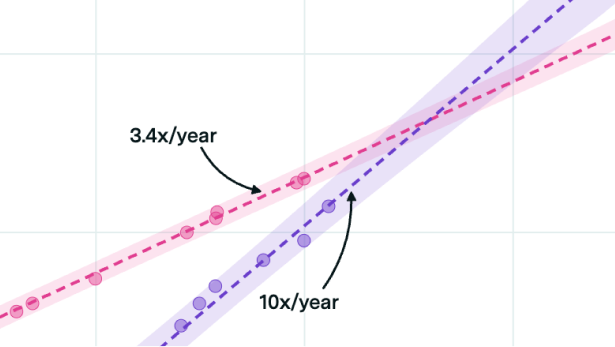

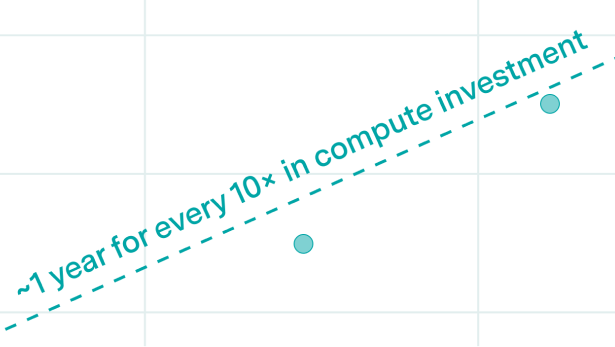

I combine training compute and GPU price-performance data to estimate the cost of compute in US dollars for the final training run of 124 machine learning systems published between 2009 and 2022, and find that the cost has grown by approximately 0.5 orders of magnitude per year.
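To make the "0.5 orders of magnitude per year" figure easier to compare with the other growth rates on this page, the short sketch below converts it into a yearly multiplier and a doubling time. The function names are illustrative only and are not taken from the article. The conversion is plain arithmetic: one order of magnitude is a factor of 10.

```python
import math


def oom_per_year_to_factor(oom_per_year: float) -> float:
    """Convert a growth rate in orders of magnitude per year to a yearly multiplier."""
    return 10.0 ** oom_per_year


def oom_per_year_to_doubling_time(oom_per_year: float) -> float:
    """Years needed to double at the given orders-of-magnitude-per-year rate."""
    return math.log10(2.0) / oom_per_year


# 0.5 orders of magnitude per year, the rate quoted in the summary above.
factor = oom_per_year_to_factor(0.5)
doubling = oom_per_year_to_doubling_time(0.5)
print(f"{factor:.2f}x per year, doubling every {doubling:.2f} years")
```

Under this conversion, 0.5 orders of magnitude per year is roughly a 3.2x increase each year, or a doubling about every seven months.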

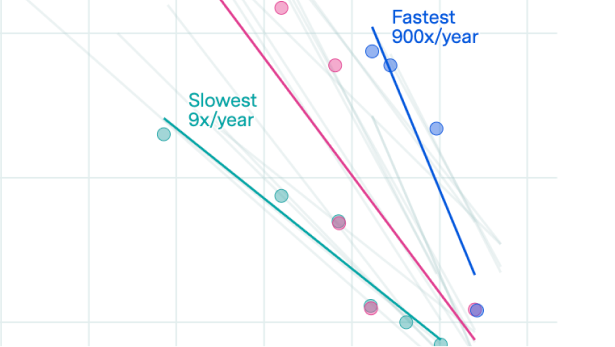

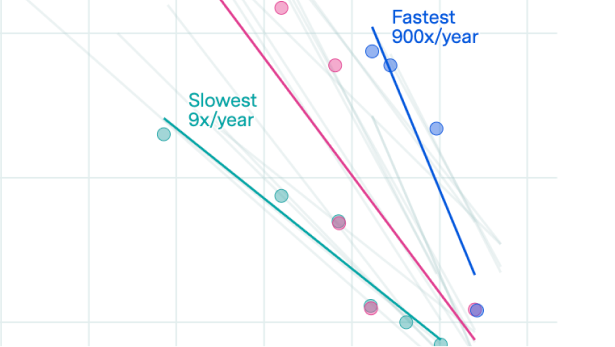

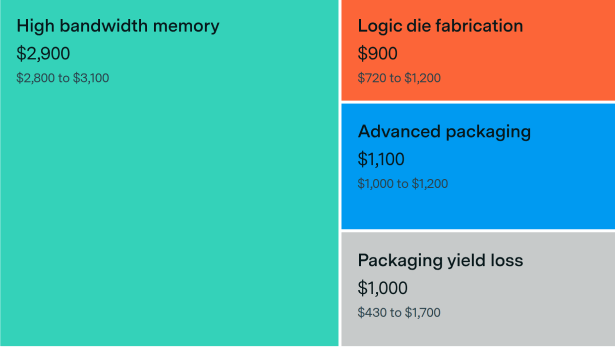

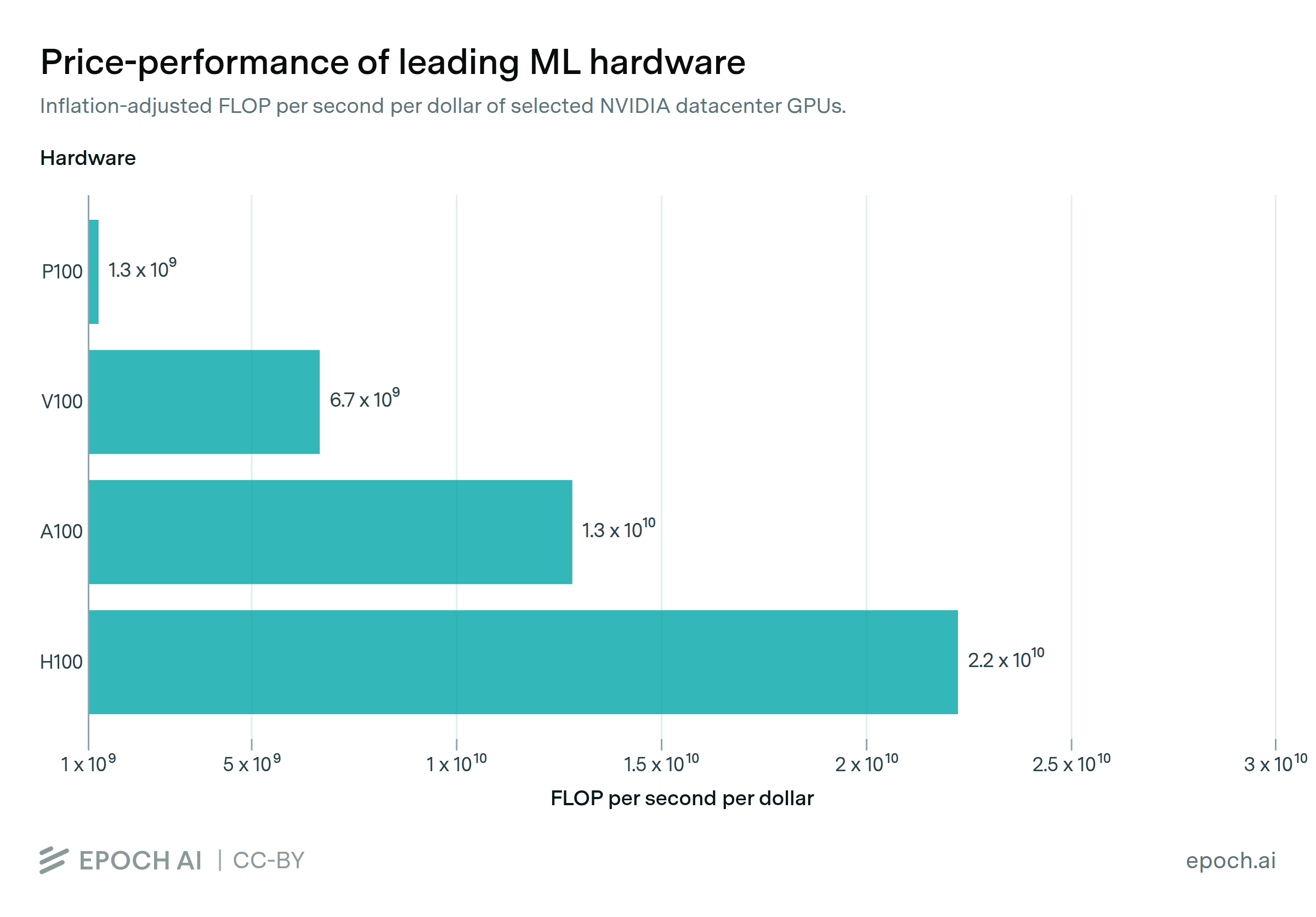

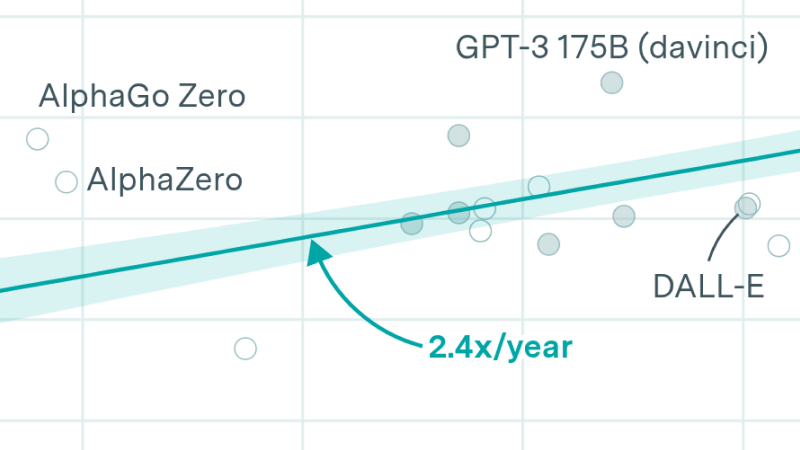

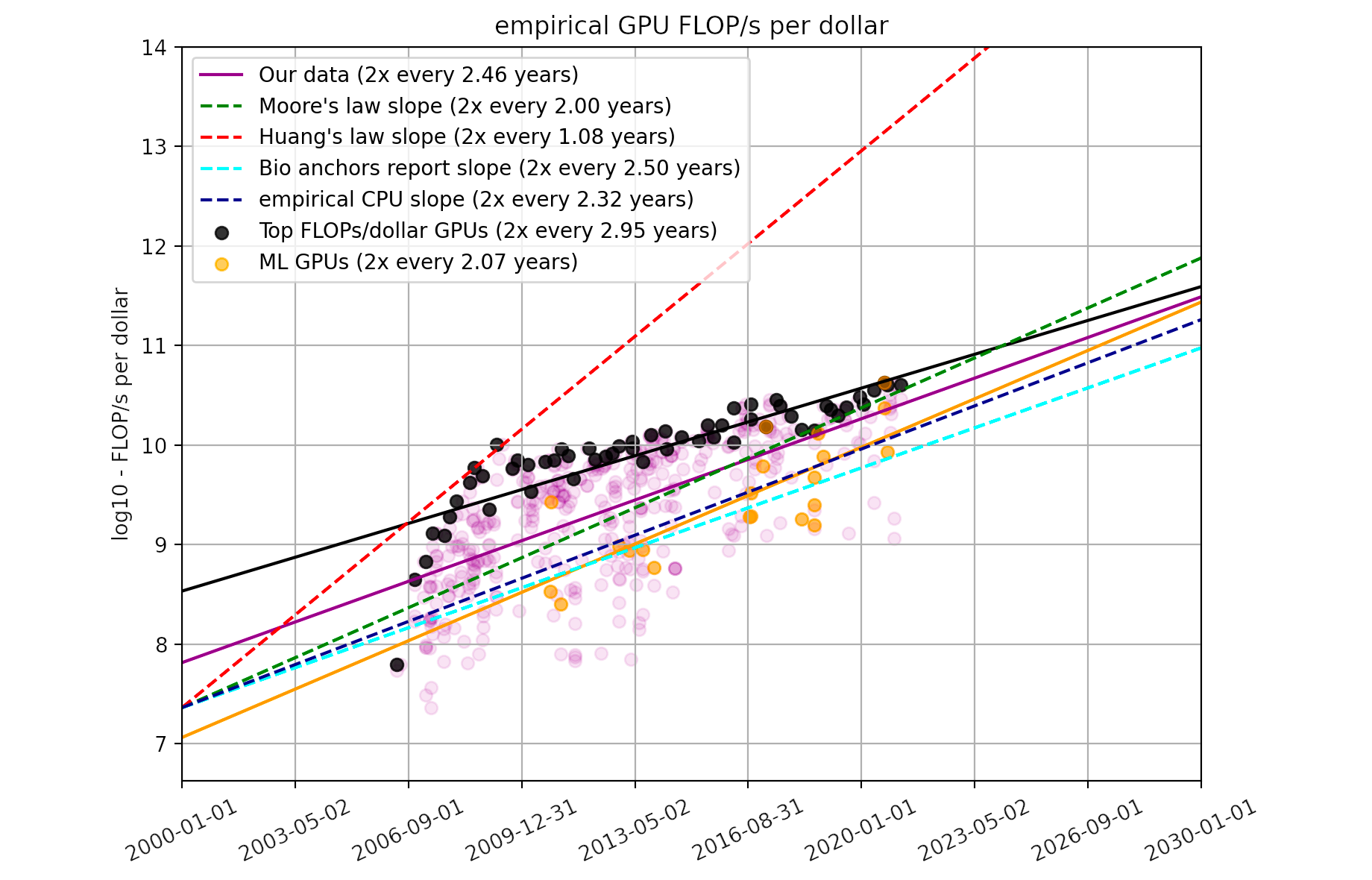

Using a dataset of 470 models of graphics processing units released between 2006 and 2021, we find that the number of floating-point operations per second per dollar doubles every ~2.5 years.
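As a rough illustration of what a ~2.5-year doubling time implies, the sketch below converts it into a yearly improvement factor and into a cumulative gain over a span of years. The function names are hypothetical, and the 15-year span is simply the 2006 to 2021 release window mentioned above, assuming the trend held steadily across it.

```python
def annual_growth_from_doubling(doubling_time_years: float) -> float:
    """Yearly multiplier implied by a given doubling time."""
    return 2.0 ** (1.0 / doubling_time_years)


def cumulative_growth(doubling_time_years: float, span_years: float) -> float:
    """Total multiplier accumulated over span_years at a fixed doubling time."""
    return 2.0 ** (span_years / doubling_time_years)


# ~2.5-year doubling time for FLOP/s per dollar, as quoted above.
yearly = annual_growth_from_doubling(2.5)
total = cumulative_growth(2.5, 15.0)  # 2006 to 2021, assuming a steady trend
print(f"{yearly:.2f}x per year, {total:.0f}x over 15 years")
```

A 2.5-year doubling time works out to about 32% improvement per year, which is much slower than the 2 to 3x yearly growth in training cost quoted earlier on this page.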