Featured

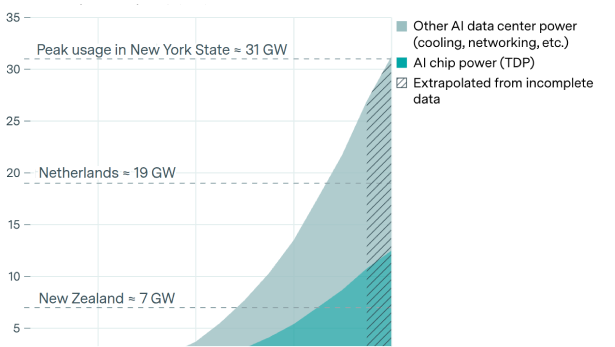

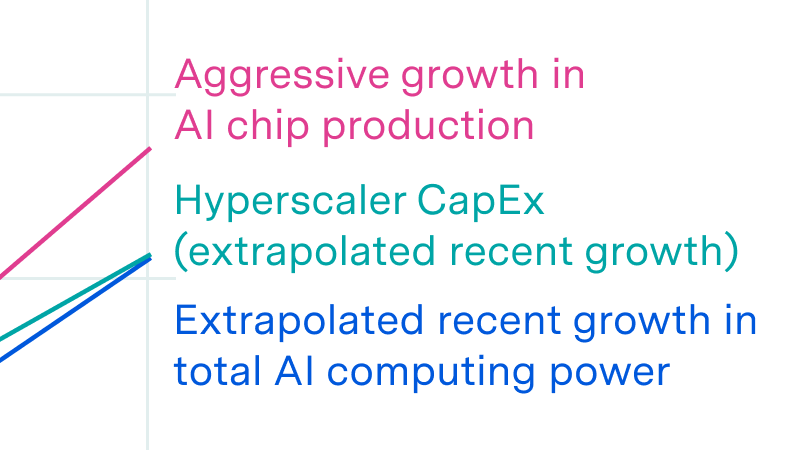

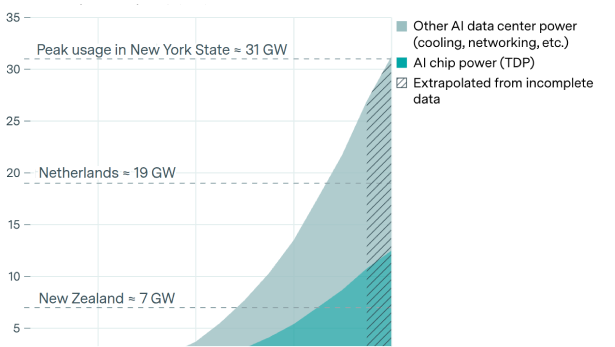

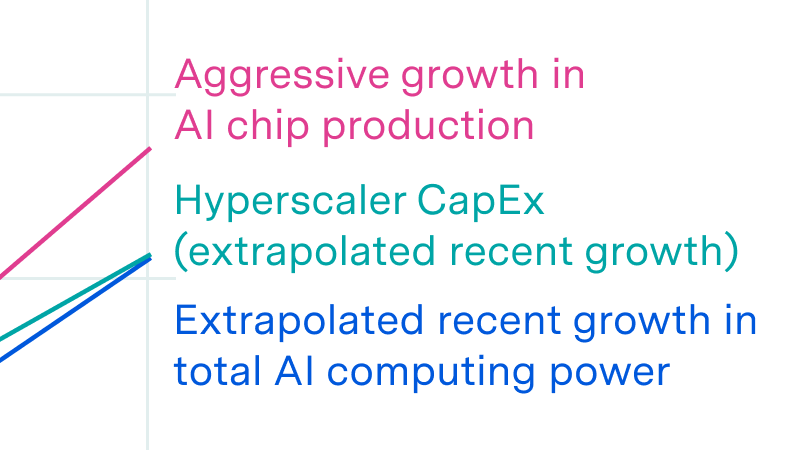

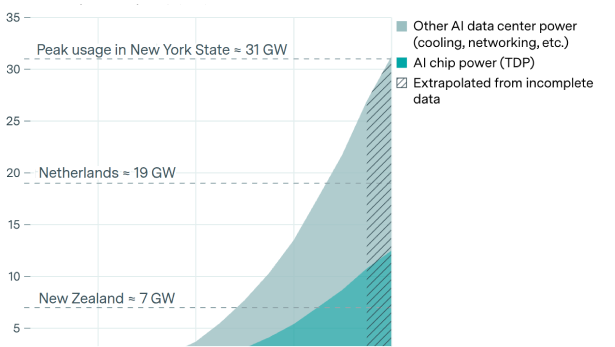

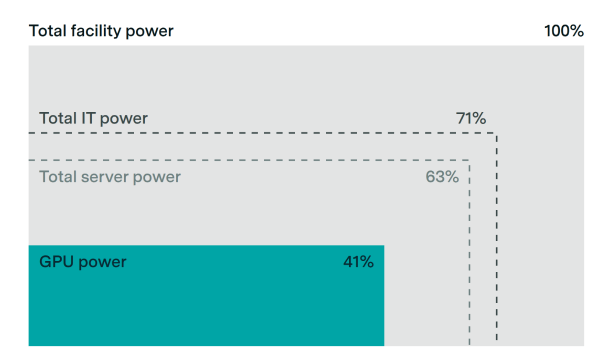

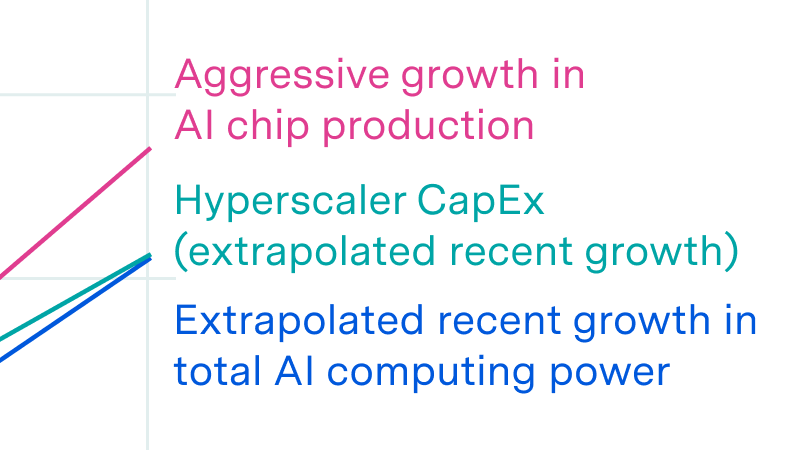

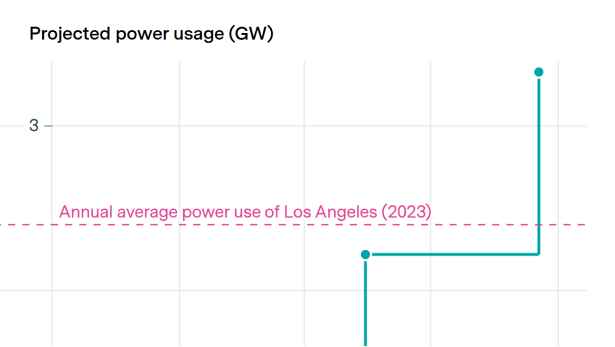

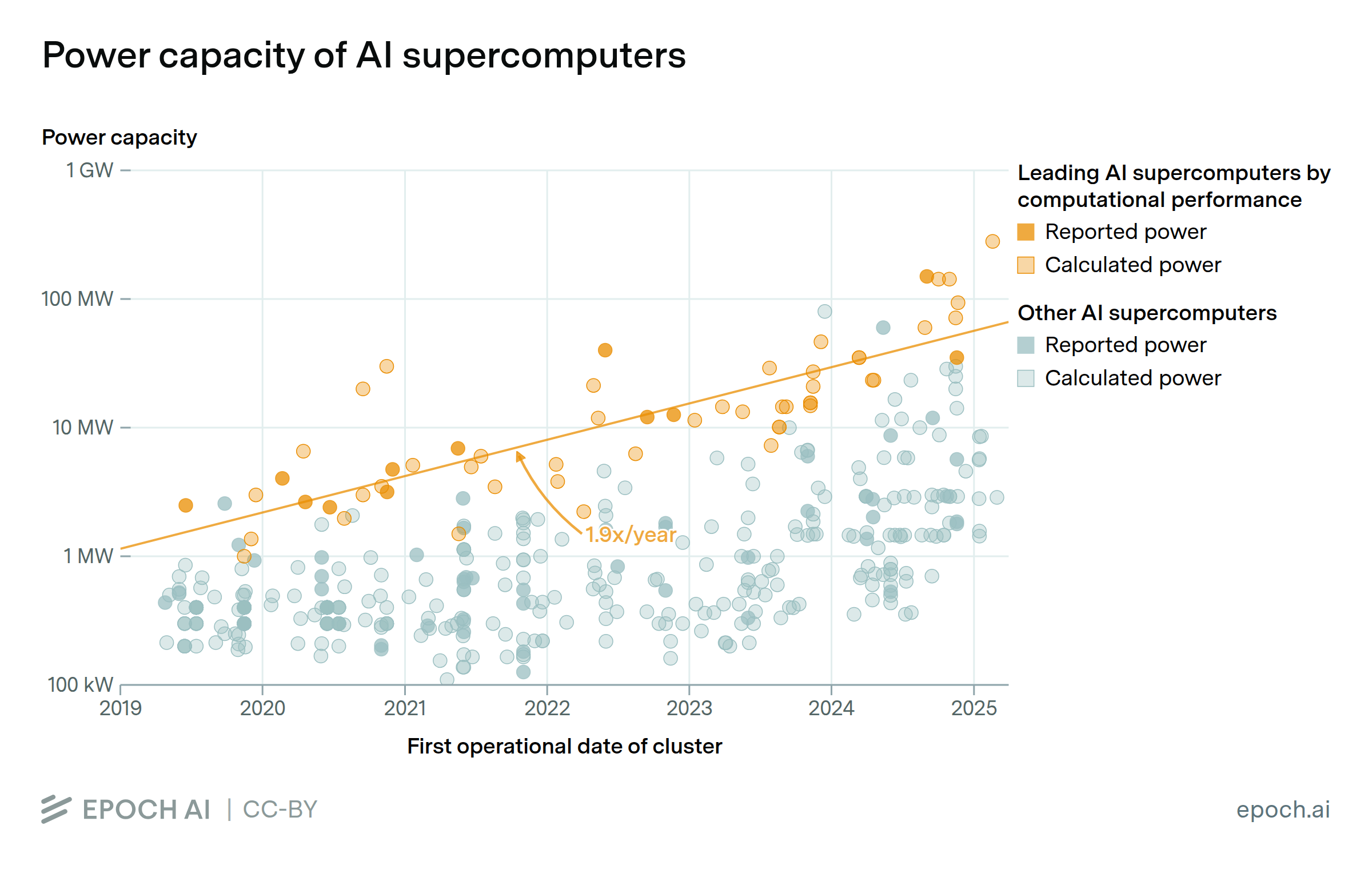

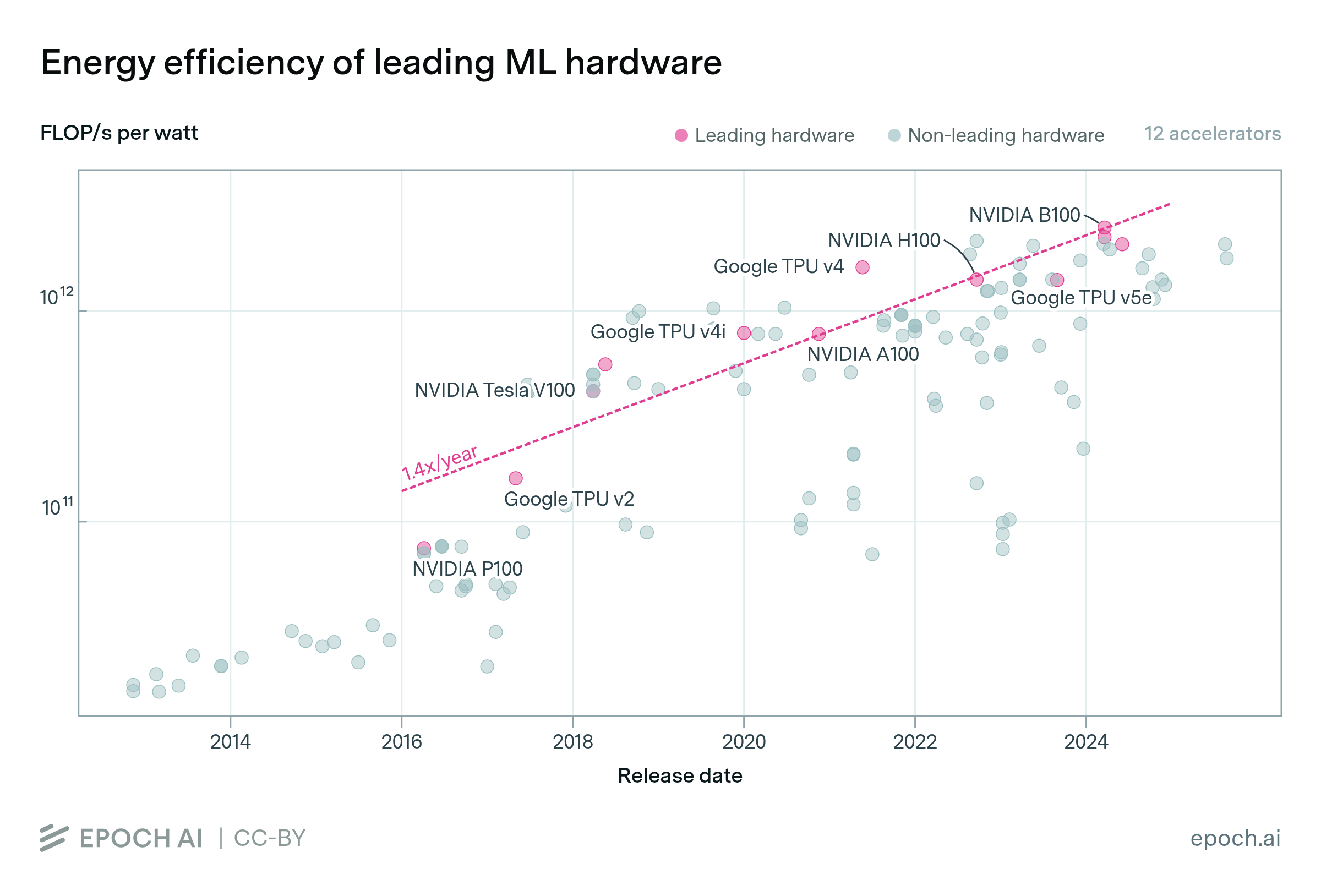

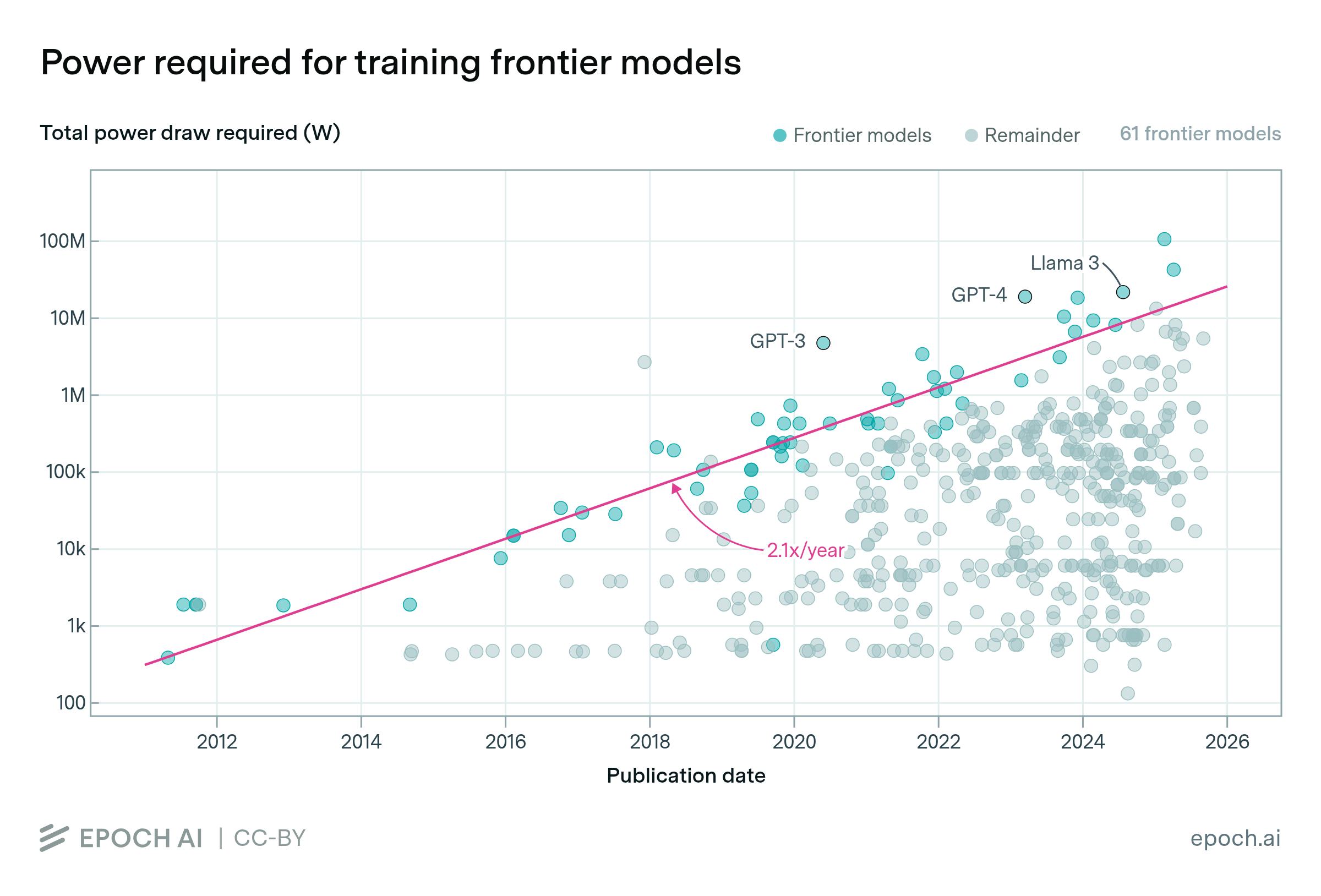

AI systems consume enormous and rapidly growing amounts of energy. As of 2025, the power required to train frontier models has been doubling annually, and new data centers are placing significant demands on power grids. The energy efficiency of AI hardware has been improving by roughly 40% each year, but the growth in compute demand has been outpacing it. Epoch tracks the power requirements of frontier AI, how efficiency gains compare to the growth in compute usage, and what the balance between energy demand and supply means for AI development.
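As a rough illustration of how these two rates interact, the sketch below assumes that training power equals compute divided by hardware energy efficiency. That relationship, the function names, and the normalized starting value are simplifying assumptions made here, not Epoch's model. Under that assumption, power doubling each year alongside a 40% annual efficiency gain implies compute growing by about 2.8x per year.

```python
# Minimal sketch, assuming power = compute / efficiency (an assumption,
# not a model taken from the source). Growth rates are yearly multipliers.

def implied_compute_growth(power_growth: float, efficiency_growth: float) -> float:
    """Yearly compute multiplier implied by power and efficiency multipliers."""
    return power_growth * efficiency_growth


def relative_power(years: int, power_growth: float) -> float:
    """Power after `years`, relative to a starting value normalized to 1."""
    return power_growth ** years


if __name__ == "__main__":
    power_growth = 2.0       # "doubling annually" (from the text)
    efficiency_growth = 1.4  # "roughly 40% each year" (from the text)

    compute_growth = implied_compute_growth(power_growth, efficiency_growth)
    print(f"Implied compute growth per year: {compute_growth:.2f}x")

    for years in (1, 3, 5):
        print(f"Relative power after {years} years: "
              f"{relative_power(years, power_growth):.0f}x")
```

The takeaway is only directional: as long as compute demand grows faster than efficiency improves, power requirements keep rising.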

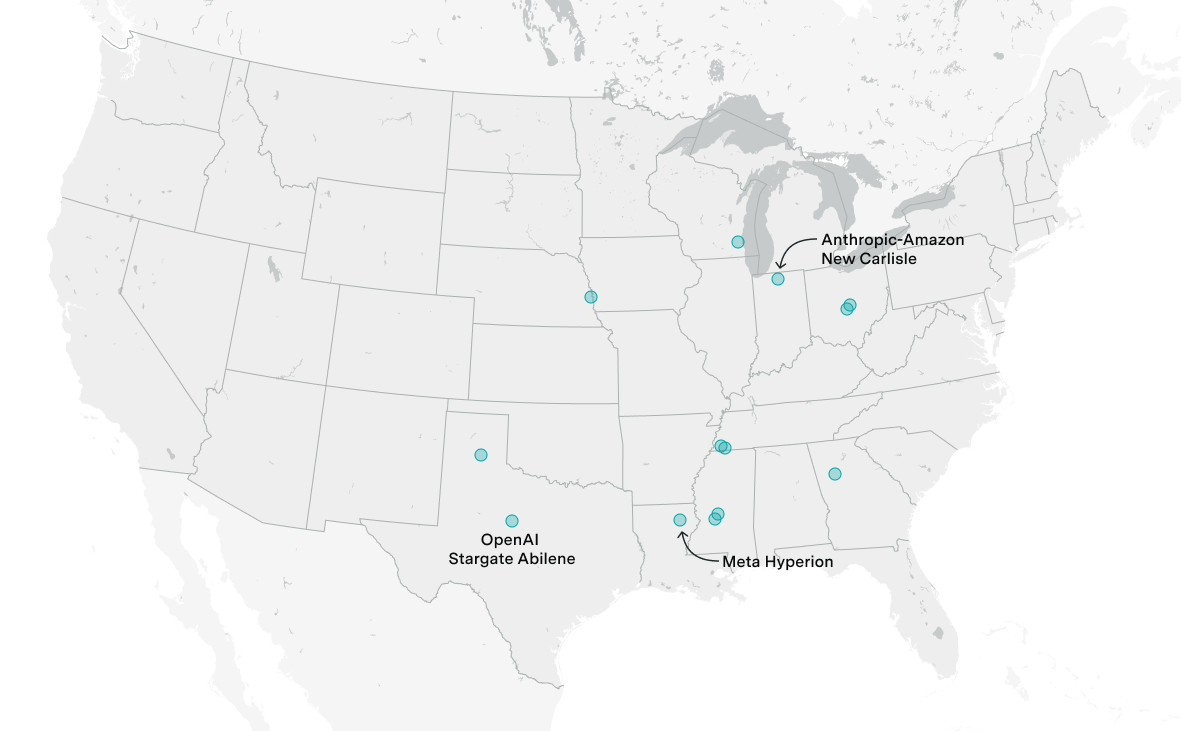

The $500 billion AI data center initiative is projected to exceed 9 gigawatts of capacity by 2029, with 0.3 gigawatts already operational in Abilene and six more US sites under active construction.

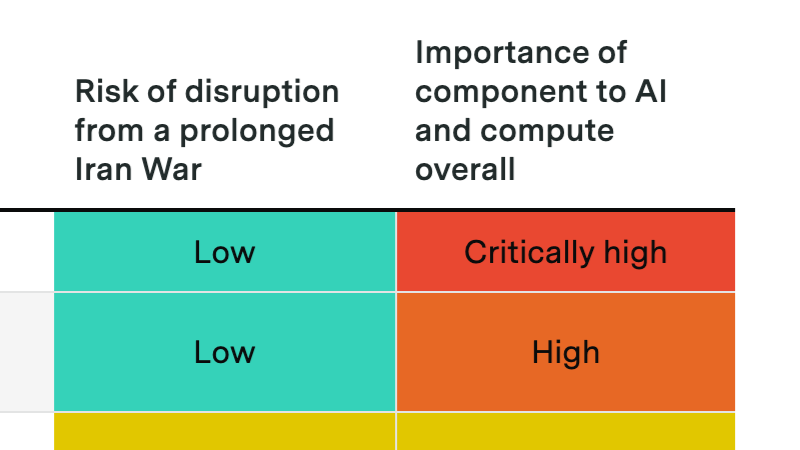

A prolonged Hormuz crisis probably won't derail the compute buildout, but it could slow data center expansion and disrupt Gulf investment flows into AI.

Why power is less of a bottleneck than you think.

AI companies are planning a buildout of data centers that will rank among the largest infrastructure projects in history. We examine their power demands, what makes AI data centers special, and what all this means for AI policy and the future of AI.

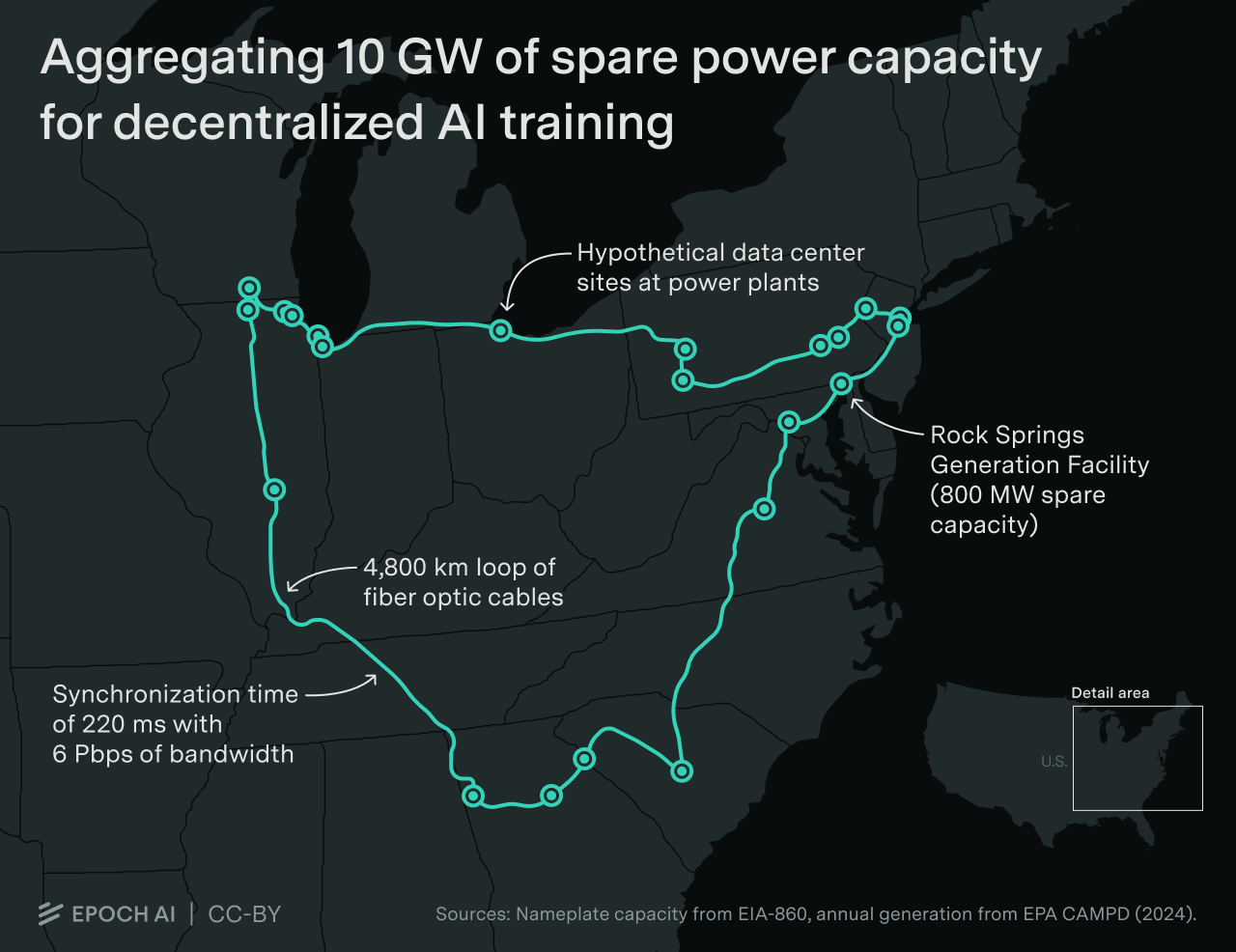

We illustrate a decentralized 10 GW training run across a dozen sites spanning thousands of kilometers. Developers are likely to scale datacenters to multi-gigawatt levels before adopting decentralized training.

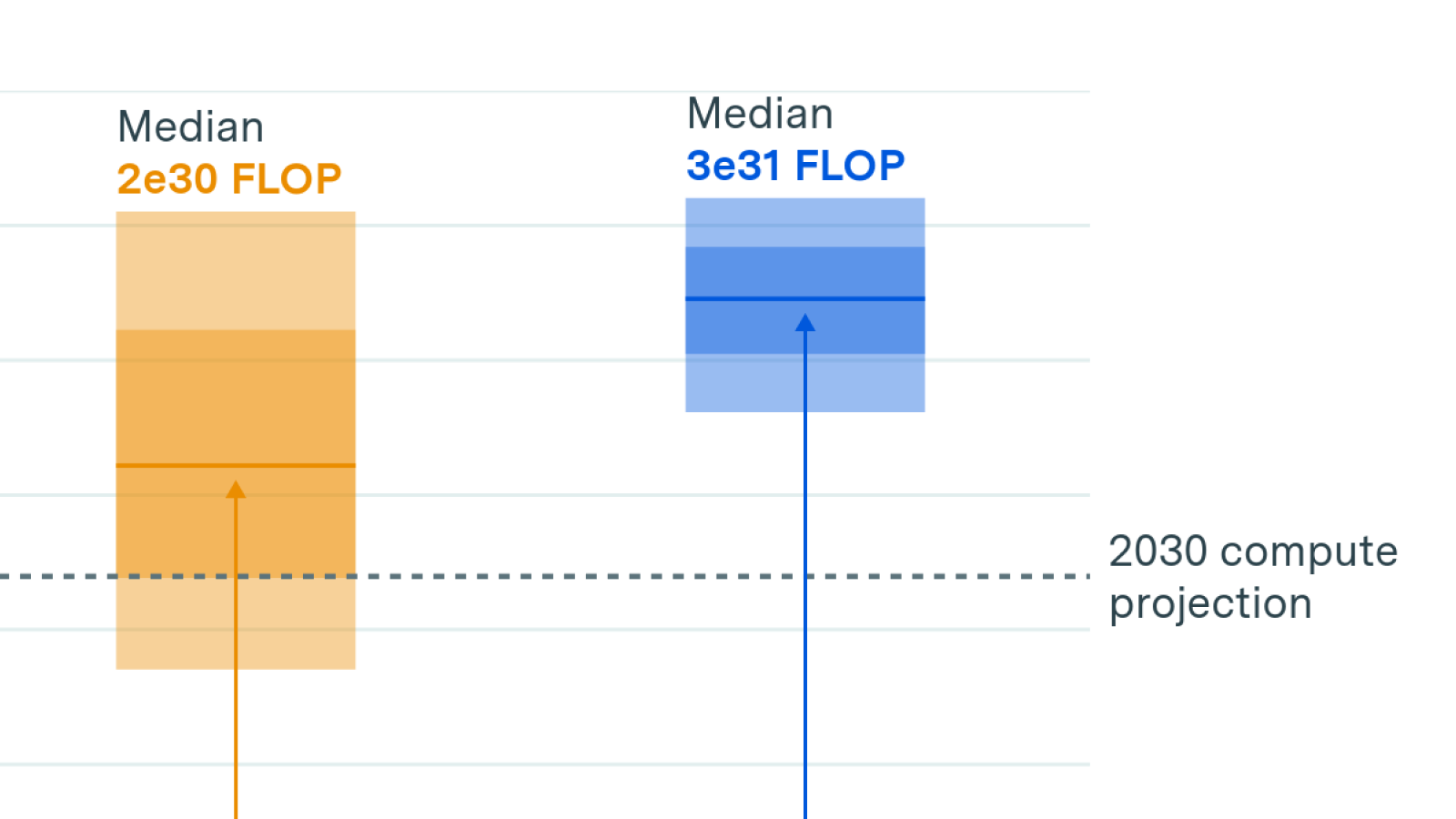

If scaling persists to 2030, AI investments will reach hundreds of billions of dollars and require gigawatts of power. Benchmarks suggest AI could improve productivity in valuable areas such as scientific R&D.

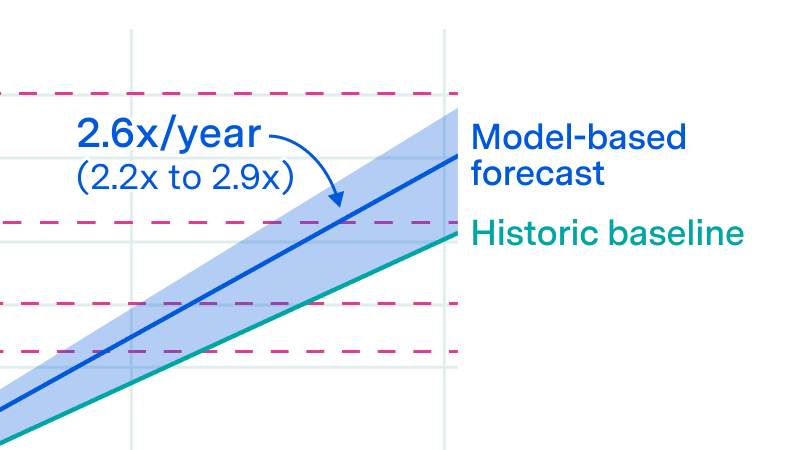

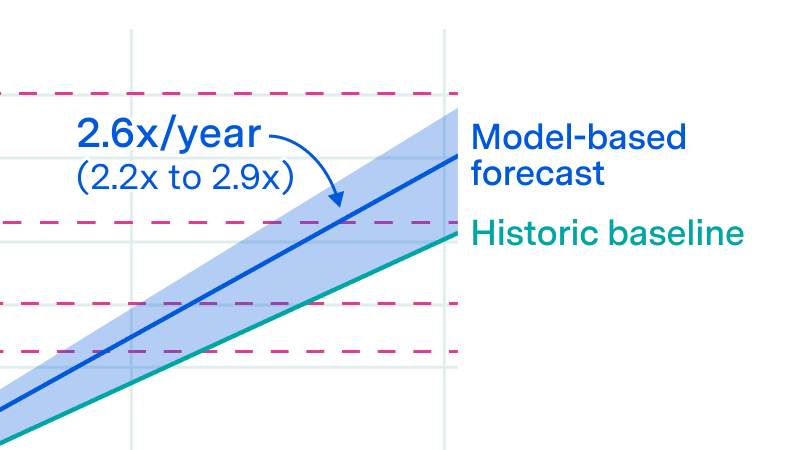

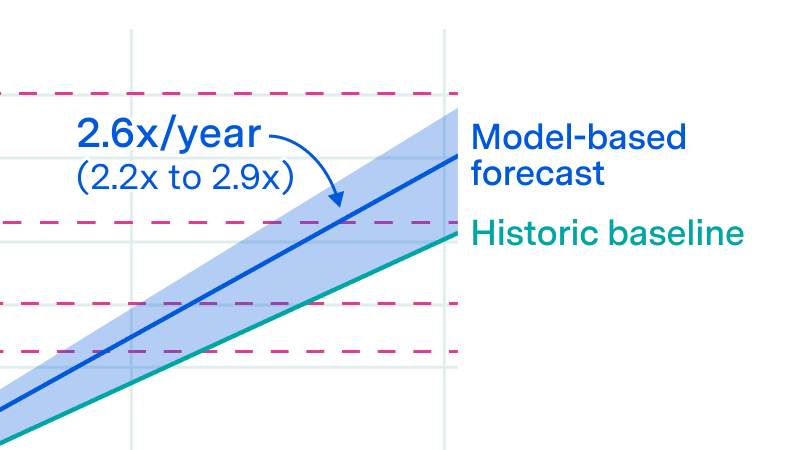

The power required to train the largest frontier models is growing by more than 2x per year, and is on trend to reach multiple gigawatts by 2030.

An AI Manhattan Project could accelerate compute scaling by two years.

This Gradient Updates issue explores how much energy ChatGPT uses per query, finding that it is 10x lower than common estimates.

We investigate the scalability of AI training runs. We identify electric power, chip manufacturing, data, and latency as the main constraints, and conclude that 2e29 FLOP training runs will likely be feasible by 2030.

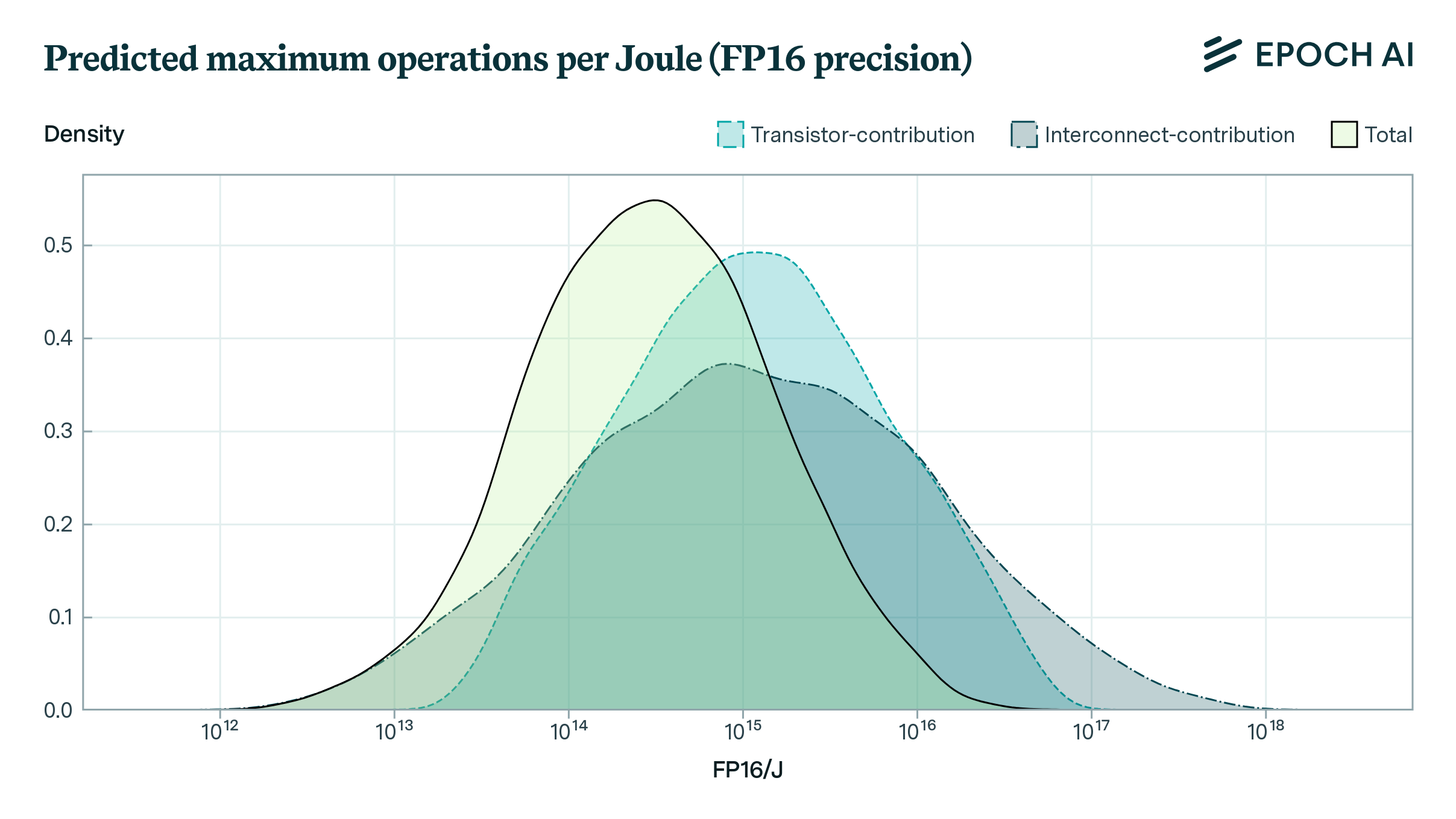

How far can the energy efficiency of CMOS microprocessors be pushed before we hit physical limits? Using a simple model, we find that there is room for a further 50 to 1000x improvement in energy efficiency.