Epoch's work is free to use, distribute, and reproduce provided the source and authors are credited under the Creative Commons BY license.

Learn more about this graph

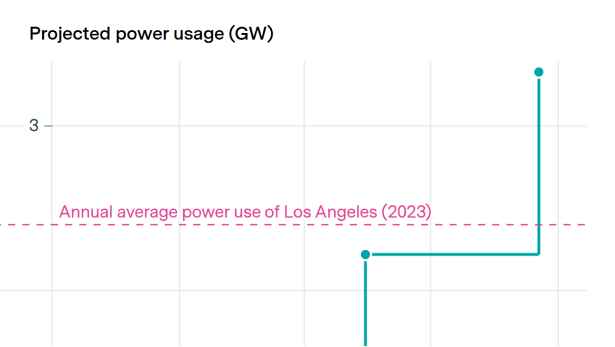

We combine training compute and training duration estimates for GPT-4 from our AI Models database with computational power estimates from our Frontier AI Data Centers database to calculate how many parallel GPT-4-sized runs could be completed in one month, using the largest data center at each date. To compare directly against GPT-4’s training setting, we calculate our numbers using 16-bit precision performance.
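As a rough illustration of this kind of calculation, the sketch below divides the 16-bit compute a data center could deliver in one month by the compute of a single GPT-4-sized run. It is one plausible reading of the description above, not the exact method behind the graph: the function name, the `utilization` parameter, the 30-day month, and all numeric inputs are hypothetical placeholders rather than values from either database, and the training duration estimate is not used in this simplified version.

```python
SECONDS_PER_DAY = 86_400


def gpt4_scale_runs_per_month(
    peak_flops_16bit: float,
    training_compute_flop: float,
    utilization: float = 1.0,
    days_per_month: float = 30.0,
) -> float:
    """Estimate how many GPT-4-sized runs fit into one month of data center time.

    peak_flops_16bit: peak 16-bit throughput of the data center, in FLOP/s.
    training_compute_flop: total compute of one GPT-4-sized run, in FLOP.
    utilization: assumed fraction of peak throughput actually achieved
        (a hypothetical parameter, not taken from the source).
    days_per_month: assumed length of a month in days.
    """
    if training_compute_flop <= 0:
        raise ValueError("training_compute_flop must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")

    # Total FLOP the data center can deliver over the month.
    usable_flop = peak_flops_16bit * utilization * days_per_month * SECONDS_PER_DAY
    # Number of runs of the given size that this budget covers.
    return usable_flop / training_compute_flop


if __name__ == "__main__":
    # Placeholder inputs chosen only to exercise the function.
    runs = gpt4_scale_runs_per_month(
        peak_flops_16bit=1e19,
        training_compute_flop=2e25,
        utilization=0.4,
    )
    print(f"GPT-4-sized runs per month: {runs:.2f}")
```

Under this reading, a value above 1 means the data center could finish more than one GPT-4-sized run within the month, and a value below 1 means a single run would take longer than a month.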

You can learn more about how we estimate current and future data center specs on our Frontier AI Data Centers documentation page.

Explore this data

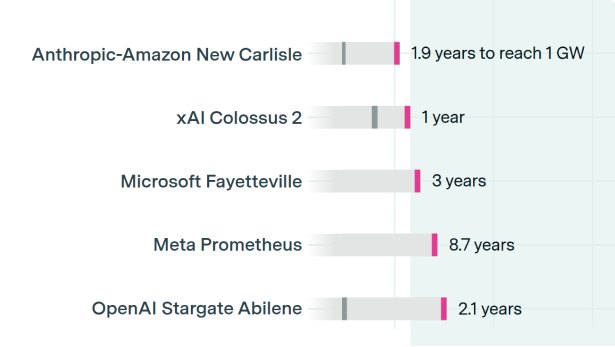

An open database of AI data centers that uses satellite and permit data to show compute, power use, and construction timelines.