One of the biggest factors shaping AI progress today is access to a very specific kind of computer chip, manufactured almost entirely by a single company in Taiwan. These specialized AI chips, also sometimes called AI accelerators, power every frontier AI product, from chatbots to image generators, and are the most important physical input to the training and deployment of AI systems. Some prominent examples of AI chips are Nvidia’s Blackwell and Hopper GPUs (named for the graphics chips they descend from), Google’s TPU, and Amazon’s Trainium series.

An Nvidia Blackwell GPU. Credit: Nvidia.

Who manufactures AI chips, who can buy them, and whether there is enough electricity to power them at scale — these questions are shaping which companies can build the most capable AI, which countries can support an AI industry, and how fast the technology advances.

Why AI companies want more chips than they can get

When a company wants to train a new AI model, one of the most important things it needs is computing power: the capacity to do vast amounts of number crunching at extreme speed. Developing and deploying AI requires chips that can do this faster, cheaper, and at a larger scale than ordinary computer processors. That computing power, commonly known as “compute”, comes from thousands of individual AI chips working together in specialized facilities called AI data centers. These chips are so central to continued AI progress that leaders at most major AI companies have publicly said they want to buy more chips than they can get.

The main reason supply hasn’t kept pace is that AI chip production is complicated, depends on multiple interdependent steps, and scales only as fast as the slowest link. The supply chain starts with design. A few companies create blueprints for AI chips; Nvidia is the best-known, but Google, Amazon, AMD, and Huawei also design their own. But most of these companies don’t build the chips themselves. Chip fabrication pushes against the physical limits of how small things can be made, and requires working at a scale approaching that of individual atoms. Instead of trying to replicate the necessary know-how, companies send their designs to Taiwan Semiconductor Manufacturing Company (TSMC), which operates the fabrication plants where chips are physically produced.

TSMC is by far the world leader in fabricating AI chips (alongside many other types of chips, such as smartphone chips), in terms of both quantity and quality. This means that nearly the entire global AI chip supply, regardless of who designed it or who ultimately uses it, flows through this one company. Most of TSMC’s facilities are located in Taiwan, though TSMC is investing heavily in multiple fabs in Arizona, as well as in Japan and Germany.

An AI chip is made up of multiple parts. The most expensive component is its high-bandwidth memory (HBM), a specialized, fast type of memory. HBM is made mostly by three companies (Samsung, SK Hynix, and Micron) and then shipped to TSMC, which combines it with the rest of the chip into the final package. As of late 2025, HBM was perhaps the most binding constraint on AI chip production. In the words of Micron’s executive vice president (December 2025): “This is the most significant disconnect between demand and supply … that we’ve experienced in my 25 years in the industry.”

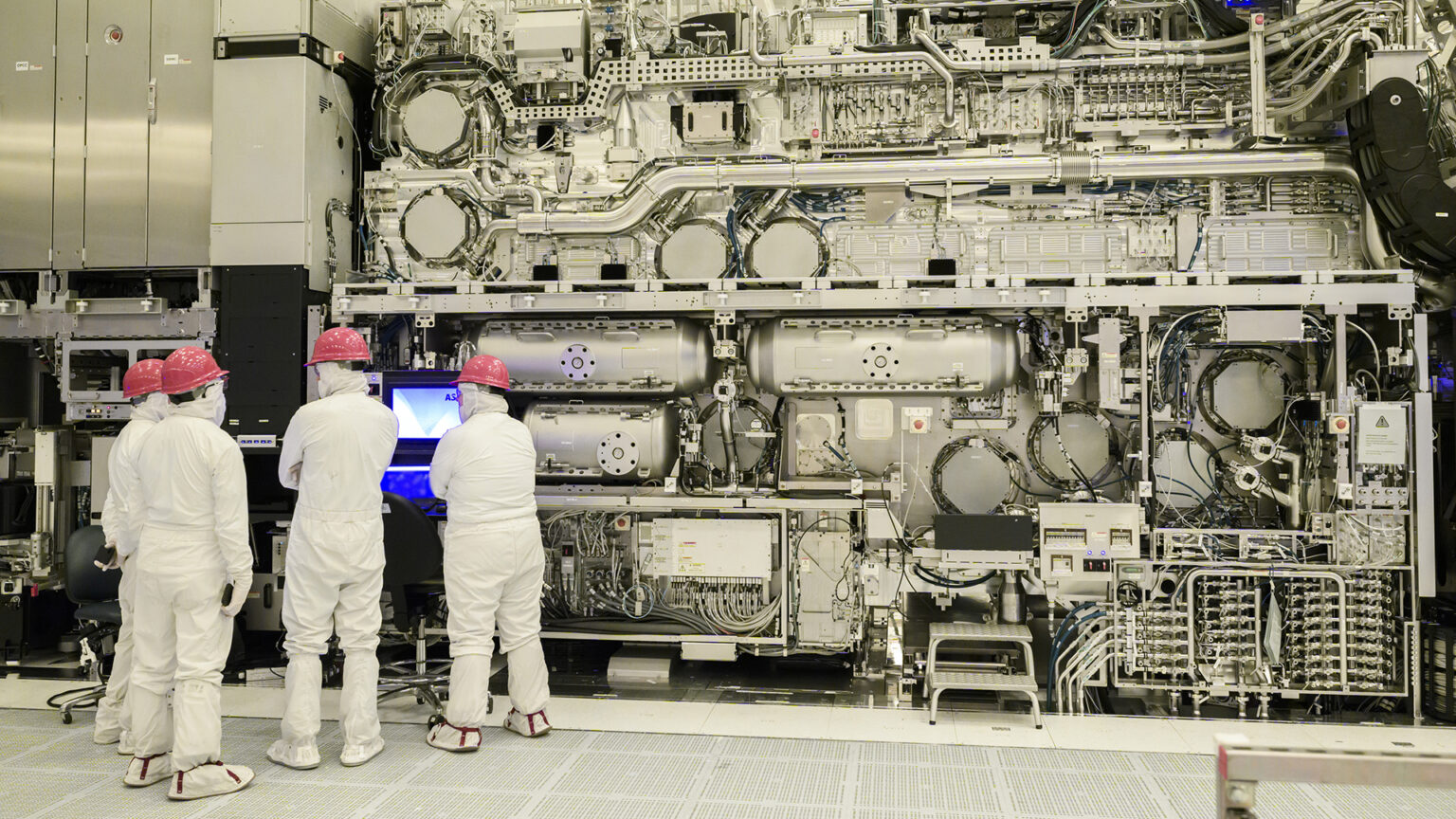

TSMC and the memory companies, in turn, depend on specialized semiconductor manufacturing equipment. The most complex piece of equipment is the extreme ultraviolet (EUV) lithography machine, which patterns features smaller than a virus onto silicon. Just one company makes them: ASML, in the Netherlands.

A 165-ton ASML-built EUV tool installed in a clean room at an Intel Corporation facility. Credit: Intel Corporation.

Chip production growth depends on all of these players expanding capacity in step. When they don’t, the effects ripple far beyond AI. The same companies that supply memory for AI chips also supply memory for smartphones, cars, and gaming consoles. In 2025, outsized demand for AI chips drove up prices across all of these industries.

Despite this intricate chain, production has been growing rapidly. By the fourth quarter of 2025, the five largest chip designers had cumulatively shipped roughly 20 million AI chips. Total AI computing capacity has been doubling approximately every seven months, driven by both rising manufacturing volumes and each new generation of chip being substantially more powerful than the last.
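To make the seven-month doubling time more concrete, here is a minimal Python sketch that converts it into a growth multiplier over other time spans. It assumes the doubling time stays fixed, which is a simplification, and the function name `growth_factor` is purely illustrative.

```python
def growth_factor(months_elapsed: float, doubling_months: float = 7.0) -> float:
    """Capacity multiplier after `months_elapsed`, assuming a constant doubling time.

    The 7-month default is the approximate figure quoted in the text;
    holding it constant over time is an assumption of this sketch.
    """
    return 2.0 ** (months_elapsed / doubling_months)


if __name__ == "__main__":
    for months in (7, 12, 24):
        print(f"after {months:>2} months: {growth_factor(months):.1f}x")
```

Under that assumption, a seven-month doubling time works out to roughly a 3.3x increase in capacity per year.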

Cumulative AI computing capacity by chip designer, measured in H100-equivalent GPUs. Nvidia accounts for a majority of total capacity. Explore the AI Chip Sales data.

By the fourth quarter of 2025, Nvidia accounted for roughly half of all chips sold by unit count and over 66% of total AI computing capacity in use worldwide. Google shipped the second-largest volume, primarily its own TPU chips for use in its own data centers. Amazon, AMD, and Huawei each represent smaller but growing shares. Huawei’s Ascend series, developed through a separate manufacturing chain after US export controls restricted its access to TSMC, accounted for roughly 6% of chips in 2025.

The high demand also means that these chips are expensive, and getting more so with each generation. But the price tag on the box turns out to be the wrong number to pay attention to.

AI chips get more cost-effective every year

Nvidia’s flagship AI chips have gotten more expensive with each generation, rising from $5,700 in 2016 to $34,000 in 2022. Each chip costs as much as a new car, and frontier AI projects require tens to hundreds of thousands of them. Computing hardware is the single largest expense in AI development. Across leading AI companies where breakdowns are available, the chips and computing time to run them account for 54% to 62% of total spending.

Chip prices have risen sharply with each new generation. But price alone is misleading — each generation also delivers substantially more computation per dollar. Explore the Machine Learning Hardware data.

But rising prices haven’t stopped companies from buying hundreds of thousands of chips. What matters isn’t the cost per chip, but the cost per unit of useful work that chip performs. A company deciding whether to buy the latest generation is asking: how many AI queries can this chip handle, how many training runs can it support, and how much time will it cut from training a model?

The standard measure of cost-effectiveness is compute per dollar. Chip performance is measured in FLOP/s (floating-point operations per second), where each operation is a single arithmetic operation, such as multiplication or addition. Training a large AI model can require performing trillions of these every second, for weeks. On that measure, the trend has been dramatically positive. For example, the Nvidia H100 in 2022 cost about six times as much as the P100 did in 2016, but delivers about seventeen times as much computation per dollar. From the buyer’s perspective, the more expensive chip is actually far cheaper for the work it does.
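The arithmetic behind that comparison can be sketched in a few lines of Python. The prices and the seventeen-fold figure come from the text. The raw performance ratio is not stated there, so the value computed below is only what those quoted figures imply, and the function names are illustrative.

```python
P100_PRICE_USD = 5_700          # 2016 flagship price quoted in the text
H100_PRICE_USD = 34_000         # 2022 flagship price quoted in the text
COMPUTE_PER_DOLLAR_GAIN = 17.0  # H100 vs. P100, as quoted in the text


def price_ratio(old_price: float, new_price: float) -> float:
    """How many times more the newer chip costs."""
    return new_price / old_price


def implied_performance_ratio(old_price: float, new_price: float,
                              per_dollar_gain: float) -> float:
    """Raw performance gain implied by a price ratio and a compute-per-dollar gain.

    compute per dollar = performance / price, so
    performance ratio  = per-dollar gain * price ratio.
    """
    return per_dollar_gain * price_ratio(old_price, new_price)


if __name__ == "__main__":
    ratio = price_ratio(P100_PRICE_USD, H100_PRICE_USD)
    perf = implied_performance_ratio(P100_PRICE_USD, H100_PRICE_USD,
                                     COMPUTE_PER_DOLLAR_GAIN)
    print(f"price ratio: {ratio:.1f}x, implied performance ratio: {perf:.0f}x")
```

In other words, a chip that costs about six times as much has to deliver roughly a hundred times the raw performance to come out seventeen times better per dollar.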

Raw machine learning performance per chip has grown at roughly 1.6x per year since 2015. Because performance has risen faster than price over the same period, computation per dollar has improved with each generation. Explore the Machine Learning Hardware data.

The main forces driving AI chip cost-effectiveness are improvements to AI chip architectures, as well as higher transistor density, famously described by Moore’s Law. Across chip generations, performance per dollar has roughly doubled every 2.5 years since the early 2010s, and newer chips like Nvidia’s Blackwell family continue this pattern.

An additional factor improving cost-effectiveness is reduced arithmetic precision. AI developers have figured out how to make AI computations work well with less precise numbers, and chips have been designed to support lower precision at higher speeds. As a rule of thumb, halving the precision of the arithmetic roughly doubles a chip’s throughput. This has contributed meaningful additional gains over the past decade, but it is a secondary factor: AI developers halve their precision at a slower pace than hardware architecture improves. And by 2025, most of the easy gains from reducing precision appear to have been captured; common number formats for AI have shrunk from 32 bits of precision to 8 or 4 bits today, with little or no room to shrink further.
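As a rough illustration of the rule of thumb above, the following Python sketch counts the halvings between two bit widths and the throughput multiplier they would imply. It treats the "halving doubles throughput" rule as exact, which real hardware only approximates, and the function names are illustrative.

```python
import math


def precision_halvings(start_bits: int, end_bits: int) -> float:
    """Number of times the bit width is halved going from start_bits to end_bits."""
    return math.log2(start_bits / end_bits)


def throughput_multiplier(start_bits: int, end_bits: int) -> float:
    """Idealized speedup, assuming each halving of precision doubles throughput."""
    return 2.0 ** precision_halvings(start_bits, end_bits)


if __name__ == "__main__":
    for end_bits in (16, 8, 4):
        print(f"32-bit -> {end_bits}-bit: "
              f"{precision_halvings(32, end_bits):.0f} halvings, "
              f"~{throughput_multiplier(32, end_bits):.0f}x throughput")
```

Going from 32 bits to 4 bits is only three halvings, so under this idealized rule the whole shift is worth about 8x. That is one way to see why it counts as a one-time, secondary contribution.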

Electricity efficiency is increasing, but so is total consumption

AI chips require electricity to run. A single chip at full load draws around 1,000 watts, about as much as a countertop microwave running nonstop. One microwave is nothing, but millions of them running 24 hours a day is a different story. By late 2025, total AI data center power capacity had reached tens of gigawatts, which puts AI’s power demand at a scale comparable to the peak electricity demand of the state of New York. Each new generation of chip delivers more computation per dollar, but every chip still needs to be plugged in, cooled, and kept running. If the electrical grid cannot supply enough power, or if new power generation cannot be built fast enough, the expensive chips just sit in warehouses.
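A back-of-the-envelope Python sketch shows how per-chip draw adds up to gigawatts. The 1,000-watt figure is the one quoted above. The fleet sizes and the `overhead` factor (a stand-in for cooling and other facility load) are hypothetical inputs chosen for illustration, not figures from the article.

```python
WATTS_PER_CHIP = 1_000  # approximate full-load draw quoted in the text


def fleet_power_gw(num_chips: int,
                   watts_per_chip: float = WATTS_PER_CHIP,
                   overhead: float = 1.0) -> float:
    """Total power draw in gigawatts for `num_chips` running at full load.

    `overhead` is a hypothetical multiplier for non-chip load such as cooling;
    the default of 1.0 counts the chips alone.
    """
    return num_chips * watts_per_chip * overhead / 1e9


if __name__ == "__main__":
    for chips in (1_000_000, 10_000_000):  # hypothetical fleet sizes
        print(f"{chips:,} chips -> {fleet_power_gw(chips):.0f} GW (chips only)")
```

At this draw, every million chips running at full load accounts for about one gigawatt before any facility overhead is added.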

At the individual chip level, each new generation of AI chip does more useful work per watt of electricity consumed. Leading chips have been getting roughly 40% more energy-efficient each year, doubling in efficiency approximately every 2.7 years. Nvidia’s B100, released in 2024, does roughly three times as much computation per watt as the A100 from 2020.

Machine learning performance versus power draw of AI accelerators. Chips further to the right are more energy-efficient. Chips higher up are more powerful overall. Newer generations tend to be both. Explore the Machine Learning Hardware data.

But at the same time, total power consumption is rising, not falling, because the number of chips being installed is growing faster than efficiency gains can offset. Energy efficiency (computation per watt) and cost-effectiveness (computation per dollar) have been improving at roughly the same rate, so the two trends cancel out: each dollar spent on AI chips buys roughly the same number of watts of power draw as before. As a result, if cumulative spending on AI hardware continues to grow, total energy consumption will also grow over time.
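The cancellation described above follows from a simple identity: watts per dollar equals computation per dollar divided by computation per watt. The Python sketch below illustrates it with arbitrary starting units and a hypothetical annual improvement rate applied to both quantities. None of the numbers are measured values.

```python
def watts_per_dollar(compute_per_dollar: float, compute_per_watt: float) -> float:
    """Watts of power draw bought per dollar of hardware spending.

    (compute / dollar) / (compute / watt) = watt / dollar
    """
    return compute_per_dollar / compute_per_watt


def simulate(years: int, annual_gain: float = 1.3,
             compute_per_dollar_0: float = 1.0,
             compute_per_watt_0: float = 1.0) -> list[float]:
    """Watts per dollar for each year when both metrics improve at the same rate.

    `annual_gain` and the starting values are hypothetical, in arbitrary units.
    """
    return [
        watts_per_dollar(compute_per_dollar_0 * annual_gain ** t,
                         compute_per_watt_0 * annual_gain ** t)
        for t in range(years + 1)
    ]


if __name__ == "__main__":
    print([round(v, 3) for v in simulate(5)])
```

Because the same growth factor appears in the numerator and the denominator, the ratio stays flat. Total power draw then tracks cumulative hardware spending rather than falling as efficiency improves.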

AI chips sit at the center of progress in AI

How capable AI systems become, how fast they improve, which companies lead and which fall behind, and which countries can build an AI industry at all: these questions all run through the same physical input, a specific type of chip, fabricated at the leading edge by a handful of companies, in quantities that have not kept pace with demand. The volume of chips being produced, what each costs per unit of computation, and how much electricity they consume in aggregate determine whether AI can scale to the hundred-billion-dollar, gigawatt-scale training runs projected for 2030.

To learn more about the data centers where these chips are housed and the infrastructure challenges they create, read our report on what you need to know about AI data centers. To see more data and information on AI chips, you can visit the AI Chip Sales and the Machine Learning Hardware data explorers.