
Our estimates of how much advanced logic wafer capacity, CoWoS packaging, and HBM memory leading AI chip designers consumed.

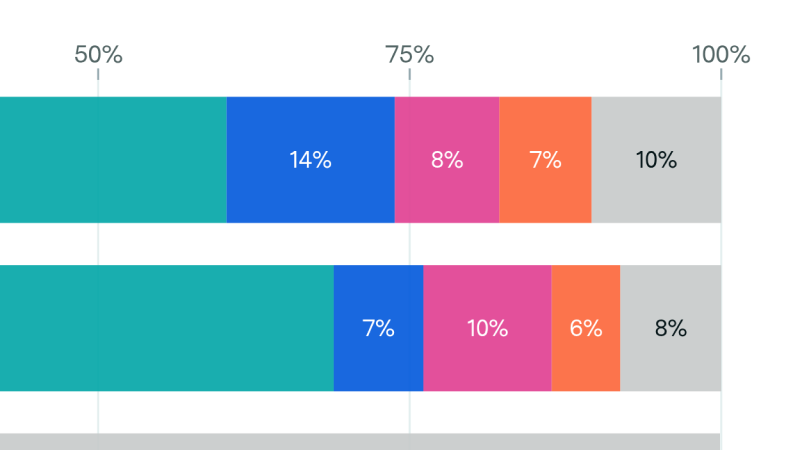

Our AI Chip Components explorer estimates what share of global advanced semiconductor manufacturing capacity (advanced-node logic wafers, CoWoS packaging, and high-bandwidth memory, or HBM) was consumed each quarter by the leading AI chip designers (NVIDIA, AMD, Google, and Amazon) from Q1 2024 through Q4 2025.

The model works in three steps. First, it estimates quarterly chip production by designer and chip type, starting from our AI Chip Sales hub and adjusting for manufacturing lags, inventory, and export-control write-downs. Second, it translates those chip volumes into component demand using per-chip bill-of-materials specifications and yield assumptions. Third, it compares each designer’s demand against our estimates of total quarterly component supply.
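The second and third steps can be sketched roughly as follows. This is a minimal illustration, not the model itself: the `ChipSpec` fields, the function names, the one-logic-die-per-package simplification, and every number are hypothetical placeholders, and manufacturing lags and inventory adjustments are ignored.

```python
from dataclasses import dataclass


@dataclass
class ChipSpec:
    """Hypothetical per-chip bill of materials and yields (illustrative only)."""
    gross_dies_per_wafer: float      # logic dies patterned on one wafer
    die_yield: float                 # fraction of logic dies that are good
    packages_per_cowos_wafer: float  # packages assembled per CoWoS wafer
    packaging_yield: float           # fraction of packages that pass test
    hbm_gb_per_chip: float           # HBM capacity per package, in GB


def component_demand(good_chips: float, spec: ChipSpec) -> dict:
    """Translate finished-chip volume into component demand."""
    # More packages must be started than good chips come out the other end.
    package_starts = good_chips / spec.packaging_yield
    # Assumption: one logic die per package, and failed packages still
    # consume their logic die, CoWoS area, and HBM.
    good_dies_per_wafer = spec.gross_dies_per_wafer * spec.die_yield
    return {
        "logic_wafers": package_starts / good_dies_per_wafer,
        "cowos_wafers": package_starts / spec.packages_per_cowos_wafer,
        "hbm_gb": package_starts * spec.hbm_gb_per_chip,
    }


def share_of_supply(demand: dict, supply: dict) -> dict:
    """Compare demand with total supply, component by component."""
    return {name: demand[name] / supply[name] for name in demand}


# Placeholder inputs chosen for easy arithmetic, not real estimates.
spec = ChipSpec(gross_dies_per_wafer=50, die_yield=0.8,
                packages_per_cowos_wafer=20, packaging_yield=0.9,
                hbm_gb_per_chip=100)
demand = component_demand(good_chips=900, spec=spec)
supply = {"logic_wafers": 100, "cowos_wafers": 200, "hbm_gb": 400_000}
shares = share_of_supply(demand, supply)
```

Dividing by yield before converting to wafers is the key design choice here: demand is counted in units started, not units that survive, because scrapped units still consumed capacity.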

Epoch AI’s data is free to use, distribute, and reproduce provided the source and authors are credited under the Creative Commons Attribution license.

The AI Chip Components explorer shows Epoch AI’s estimates of how much of three critical semiconductor supply chain components — advanced logic wafers, CoWoS advanced packaging, and HBM memory — was consumed by the leading AI chip designers in each quarter from Q1 2024 through Q4 2025.

The three components tracked are advanced logic wafers, CoWoS advanced packaging, and HBM. They are the three principal bottlenecks constraining AI chip production, and all three have been in tight supply relative to demand since 2023.

TSMC holds the vast majority of global manufacturing capacity at 3 nm and 5 nm, and it also controls essentially all CoWoS capacity. HBM is produced by a small number of memory manufacturers (SK Hynix, Samsung, and Micron). Because these are the binding physical constraints, understanding AI’s share of each gives insight into how much of the world’s most advanced semiconductor manufacturing is being absorbed by AI chip production.

We cover the four largest AI chip designers by supply chain consumption: NVIDIA, AMD, Google (TPUs), and Amazon (Trainium). Together these account for the large majority of CoWoS and HBM consumption and a substantial share of advanced logic wafer consumption.

Other chip designers, including Meta, Microsoft, Tesla, Groq, SambaNova, Huawei, and Cambricon, are not currently tracked. Adding these designers is a possible direction for future work. Each table also includes an “Other” row representing unattributed global supply: the residual after subtracting tracked designer demand from total global supply for that quarter.

Each row represents total supply chain consumption attributable to a chip type in a given quarter, regardless of whether the underlying units were shipped to customers, sit in finished-goods inventory, or are still being built (work-in-process). For example, Blackwell chips in WIP at end-Q3 2025 consumed logic wafers (fab complete) but not yet CoWoS wafers or HBM (packaging incomplete); that consumption appears as logic > 0 and CoWoS = HBM = 0 for that quarter.
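The stage-dependent accounting in the Blackwell example can be sketched as below. The function name, the two-stage simplification (packaging either complete or not), and the per-unit figures are illustrative assumptions, not the schema of the underlying dataset.

```python
def quarter_consumption(units: float, packaging_complete: bool,
                        logic_wafers_per_unit: float,
                        cowos_wafers_per_unit: float,
                        hbm_gb_per_unit: float) -> dict:
    """Components consumed by units whose logic die has finished fabrication."""
    # Logic wafers count as consumed as soon as the fab step is complete.
    row = {
        "logic_wafers": units * logic_wafers_per_unit,
        "cowos_wafers": 0.0,
        "hbm_gb": 0.0,
    }
    # CoWoS and HBM count only once packaging has actually happened.
    if packaging_complete:
        row["cowos_wafers"] = units * cowos_wafers_per_unit
        row["hbm_gb"] = units * hbm_gb_per_unit
    return row


# Units still in work-in-process at quarter end: fab done, packaging pending.
wip_row = quarter_consumption(units=1000, packaging_complete=False,
                              logic_wafers_per_unit=0.025,
                              cowos_wafers_per_unit=0.05,
                              hbm_gb_per_unit=100)
```

With these placeholder inputs, `wip_row` has a positive logic-wafer entry and zero CoWoS and HBM entries, matching the pattern described above.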

We model NVIDIA and AMD inventory directly from their year-end balance sheets, splitting the GPU-attributable share across raw materials, work-in-process, and finished goods, and phasing each category into the quarters in which the underlying components were likely consumed. Google and Amazon do not hold finished-chip inventory (their backend partners do), so for them we extrapolate Q1 2026 production volumes from 2025 trajectories instead.

The shipped-vs-inventory decomposition is an internal modelling detail rather than a schema dimension. All inventory consumption is folded into the chip-level rows.

The “Other” row captures global component supply not assigned to the four tracked designers. It can include several things — capacity consumed by chip designers we don’t track (e.g. Apple and Qualcomm for logic wafers, Chinese AI accelerator and HBM stockpiling for HBM and CoWoS), capacity that was available but not utilised in that period, upstream inventory held by partners we don’t directly model, or modelling error in our tracked-designer estimates. It should be read as a directional residual rather than a precise estimate of unused capacity.
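The residual itself is simple arithmetic: total supply minus the sum of tracked demand. A minimal sketch, with a hypothetical function name and placeholder quantities rather than actual estimates:

```python
def other_row(total_supply: float, tracked_demand: dict) -> dict:
    """Unattributed supply for one component in one quarter."""
    tracked = sum(tracked_demand.values())
    residual = total_supply - tracked
    # The residual can be negative if tracked demand is overestimated
    # or supply is underestimated, so it is not clamped at zero here.
    return {"other": residual, "other_share": residual / total_supply}


# Placeholder numbers in arbitrary units.
row = other_row(total_supply=100,
                tracked_demand={"NVIDIA": 50, "AMD": 10,
                                "Google": 15, "Amazon": 5})
```

Leaving the residual unclamped keeps modelling error visible instead of hiding it behind a zero.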

Annual residuals tend to be more reliable than quarterly ones. For full-year 2025, the probability that tracked demand exceeds total supply is approximately 0% for CoWoS and around 5% for HBM, so the annual residuals likely reflect a real gap. Some quarter-level residuals also line up with known events — the Q4 2024 HBM “Other” rises to ~38% of supply, consistent with reports that Chinese companies stockpiled HBM ahead of US export controls — and provide useful signal beyond noise.
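One way to read a figure such as "around 5%" is as the fraction of simulation draws in which tracked demand comes out above total supply. The sketch below computes that fraction from paired draws. The lognormal distributions and their parameters are placeholders chosen for illustration, not the model's inputs.

```python
import random


def prob_demand_exceeds_supply(demand_draws, supply_draws) -> float:
    """Fraction of paired draws in which demand is strictly above supply."""
    pairs = list(zip(demand_draws, supply_draws))
    exceed = sum(1 for demand, supply in pairs if demand > supply)
    return exceed / len(pairs)


rng = random.Random(0)  # fixed seed so the sketch is reproducible
n_draws = 10_000
# Hypothetical annual totals in arbitrary units.
demand_draws = [80 * rng.lognormvariate(0, 0.10) for _ in range(n_draws)]
supply_draws = [100 * rng.lognormvariate(0, 0.05) for _ in range(n_draws)]
p_exceed = prob_demand_exceeds_supply(demand_draws, supply_draws)
```

A low value of `p_exceed` means that in most draws some supply is left over, which is the sense in which a residual "likely reflects a real gap".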

All figures are estimates with 90% confidence intervals (P5 to P95) derived from 10,000 Monte Carlo simulation draws. We are reasonably confident in our annual estimates, which line up with several independent analyst and industry estimates (JP Morgan, SemiWiki, TrendForce, Global Semi Research, and GFHK) that serve as cross-checks. The cross-validation section in the methodology walks through each comparison.
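For readers unfamiliar with the P5 / P95 convention, the sketch below shows how such an interval can be read off a set of simulation draws using Python's standard library. The lognormal draws stand in for model output and are purely illustrative; the function name is hypothetical.

```python
import random
import statistics


def p5_p50_p95(draws):
    """Return the 5th percentile, median, and 95th percentile of the draws."""
    # n=20 yields 19 cut points at 5%, 10%, ..., 95%.
    cuts = statistics.quantiles(draws, n=20)
    return cuts[0], statistics.median(draws), cuts[-1]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
# Placeholder draws: a quantity centred near 1,000 with about 20% spread.
draws = [1000 * rng.lognormvariate(0, 0.2) for _ in range(10_000)]
low, mid, high = p5_p50_p95(draws)
```

The interval from `low` to `high` covers the central 90% of draws, so 5% of draws fall below it and 5% above.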

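Underlying uncertainty enters through the three modelling steps: estimating quarterly chip production, translating chip volumes into component demand, and estimating total quarterly component supply.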

Quarterly estimates carry more uncertainty than annual totals because they are additionally sensitive to:

Where a single quarter looks surprising, the annual total is a more reliable signal.

Total component cost in this hub captures only the accelerator-level bill of materials: logic die fabrication, CoWoS advanced packaging, HBM memory, and per-chip auxiliary components such as substrate, board, and final-assembly cost.

It does not include rack-scale or data-center-level costs — the infrastructure, networking switches and NICs, host CPUs, storage, cooling, power delivery, building shell, land, and operating overhead that surround the accelerators in a deployed AI system are all out of scope. So our 2025 estimate of roughly $53B in component spend across the four tracked designers is the cost of producing the chips themselves, not the cost of the data centers they ultimately end up in.


