Computing capacity (“compute”) is a critical input to the development, training, and deployment of AI systems. How much AI-optimized compute exists in the world, and who owns it? Earlier this year, we launched the AI Chip Sales explorer to track the first question. Today, we’re launching our AI Chip Owners explorer to track the second.

Our AI Chip Owners explorer presents interactive visualizations of our estimates of how many leading AI chips are owned by the largest US hyperscalers and cloud companies, one frontier AI developer (xAI), and Chinese customers, with breakdowns by chip family, chip model, and shifts in ownership over time. We build on our estimates of the total volumes of Nvidia, Google TPU, Amazon Trainium, AMD, and Huawei chips from the AI Chip Sales explorer, and distribute these chips among major owners using estimates from analysts and industry researchers, company financial disclosures, capital spending, and our analysis of frontier-scale AI data centers.

The AI Chip Owners explorer is intended as a resource for researchers, policymakers, and anyone tracking the strategic landscape of AI compute. You can visit the explorer here, and read on for highlights!

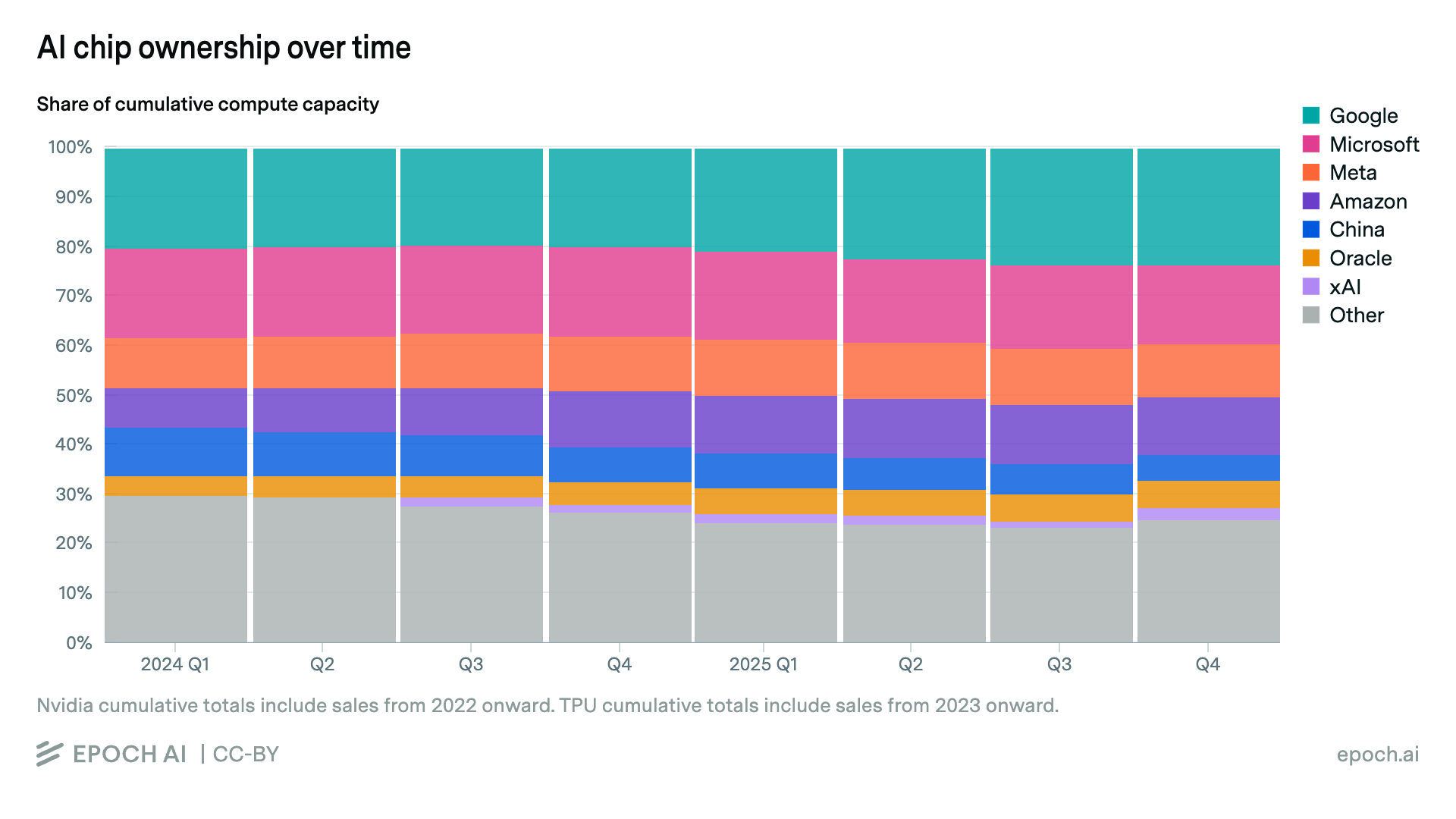

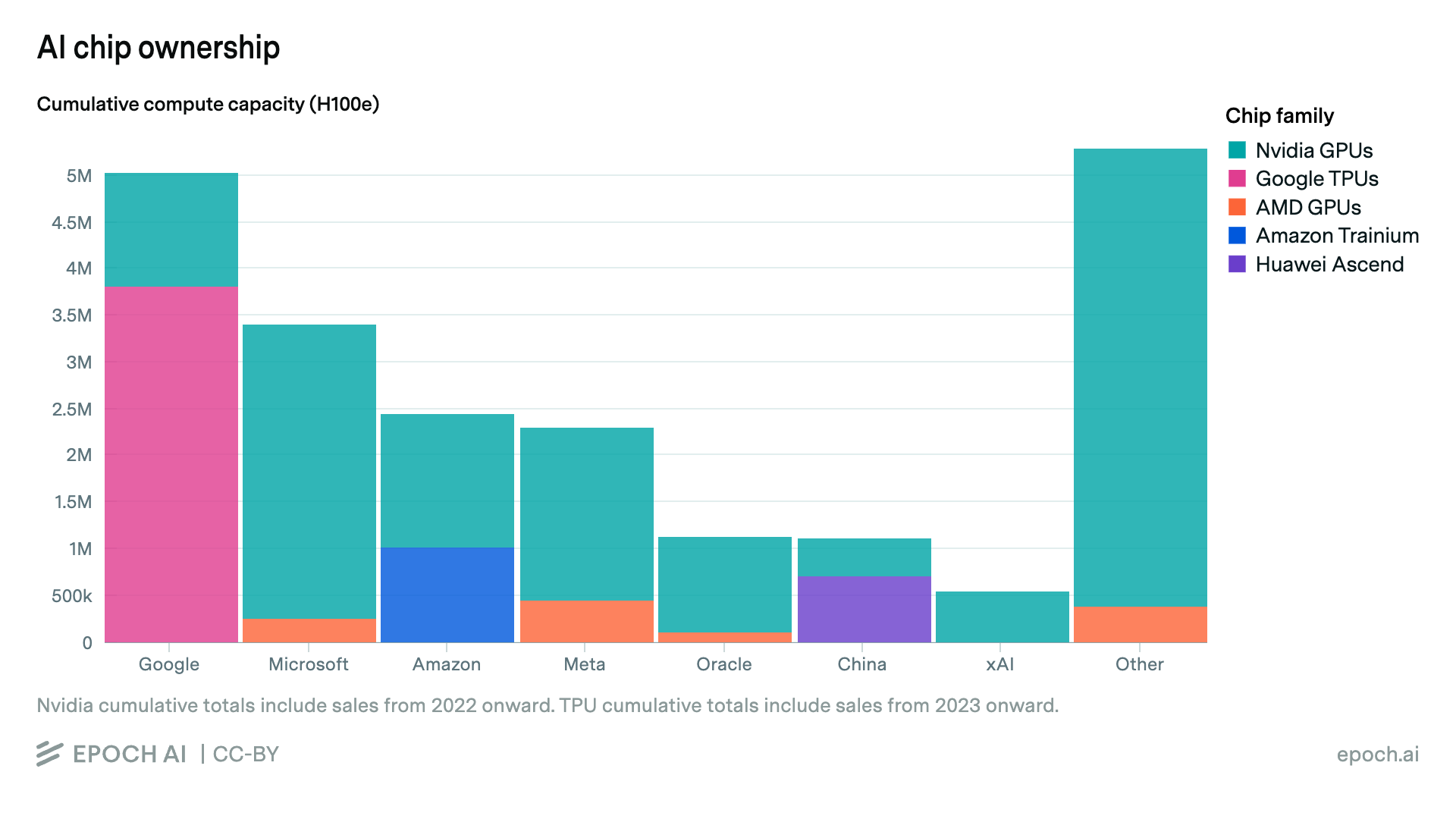

Hyperscalers own the majority of global AI compute

Most of the world’s AI computing power is owned by hyperscalers and large cloud companies. “Hyperscalers” are the leading companies in data center deployments; among US companies, this refers to Amazon, Google (Alphabet), Meta, Microsoft, and Oracle. We estimate that over 70% of global AI compute (in terms of total computing power) is owned by the five US hyperscalers, led by Google.

Google holds the equivalent of around 5 million Nvidia H100 GPUs in compute capacity, roughly 25% of the world’s total! Most of this capacity comes from its custom TPU chips, which we estimate have a total compute capacity of almost 4 million H100-equivalents [confidence interval: 3.1M to 4.5M]. For the other hyperscalers, Nvidia chips account for the majority of the AI compute they have acquired to date.
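As a rough sanity check on how these figures fit together, the short Python sketch below uses only the rounded numbers quoted in this paragraph. The variable and function names are illustrative, not taken from the explorer, and the results are back-of-envelope values rather than the explorer's own estimates.

```python
# Back-of-envelope arithmetic using only the rounded figures quoted above.
# These are NOT the explorer's underlying data; all names are illustrative.
GOOGLE_TOTAL_H100E = 5_000_000           # "around 5 million" H100-equivalents
GOOGLE_WORLD_SHARE = 0.25                # "roughly 25%" of the world's total
TPU_H100E = 4_000_000                    # "almost 4 million", rounded up here
TPU_CI_H100E = (3_100_000, 4_500_000)    # quoted confidence interval


def implied_total(owner_total: float, owner_share: float) -> float:
    """World total implied by one owner's capacity and its share of the world."""
    return owner_total / owner_share


def share_of(part: float, whole: float) -> float:
    """Fraction of `whole` accounted for by `part`."""
    return part / whole


world_total = implied_total(GOOGLE_TOTAL_H100E, GOOGLE_WORLD_SHARE)
tpu_share = share_of(TPU_H100E, GOOGLE_TOTAL_H100E)
# Crude range: holds Google's total fixed while the TPU estimate varies.
tpu_share_range = tuple(share_of(x, GOOGLE_TOTAL_H100E) for x in TPU_CI_H100E)

print(f"Implied world total: about {world_total / 1e6:.0f}M H100-equivalents")
print(f"TPU share of Google's compute: about {tpu_share:.0%}")
print(f"TPU share range: {tpu_share_range[0]:.0%} to {tpu_share_range[1]:.0%}")
```

Under these rounded inputs, the world total comes out near 20 million H100-equivalents, with TPUs making up roughly four fifths of Google's capacity. The range is only indicative, since Google's total would itself shift with the TPU estimate.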

Apart from Meta, the hyperscalers are all major cloud companies, meaning that they rent out part of their compute to other companies. Many frontier AI developers, including Anthropic and OpenAI, acquire almost all of their compute from hyperscalers and other cloud providers. OpenAI’s compute primarily comes from Microsoft, Oracle, and CoreWeave, and Anthropic’s from Google and Amazon, though neither should be assumed to be renting the entire capacity of these clouds. We will follow up soon with an analysis of how much compute is used by frontier AI developers like OpenAI and Anthropic, as well as the frontier model divisions housed within Google and Meta.

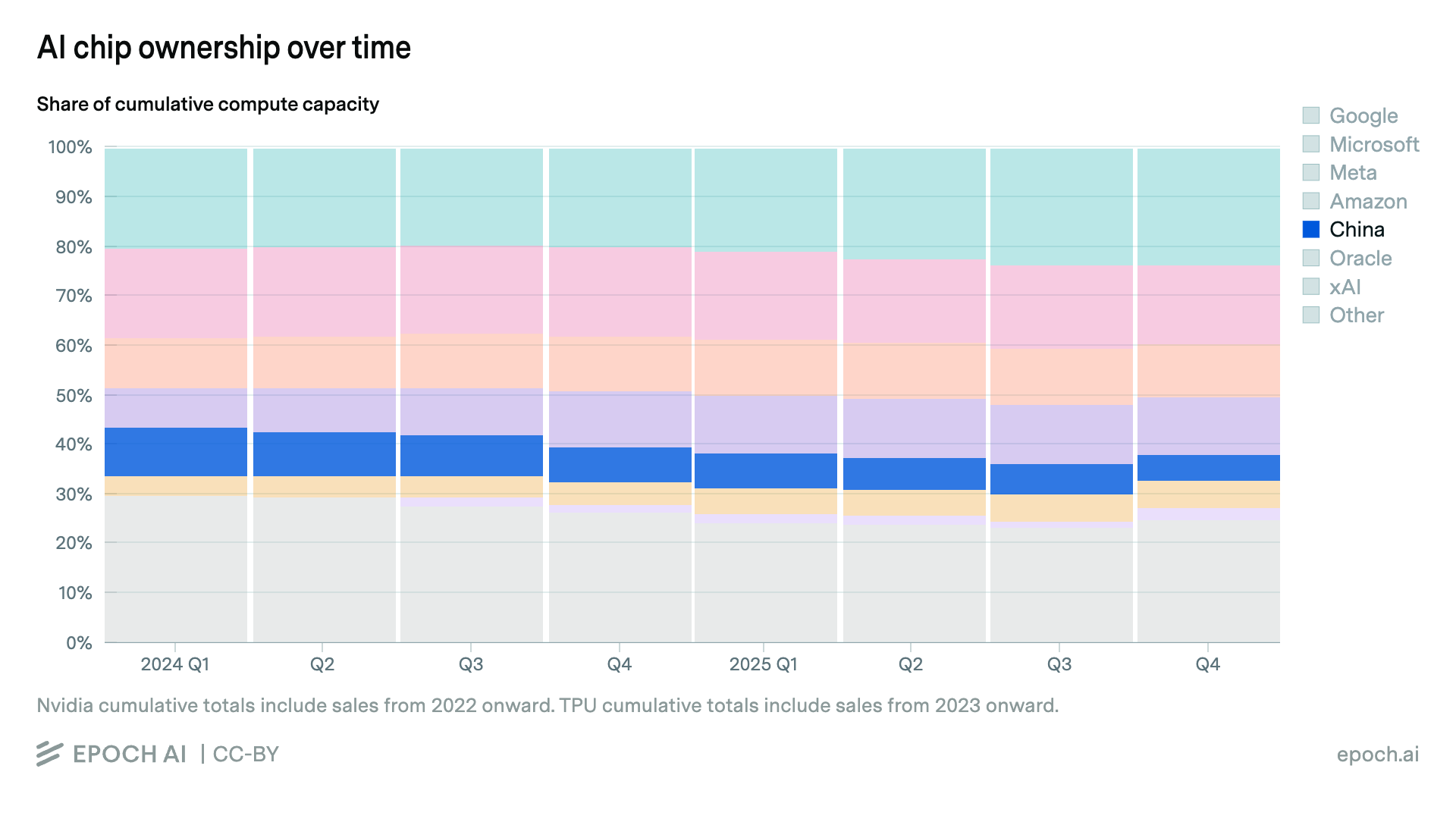

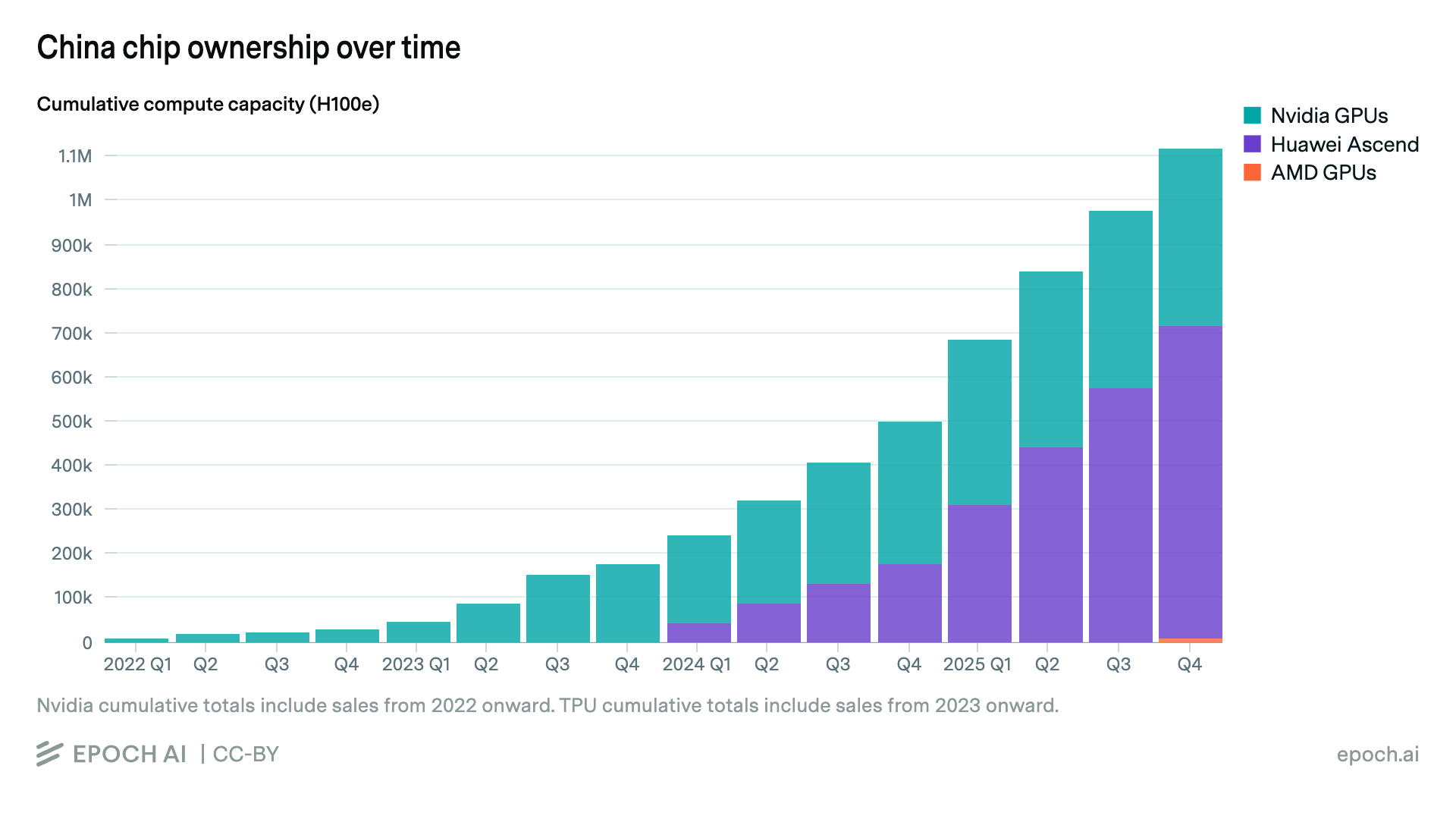

Chinese customers own just 5% of global AI compute

We estimate that as of the end of 2025, Chinese companies collectively own just over 5% of the cumulative computing power of the leading AI chips sold in recent years — less than any single top US hyperscaler, and a share that has decreased over time. We bucket Nvidia, AMD, and Huawei chips purchased by mainland Chinese customers into a single “China” category, with specific customer breakdowns left for future work.

Our estimates of Chinese compute do not include chips smuggled in contravention of US export controls. Prior research and recent reporting suggest these volumes may be significant. Grunewald and Fist estimate that over 100,000 Nvidia A100s and H100s were smuggled into China in 2024, though with significant uncertainty. Such imports continued through 2025, according to multiple reports of Nvidia chip shipments worth several billion dollars or more, equivalent to at least tens of thousands of chips. However, chip smuggling does not seem likely to add up to the millions of chips required to significantly narrow the compute gap between Chinese companies and US hyperscalers.

Like our other ownership figures, these China totals do not include any offshore cloud compute rented by Chinese companies.
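For a sense of scale on the uncounted chips discussed above, here is a rough Python sketch built only from rounded figures quoted in this post. It assumes, generously, that every one of the 100,000-plus A100s and H100s in the 2024 estimate counts as one full H100-equivalent. The names are illustrative, and the output is an order-of-magnitude illustration rather than an estimate from the explorer.

```python
# Order-of-magnitude illustration from rounded figures quoted in this post.
# These are NOT the explorer's underlying data; all names are illustrative.
GOOGLE_H100E = 5_000_000      # "around 5 million" H100-equivalents
GOOGLE_SHARE = 0.25           # "roughly 25%" of the world's total
CHINA_SHARE = 0.05            # "just over 5%", rounded down
UNCOUNTED_CHIPS = 100_000     # "over 100,000" A100s and H100s (2024 estimate)

# Assumption: treat every such chip as one full H100-equivalent.
# A100s have lower rated performance, so this is generous per chip.
H100E_PER_CHIP = 1.0

world_total = GOOGLE_H100E / GOOGLE_SHARE       # implied world total
china_h100e = CHINA_SHARE * world_total         # implied Chinese-owned compute
gap_to_google = GOOGLE_H100E - china_h100e      # gap to the largest owner
uncounted_h100e = UNCOUNTED_CHIPS * H100E_PER_CHIP
gap_closed = uncounted_h100e / gap_to_google    # fraction of the gap covered

print(f"Implied Chinese-owned compute: about {china_h100e / 1e6:.1f}M H100e")
print(f"Gap to Google: about {gap_to_google / 1e6:.1f}M H100e")
print(f"Share of gap covered by {UNCOUNTED_CHIPS:,} chips: {gap_closed:.1%}")
```

Even under this generous per-chip assumption, 100,000 chips cover only a few percent of the gap to the largest single owner. That is consistent with the point that closing it would take millions of chips.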

Notably, in the past year Huawei has overtaken Nvidia as the leading source of AI computing power in China, at least in terms of rated FLOP/s, which may not reflect real-world performance. This is due to a pause in official Nvidia exports to China following tightened controls on the Nvidia H20 chip. Nvidia is now preparing to export the more advanced H200 to China.

We plan to follow up this work with more coverage and analysis of how AI compute is allocated and used by major players, complementing our coverage of overall AI chip sales and frontier AI data centers to give a detailed overview of the world’s AI compute capacity.

To learn more, visit the AI Chip Owners explorer to find the methodology, full dataset, interactive visualizations, and more analysis!

Thanks to Brendan Halstead, Erich Grunewald, Konstantin Pilz, and Theo Bearman for their feedback.

About the authors