Reliable data and independent research

An open database of AI data centers that uses satellite and permit data to show compute, power use, and construction timelines.

Trusted by leading publications and institutions

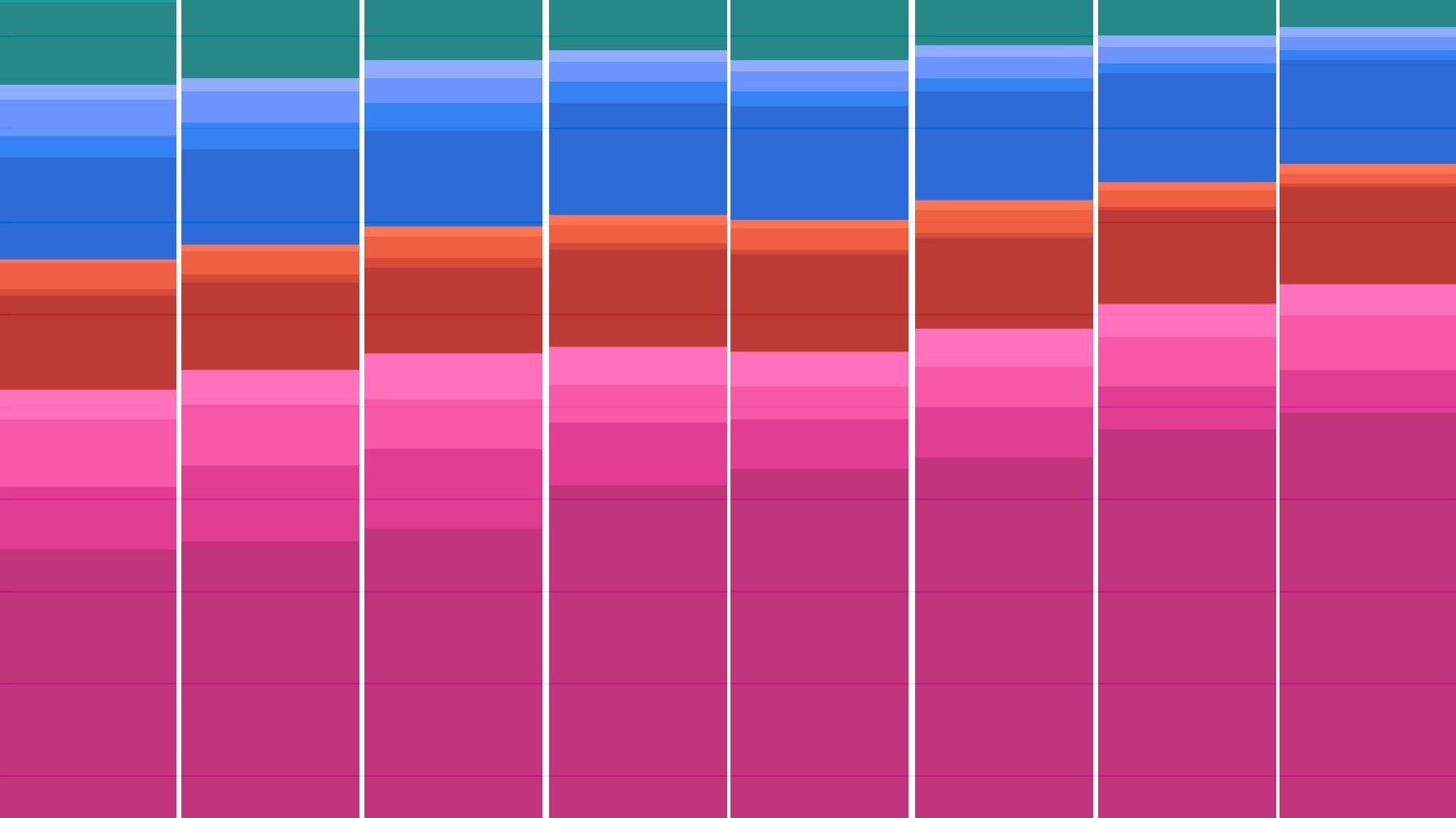

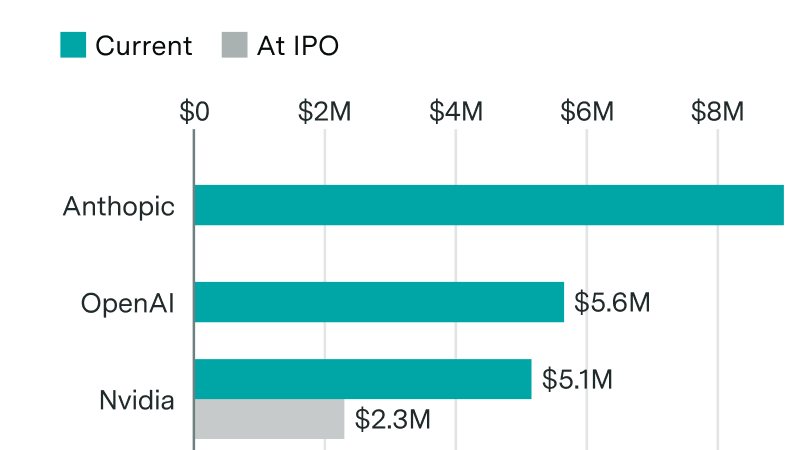

Total available computing capacity from AI chips across all major designers has grown by approximately 3.3x per year since 2022, enabling larger-scale model development and consumer adoption. NVIDIA AI chips currently account for over 60% of total compute, with Google and Amazon making up much of the remainder.

These estimates are inferred from revenue data, other financial disclosures, and analyst reports. Data coverage varies by manufacturer: NVIDIA and Google data begin in 2022, while others start in 2024.
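
To give a feel for what roughly 3.3x growth per year implies, the following minimal sketch converts that factor into a doubling time and a multi-year multiplier. It assumes growth is smoothly exponential at a constant rate, which is a simplification not stated in the estimates above; the function names are hypothetical and chosen only for illustration.

```python
import math

# Annual growth factor quoted in the text (approximate).
GROWTH_PER_YEAR = 3.3


def doubling_time_months(growth_per_year: float) -> float:
    """Months needed for capacity to double, assuming constant exponential growth."""
    return 12 * math.log(2) / math.log(growth_per_year)


def cumulative_growth(growth_per_year: float, years: float) -> float:
    """Total multiplier after `years`, assuming the same growth factor every year."""
    return growth_per_year ** years


if __name__ == "__main__":
    print(f"Doubling time: {doubling_time_months(GROWTH_PER_YEAR):.1f} months")
    print(f"Growth over 3 years: {cumulative_growth(GROWTH_PER_YEAR, 3):.1f}x")
```

Under these assumptions, capacity doubles about every seven months and grows roughly 36-fold over three years. Actual year-to-year growth may deviate from the average.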

3,200+ ML models tracked, 1950–today

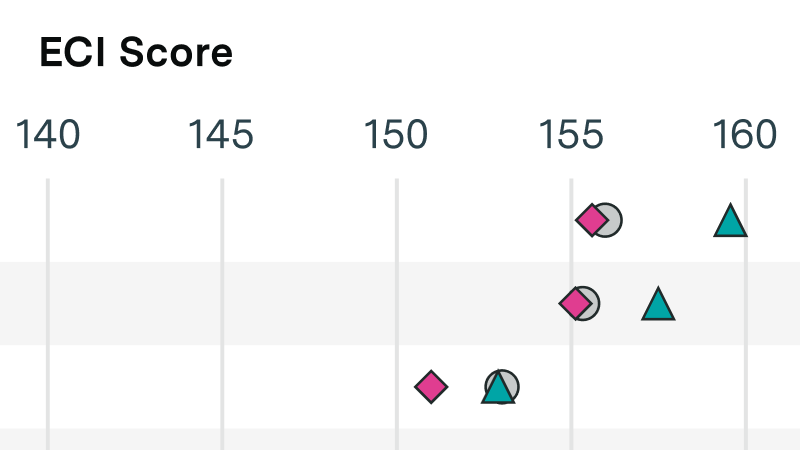

Model performance on key benchmarks

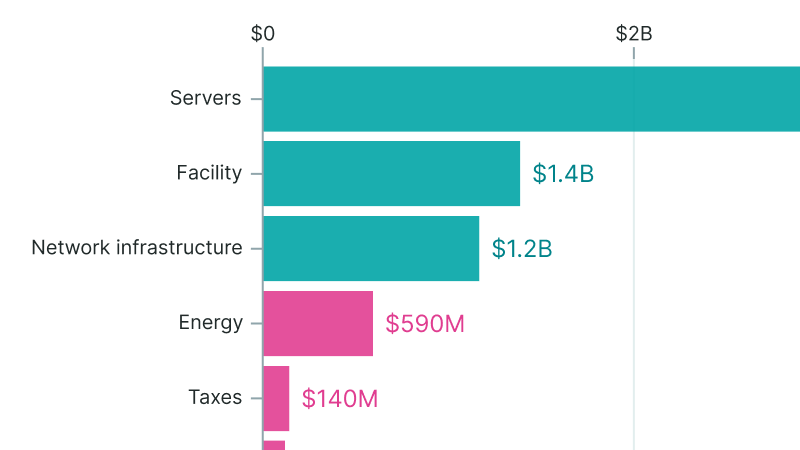

Open database of large AI data centers

Chip shipment volumes and revenue

Need deeper insights? Our team offers custom research and advisory services.

Book a consultation